The cloud of the 2020s was mostly a passive repository, a collection of remote data centers built primarily for basic compute and storage. But as we enter the next decade, that paradigm has fundamentally shifted. The 2030 cloud is no longer just a place to park big data; it has evolved into a dynamic, self-aware ecosystem.

We are now moving from static cloud infrastructures to an interconnected, intelligent fabric that extends from centralized data centers to the far edge, where AI runs close to the data. This rapid change is being driven by three key factors:

- Absolute Autonomy: Autonomous systems can now self-repair and automatically redeploy to perform optimally without human assistance.

- Pervasive Artificial Intelligence: AI and machine learning capabilities are embedded at every level of the infrastructure. Moreover, AI changes what raw compute power is used for, enabling organizations to forecast trends from large datasets and to manage complex global supply chains and smart cities.

- Strict Carbon Neutrality: As global computing demands skyrocket, the push for sustainability has forced this entire ecosystem to become completely carbon-neutral.

Today, the fundamental question isn't just where our data lives, but how independently, intelligently, and sustainably it operates.

In this article, we will cover:

- Next-Gen Cloud Infrastructure: The shift from centralized data silos to a fluid edge computing continuum.

- AI-Driven Architecture: How legacy servers are transforming into purpose-built AI factories.

- The Autonomous Shift: The leap from rule-based automation to intent-based, self-healing IT operations.

- AI-Optimized Efficiency: Leveraging predictive analytics and FinOps to maximize ROI.

- Sustainable Computing: Strategies for achieving a truly carbon-neutral data footprint.

The Dawn of Next-Generation Cloud Services

The transition to Generation 3 cloud services marks a clear departure from static, centralized legacy models. Instead of being locked into rigid data silos, this new era introduces a fluid, hyper-connected hybrid architecture. It is built to distribute workloads dynamically to wherever compute is most efficient.

Key Cloud Technology Trends Driving Innovation

Beyond cognitive models, massive structural networking shifts are redefining the cloud boundary. Here are the core developments shaping this new landscape:

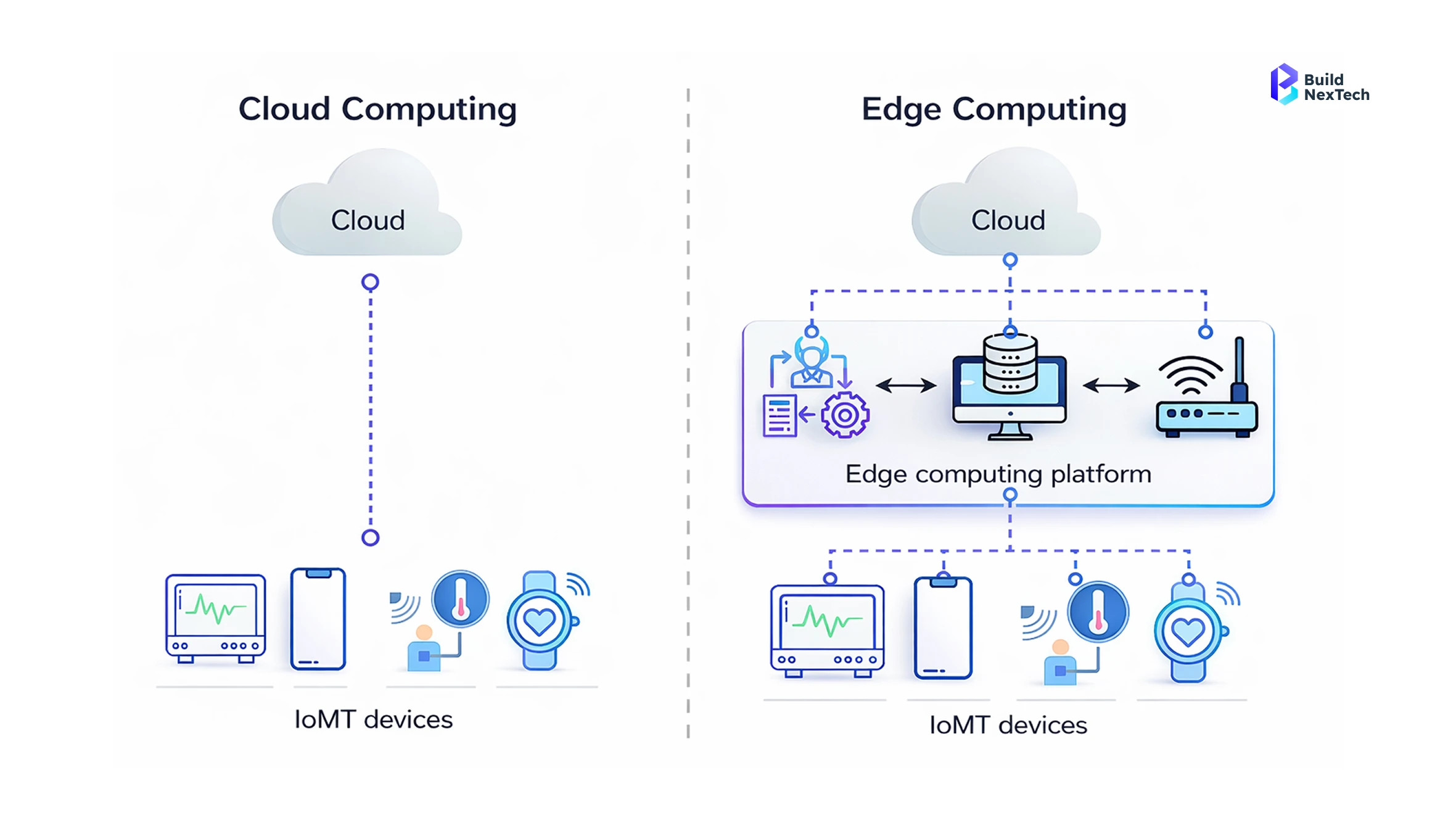

- The Edge Computing Continuum: The fusion of the core cloud and pervasive edge computing enables a seamless loop that shifts computation to the source of data generation itself.

- Next-Gen Connectivity: Ultra-fast 5G networks today, and 6G networks in the near future, provide the ultra-low latency that is a prerequisite for mission-critical applications.

- Future-Proof Security: With sensor networks and edge devices proliferating, future-proof security means protecting data in transit with post-quantum cryptography, so that it stays secure against the decryption capabilities that future generations of quantum computers promise to deliver.

Designing the Infrastructure for Intelligent Cloud Evolution

At the physical layer, legacy monolithic servers are rapidly being replaced by highly composable infrastructure. This hardware evolution is defined by a few key advancements:

- Dynamic Resource Pooling: Using high-speed interconnects like Compute Express Link (CXL) and PCIe, resources such as memory, storage, and compute are disaggregated and dynamically pooled on demand.

- Specialized Processing: This architectural shift is accelerated by Data Processing Units (DPUs) and specialized hardware designed to efficiently offload networking and security tasks from the main CPU.

- Advanced Thermal Management: Whether deployed in massive hyperscale facilities or as ruggedized edge technology near local devices, these dense configurations generate immense heat. This reality is driving the industry-wide adoption of advanced liquid cooling systems to keep everything running efficiently.

The Rise of AI-Driven Cloud Computing

To support the immense computational weight of modern AI and large-scale Generative AI models, hyperscalers are physically tearing down and rebuilding their data centers. The standard compute rack is no longer sufficient for these workloads. Instead, today’s facilities have transformed into purpose-built AI factories engineered exclusively to feed ravenous deep learning algorithms.

Architecting the Modern AI Cloud Platform

Training state-of-the-art foundation models on a modern AI cloud platform needs more than traditional CPU resources, and it is forcing cloud service providers to change their hardware infrastructure:

- Advanced Compute Clusters: Providers are architecting massive clusters of GPUs and Neural Processing Units (NPUs) connected via ultra-high-bandwidth fabrics like InfiniBand. This specialized hardware reduces the communication bottlenecks that typically throttle distributed neural network training across large-scale AI infrastructure.

- Supercomputing Capabilities: As seen with platforms like Azure Machine Learning, there is increasing reliance on dense, liquid-cooled supercomputing racks to process massive, petabyte-scale datasets at once.

- Optimized Networking: By refining the physical networking layer and deploying custom silicon, the modern cloud ensures that massive parameter updates occur with zero packet loss. This maximizes accelerator utilization while drastically minimizing training times.
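
To see why interconnect bandwidth matters so much for distributed training, the following is a rough back-of-the-envelope sketch. It assumes a ring all-reduce for gradient synchronization (a common pattern that this article does not name), ignores latency and compute overlap, and uses hypothetical model and cluster sizes.

```python
def ring_allreduce_seconds(param_count: int, bytes_per_param: int,
                           num_workers: int, link_gbps: float) -> float:
    """Bandwidth-only estimate of one ring all-reduce over the gradients.

    In a ring all-reduce each worker sends (and receives) roughly
    2 * (N - 1) / N times the gradient size. Latency is ignored.
    """
    if num_workers < 2:
        return 0.0  # a single worker has nothing to synchronize
    gradient_bytes = param_count * bytes_per_param
    bytes_on_wire = 2 * (num_workers - 1) / num_workers * gradient_bytes
    link_bytes_per_second = link_gbps * 1e9 / 8  # gigabits/s -> bytes/s
    return bytes_on_wire / link_bytes_per_second


# Hypothetical job: 7 billion parameters, 2-byte gradients, 64 workers.
for gbps in (100, 400):
    seconds = ring_allreduce_seconds(7_000_000_000, 2, 64, gbps)
    print(f"{gbps} Gb/s link: ~{seconds:.2f} s per gradient sync")
```

Under these assumptions, quadrupling the link speed cuts the synchronization time to a quarter, which is time the accelerators would otherwise spend waiting.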

Seamless Cloud AI Integration for Enterprise Workloads

Software-wise, hyperscalers are actively abstracting complex infrastructure into scalable, easily managed services. Managing raw infrastructure is no longer a concern for businesses developing Generative AI and enterprise AI models.

- Streamlined Data Connections: By leveraging native retrieval-augmented generation (RAG) services, businesses can connect their own data to Large Language Models with minimal effort, without building the retrieval pipeline from scratch.

- Comprehensive Tooling: Robust environments like Azure AI Studio and broader Azure AI Services now offer serverless API gateways alongside managed vector databases.

- Production-Ready Deployment: Integrated software frameworks support not only development but also the deployment of commercial-grade AI solutions, with scalability, full-stack observability, and robust security built in.
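
To make the RAG idea above concrete, here is a minimal sketch of the retrieve-then-prompt pattern. It is not any vendor's API: a bag-of-words cosine similarity stands in for a real embedding model and managed vector database, and the documents and function names are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lower-case bag-of-words term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the question."""
    q = tokenize(question)
    ranked = sorted(documents, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, documents: list[str]) -> str:
    """Augment the question with retrieved context before calling a model."""
    context = "\n".join(f"- {d}" for d in retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical company documents.
DOCS = [
    "Refunds are processed within 14 days of the return request.",
    "The Berlin office is closed on public holidays.",
    "Premium support is available to enterprise customers 24/7.",
]

print(build_prompt("How long do refunds take?", DOCS))
```

The resulting prompt would then be sent to a language model; a managed service replaces the hand-written `retrieve` step with an indexed, scalable equivalent.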

Transitioning to the Autonomous Cloud

The narrative is fundamentally shifting. We are no longer just building massive data centers to host and train machine learning workloads; we are now empowering those very same algorithms to autonomously operate, optimize, and run the cloud itself.

From Cloud Computing Automation to Autonomous Systems

The distinction between legacy IT automation and true autonomous systems is profound. We are moving away from rigid scripts toward dynamic, intelligent operations:

- Rule-Based vs. Intent-Based: Traditional automation is strictly rule-based: for instance, if CPU utilization exceeds 80%, spin up another instance. In contrast, autonomy is intent-based and driven dynamically by machine learning systems.

- Outcome-Driven Management: Administrators no longer micromanage instances; they simply define the desired outcome, such as maintaining sub-50ms latency at the lowest possible cost.

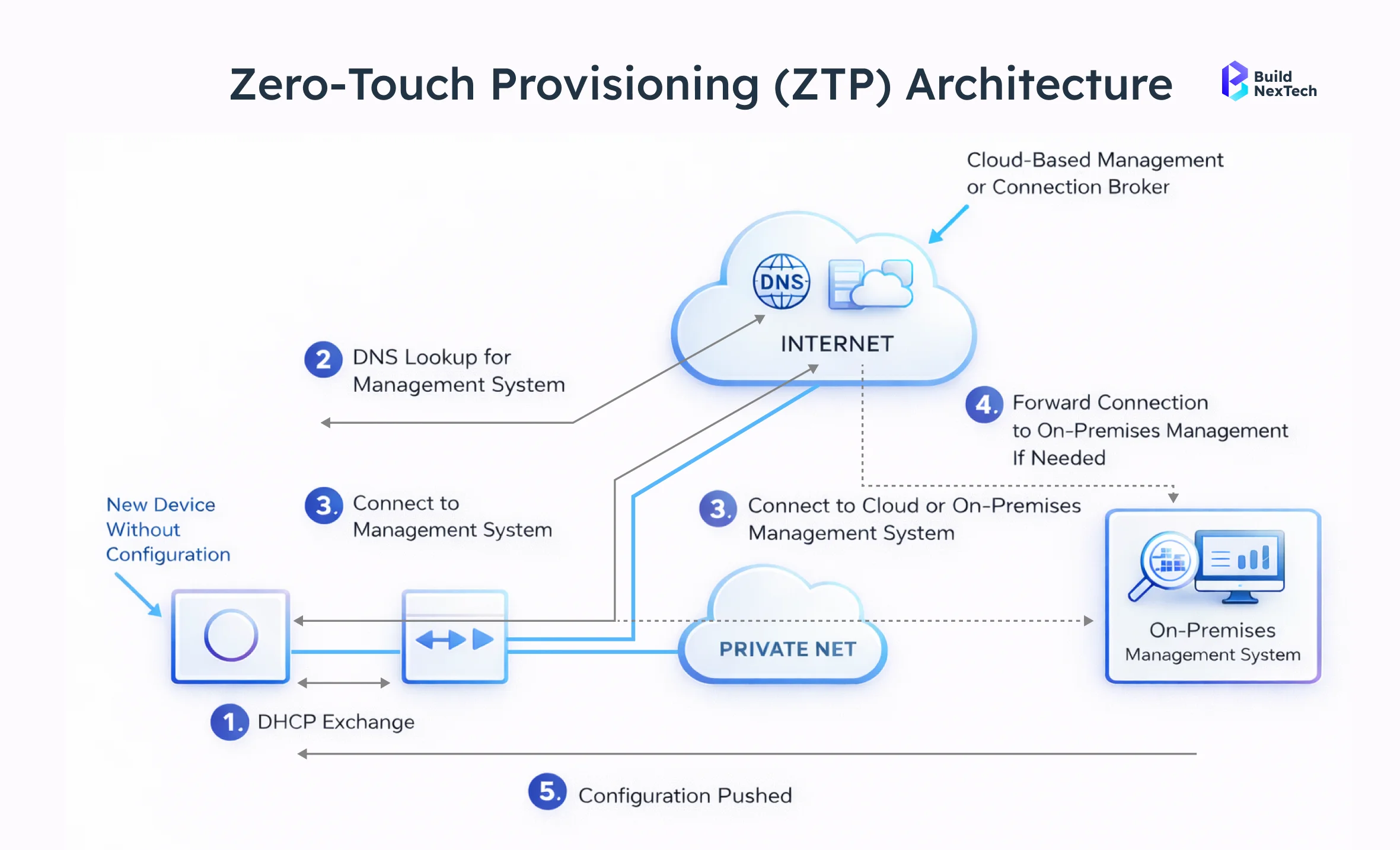

- Seamless Orchestration: From there, the system leverages "Zero-Touch Provisioning" to autonomously orchestrate resources, bypassing human bottlenecks entirely.

- Predictive Scaling: This cognitive leap allows networks to predictively manage massive pipelines of sensor data generated by the Internet of Things in sectors like smart healthcare, autonomous vehicles, and smart cities before traffic spikes even occur.
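
The contrast between the two styles can be sketched in a few lines. This is an illustrative toy, not a real orchestrator: the `Option` type and its numbers are hypothetical, and the predicted latencies are hard-coded where a real system would obtain them from a learned model.

```python
from dataclasses import dataclass


def rule_based_scale(cpu_percent: float, instances: int) -> int:
    """Legacy rule: add one instance when CPU utilization exceeds 80%."""
    return instances + 1 if cpu_percent > 80 else instances


@dataclass(frozen=True)
class Option:
    """A candidate deployment with predicted behaviour (hypothetical numbers)."""
    name: str
    instances: int
    predicted_latency_ms: float
    hourly_cost: float


def intent_based_choice(options: list[Option], max_latency_ms: float) -> Option:
    """Pick the cheapest option whose predicted latency meets the intent."""
    feasible = [o for o in options if o.predicted_latency_ms < max_latency_ms]
    if not feasible:
        # Nothing satisfies the intent: fall back to the fastest option.
        return min(options, key=lambda o: o.predicted_latency_ms)
    return min(feasible, key=lambda o: o.hourly_cost)


candidates = [
    Option("small", 2, 72.0, 0.40),
    Option("medium", 4, 46.0, 0.80),
    Option("large", 8, 31.0, 1.60),
]

print(rule_based_scale(cpu_percent=85, instances=2))
print(intent_based_choice(candidates, max_latency_ms=50).name)
```

The rule only reacts to one metric, while the intent-based function is handed the goal ("sub-50ms at the lowest cost") and is free to choose how to meet it.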

Leveraging Advanced Cloud Automation Tools for IT Ops

With the development of AIOps, the industry is moving from reactive troubleshooting to proactive, silent resolution, which is radically changing incident response.

- Continuous Monitoring: Today's smart systems continuously monitor remote nodes across distributed networks. Predictive maintenance also identifies failing nodes long before a failure becomes costly to repair.

- Automated Self-Healing: Once an anomaly is detected within an Azure Kubernetes Service cluster, the system will not only generate an alert but also take steps to put a self-repair strategy into effect right away.

- Real-Time Remediation: State-of-the-art algorithms can now create, test, and execute their own remediation scripts in real time, thereby massively reducing downtime and allowing IT groups to devote more attention to engineering resilient new hybrid environments.
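
As a rough illustration of the detect-then-remediate loop described above, the sketch below flags a metric sample that deviates sharply from recent history and immediately triggers a repair action. The z-score test is a deliberately simple stand-in for a production anomaly model, and `restart_node` is a hypothetical hook rather than a real orchestrator call.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag the latest sample if it lies more than `threshold` standard
    deviations from the mean of the recent history."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    spread = stdev(history)
    if spread == 0:
        return latest != history[0]
    return abs(latest - mean(history)) / spread > threshold


def restart_node(node: str) -> str:
    """Hypothetical remediation hook; a real system would call its orchestrator."""
    return f"restarted {node}"


def monitor_and_heal(node: str, history: list[float], latest: float) -> str:
    """Act, rather than only alert, when an anomaly appears."""
    if is_anomalous(history, latest):
        return restart_node(node)
    return "healthy"


error_rates = [0.9, 1.1, 1.0, 1.2, 0.8, 1.0]  # percent of failed requests
print(monitor_and_heal("node-a", error_rates, latest=1.1))
print(monitor_and_heal("node-a", error_rates, latest=9.5))
```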

Managing Autonomous Infrastructure Effectively

If self-operating cloud infrastructure does, in fact, eliminate the need for routine human intervention, the way IT professionals work will shift significantly. Rather than being replaced, they will move from hands-on SysAdmin roles to being strategic “Governors” of the company's IT systems.

This new operational model reshapes day-to-day responsibilities:

- Strategic Oversight: Instead of configuring firewalls, load balancers, and other settings by hand, they establish the broad business constraints, compliance rules, and acceptable levels of risk.

- Policy Alignment: Using robust governance platforms like Microsoft Purview, they ensure that the underlying AI models operating the infrastructure remain completely aligned with corporate policies.

- Continuous Validation: IT governance provides critical human-in-the-loop validation: as the cloud environment grows through self-managed resources, it ensures that the security posture (governed by an enterprise-class security solution such as Defender for Cloud) is upheld. In effect, this structure builds trust across the entire business that regulations and governmental standards are being met.

Unlocking Cloud Efficiency with AI Optimization

The true value of a self-managing cloud isn’t merely unparalleled uptime; it’s the unprecedented financial and operational efficiency it brings to the table. By continuously analyzing performance metrics, these intelligent platforms ensure that immense computational power runs leaner, faster, and significantly cheaper than legacy environments.

Strategies for Proactive Cloud Optimization

The period of reactively creating servers in response to sudden traffic spikes has come to an end. Modern infrastructure takes a more intelligent, forward-looking approach to resource management:

- Predictive Resource Management: The system leverages proactive, predictive scaling driven by sophisticated machine learning models to anticipate needs before they happen.

- Advanced Forecasting: By feeding large data pipelines into analytics engines such as Azure Databricks and Azure Synapse Analytics, the cloud can forecast demand anomalies hours ahead of time.

- Seamless Pre-Warming: Instead of waiting for capacity thresholds to break, the system intelligently pre-warms instances and prepares the network fabric. This ensures zero performance degradation for end-users while strictly avoiding the massive financial overhead of permanent over-provisioning.
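
The forecast-then-pre-warm idea can be sketched as follows. A least-squares linear trend stands in for the machine learning forecaster the article describes, and the traffic figures, per-instance capacity, and 20% headroom are all hypothetical.

```python
import math


def forecast_next(requests_per_min: list[float]) -> float:
    """Forecast the next interval by extending a least-squares linear trend."""
    n = len(requests_per_min)
    if n == 0:
        raise ValueError("need at least one observation")
    if n == 1:
        return requests_per_min[0]
    x_mean = (n - 1) / 2
    y_mean = sum(requests_per_min) / n
    slope_num = sum((x - x_mean) * (y - y_mean)
                    for x, y in enumerate(requests_per_min))
    slope_den = sum((x - x_mean) ** 2 for x in range(n))
    slope = slope_num / slope_den
    # Evaluate the fitted line one step past the last observation (x = n).
    return y_mean + slope * (n - x_mean)


def instances_to_prewarm(history: list[float], running: int,
                         capacity_per_instance: float,
                         headroom: float = 1.2) -> int:
    """Instances to start now so forecast demand plus headroom is covered."""
    demand = max(forecast_next(history), 0.0) * headroom
    needed = math.ceil(demand / capacity_per_instance)
    return max(needed - running, 0)


traffic = [1000, 1200, 1400, 1600, 1800]  # hypothetical requests per minute
print(forecast_next(traffic))
print(instances_to_prewarm(traffic, running=4, capacity_per_instance=500))
```

Because capacity is added ahead of the forecast rather than after a threshold breaks, the extra instance is ready before the load arrives, without keeping it running permanently.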

Utilizing Next-Gen Cloud Optimization Tools

To further maximize ROI, hyperscalers have introduced powerful autonomous FinOps capabilities that act as a dedicated financial brain for your infrastructure.

- Real-Time Cost Efficiency: Powerful artificial intelligence schedulers now perform real-time "spot instance arbitrage" to secure the best possible compute pricing.

- Intelligent Workload Routing: By leveraging unified management planes like Azure Arc, these tools dynamically migrate non-critical workloads to global regions where compute power is currently the cheapest.

- Automated Savings: This continuous, automated cost-optimization runs completely invisibly in the background. IT and finance teams simply monitor the resulting savings and efficiency metrics surfaced through highly visual dashboards in Microsoft Power BI. This ensures the enterprise maximizes its cloud budget without ever sacrificing operational performance.
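
A simplified view of the arbitrage decision is sketched below: choose the cheapest offer within a risk tolerance, then migrate only if the projected savings outweigh the cost of moving. The `SpotOffer` type, region names, prices, and risk estimates are hypothetical and do not reflect any provider's pricing API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class SpotOffer:
    """A spot-capacity quote; all values here are hypothetical."""
    region: str
    price_per_hour: float
    interruption_risk: float  # estimated probability of being reclaimed


def cheapest_acceptable(offers: List[SpotOffer], max_risk: float) -> Optional[SpotOffer]:
    """Return the lowest-priced offer within the risk tolerance, or None."""
    acceptable = [o for o in offers if o.interruption_risk <= max_risk]
    return min(acceptable, key=lambda o: o.price_per_hour, default=None)


def should_migrate(current: SpotOffer, candidate: SpotOffer,
                   hours_remaining: float, migration_cost: float) -> bool:
    """Migrate only if projected savings exceed the one-off cost of moving."""
    savings = (current.price_per_hour - candidate.price_per_hour) * hours_remaining
    return savings > migration_cost


current = SpotOffer("region-a", 0.50, 0.04)
offers = [
    current,
    SpotOffer("region-b", 0.31, 0.05),
    SpotOffer("region-c", 0.22, 0.30),  # cheapest, but too likely to be reclaimed
]

best = cheapest_acceptable(offers, max_risk=0.10)
print(best.region)
print(should_migrate(current, best, hours_remaining=10, migration_cost=0.50))
```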

Pioneering Sustainable Cloud Computing Practices

The aggressive pursuit of operational efficiency directly accelerates global sustainability goals. The modern ecosystem requires organizations to build energy-efficient systems that produce lower emissions across their entire operations. Hyperscalers are demonstrating that performance and sustainability go together: the less compute is wasted, the better the results on both counts.

Architecting the Green Cloud: Managing Cloud Energy

Physical energy management has evolved into a highly dynamic discipline. To achieve true sustainability and advance sustainable cloud computing, facilities are implementing intelligent, real-time strategies:

- Dynamic Workload Routing: Facilities now employ grid-aware computing, utilizing advanced telemetry to autonomously migrate non-critical workloads across the globe. They target data centers currently powered by active solar or wind generation, and this geographic load-balancing ensures maximum renewable energy utilization.

- Localized Efficiency: Simultaneously, localized innovations in edge computing and edge AI processing reduce the massive energy costs of long-haul transmission of data generated by the Internet of Things.

- Optimizing Facility Power: Combined with revolutionary liquid cooling, these intelligent strategies are pushing data center Power Usage Effectiveness (PUE) remarkably close to a perfect 1.0.
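
PUE is the ratio of the total energy a facility consumes to the energy consumed by its IT equipment alone, so a value of 1.0 would mean no energy at all goes to cooling, power conversion, or other overhead. The short calculation below illustrates this with hypothetical monthly energy figures.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy must include the IT load")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical monthly energy figures in kWh: IT load + cooling + other overhead.
it_load = 1_000_000
air_cooled = pue(it_load + 450_000 + 50_000, it_load)
liquid_cooled = pue(it_load + 80_000 + 40_000, it_load)

print(f"air-cooled PUE:    {air_cooled:.2f}")
print(f"liquid-cooled PUE: {liquid_cooled:.2f}")
```

With the same IT load, cutting cooling and overhead energy is the only way to move the ratio toward 1.0, which is why cooling technology features so prominently in efficiency efforts.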

Strategies for Achieving a Carbon-Neutral Cloud Footprint

In addition to hardware, corporate sustainability includes thorough governance processes and comprehensive software strategies. Here's how the industry is working to limit emissions at all levels of the computing stack:

- Developer-Driven Sustainability: Many cloud service providers now offer carbon-aware APIs for specifying how and where computational tasks are executed (e.g., in regions with low carbon intensity).

- Tracking and Governance: Enterprises are using governance tools (such as Microsoft Purview) and Power BI dashboards to track and report emissions reductions and to ensure accountability.

- Lifecycle Management: In addition, the industry is adopting circular economy practices, ensuring that servers and Internet of Things devices that are no longer usable are recycled at end-of-life.

- Permanent Elimination: For the remaining unavoidable emissions inherent to powering massive workloads, hyperscalers are making unprecedented financial investments in permanent carbon removal technologies, including advanced Direct Air Capture (DAC) facilities.
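
The carbon-aware placement idea can be sketched as a simple selection over per-region grid carbon intensity. The region names and intensity values below are hypothetical; in practice the figures would come from a provider or grid data source, and the function names here are illustrative rather than any real carbon-aware API.

```python
from typing import Dict, Optional, Set


def lowest_carbon_region(intensity_g_per_kwh: Dict[str, float],
                         allowed: Optional[Set[str]] = None) -> str:
    """Pick the permitted region with the lowest grid carbon intensity."""
    candidates = {region: grams for region, grams in intensity_g_per_kwh.items()
                  if allowed is None or region in allowed}
    if not candidates:
        raise ValueError("no permitted region has intensity data")
    return min(candidates, key=candidates.get)


def estimated_emissions_kg(energy_kwh: float, intensity_g_per_kwh: float) -> float:
    """Emissions estimate: energy used times grid carbon intensity."""
    return energy_kwh * intensity_g_per_kwh / 1000


# Hypothetical current grid intensity in gCO2e per kWh.
intensity = {"region-north": 45.0, "region-west": 210.0, "region-east": 390.0}
job_energy_kwh = 120.0

anywhere = lowest_carbon_region(intensity)
restricted = lowest_carbon_region(intensity, allowed={"region-west", "region-east"})

print(anywhere, estimated_emissions_kg(job_energy_kwh, intensity[anywhere]))
print(restricted, estimated_emissions_kg(job_energy_kwh, intensity[restricted]))
```

The `allowed` parameter reflects that placement is rarely unconstrained: data residency or latency requirements may rule out the greenest region, in which case the scheduler picks the best of what remains.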

Preparing for an Intelligent, Autonomous, and Sustainable Cloud Future

The 2030 cloud delivers its highest performance through three components: fully autonomous operation, pervasive artificial intelligence, and strict environmental sustainability. The entire ecosystem rests on a single fundamental requirement: trust. To use these advanced capabilities securely, organizations need to completely rethink how they test intelligent infrastructure.

Securing the Autonomous Cloud Through AI-Driven Testing and Validation

Continuously testing an autonomous, Generative AI-driven ecosystem presents a critical paradox. Running bloated, traditional testing pipelines consumes massive compute and energy, which actively defeats the purpose of maintaining an optimized, carbon-neutral cloud.

To solve this, the vital methodology for 2030 is frugal testing. Here is how the industry is adapting to secure the cloud without draining its resources:

- Smart Execution: By leveraging machine learning to intelligently execute only the most high-impact, necessary validations, enterprises can drastically reduce resource drain.

- Strategic Enablement: Buildnextech serves as the natural enabler for this operational shift, helping enterprises strike this delicate balance without draining resources.

- Holistic Preservation: By equipping organizations with forward-thinking frugal testing capabilities, Buildnextech ensures that your autonomous cloud architectures remain inherently secure, perfectly carbon-neutral, and financially lean. Ultimately, this approach is key to preserving the delicate efficiency of the modern enterprise.
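
One way to picture "executing only the most high-impact validations" is a budgeted selection: rank each check by its estimated chance of catching a failure per second of compute, then run as many as fit in the budget. This is an illustrative sketch, not Buildnextech's method; the `Validation` type and all probabilities and costs are hypothetical, and a real system would estimate the failure probabilities with a model trained on change history.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Validation:
    """A candidate check with hypothetical risk and cost estimates."""
    name: str
    failure_probability: float  # estimated chance this check catches a defect
    cost_seconds: float         # compute time needed to run it (must be > 0)


def select_validations(candidates: List[Validation],
                       budget_seconds: float) -> List[Validation]:
    """Greedily pick checks with the best risk-per-cost ratio within budget."""
    ranked = sorted(candidates,
                    key=lambda v: v.failure_probability / v.cost_seconds,
                    reverse=True)
    chosen, spent = [], 0.0
    for validation in ranked:
        if spent + validation.cost_seconds <= budget_seconds:
            chosen.append(validation)
            spent += validation.cost_seconds
    return chosen


suite = [
    Validation("auth-regression", 0.30, 60),
    Validation("full-e2e", 0.35, 900),
    Validation("billing-api", 0.20, 120),
    Validation("ui-snapshot", 0.02, 300),
]

for validation in select_validations(suite, budget_seconds=300):
    print(validation.name)
```

The greedy ratio heuristic is not guaranteed to be optimal, but it is cheap to compute, which fits the goal of spending as little as possible on deciding what to test.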

Conclusion: Thriving in the 2030 Cloud Landscape

As we transition into this next era of computing, organizations have to stop treating infrastructure as a passive utility and start treating it as a strategic asset. To successfully navigate this evolution, enterprises need to align around these core focus areas:

- Fluid Infrastructure: Breaking down centralized silos in favor of a seamless edge computing continuum.

- AI-Native Hardware: Upgrading from standard server racks to purpose-built, specialized supercomputing clusters.

- Intent-Based Autonomy: Empowering systems to self-heal, optimize, and orchestrate themselves to bypass human bottlenecks.

- Autonomous FinOps: Leveraging predictive analytics to ensure your massive compute power remains financially lean.

- Green Computing: Routing workloads dynamically to achieve a truly carbon-neutral footprint.

- Frugal Testing: Securing these self-running systems intelligently, without burning through massive amounts of compute resources.

Building a sustainable, AI-driven architecture is a heavy lift, and it certainly doesn't happen overnight. If you are figuring out where to start without draining your compute budget, explore our comprehensive guide on Frugal Testing to see how Buildnextech is helping enterprises secure the cloud of tomorrow, today.

People Also Ask

Q1. How will fully autonomous clouds change enterprise IT operations?

An autonomous cloud will handle routine operations, including resource allocation, scaling, and problem resolution, without human intervention. This frees your IT staff from firefighting urgent technical issues so they can dedicate their time to developing new business strategies.

Q2. What is an autonomous cloud and why is it important?

An autonomous cloud uses machine learning together with continuous feedback loops to perform automatic provisioning, self-repair, and system optimization. By reducing the scope for human error, it improves reliability, which makes it suitable for operating essential applications, including autonomous vehicle systems.

Q3. How does AI improve cloud strategy?

AI enhances cloud strategy and efficiency by improving cybersecurity threat identification, automating predictive maintenance, and allocating resources dynamically. Cloud strategy also benefits from AI's ability to analyze big data in near real time, resulting in both improved efficiency and large cost savings.

Q4. How green is the cloud right now, and what is a carbon-neutral cloud?

Today’s data centers consume a great deal of energy; carbon-neutral clouds, however, use “carbon-aware computing.” This strategy moves workloads to data centers powered by renewable energy, which reduces or offsets the environmental impact of training very large neural networks.

Q5. Is cloud computing a good career for the future if systems become autonomous?

Yes. Engineers will move toward higher-level governance while routine manual tasks disappear. Architects with the ability to secure autonomous systems, manage AI "blast radiuses," and create intricate hybrid architecture will be in high demand in the industry.
