Generative AI has transformed software development and accelerated digital transformation across many sectors. What began as proof-of-concept experiments with ChatGPT and GitHub Copilot has matured into full-scale AI systems integrated into enterprise architecture and hybrid cloud infrastructure.

AI-powered coding assistants can now produce production-quality code, automate code documentation, assist with code analysis, and improve developer productivity. Enterprises are evaluating platforms such as Amazon Q to simplify processes, shorten review cycles, and increase coding productivity.

However, beneath this surge in productivity lies a new form of technical debt. Unlike traditional tech debt tied to rushed development or legacy systems, this AI-driven debt comes from AI-generated code, shadow AI, autonomous AI agents, and poorly governed pilots. In this blog, we explore its risks and how organizations can manage it effectively.

Understanding Generative AI and Its Foundations

It is important to understand the foundations of Generative AI before analyzing its long-term effects on code quality, governance structures, and enterprise architecture.

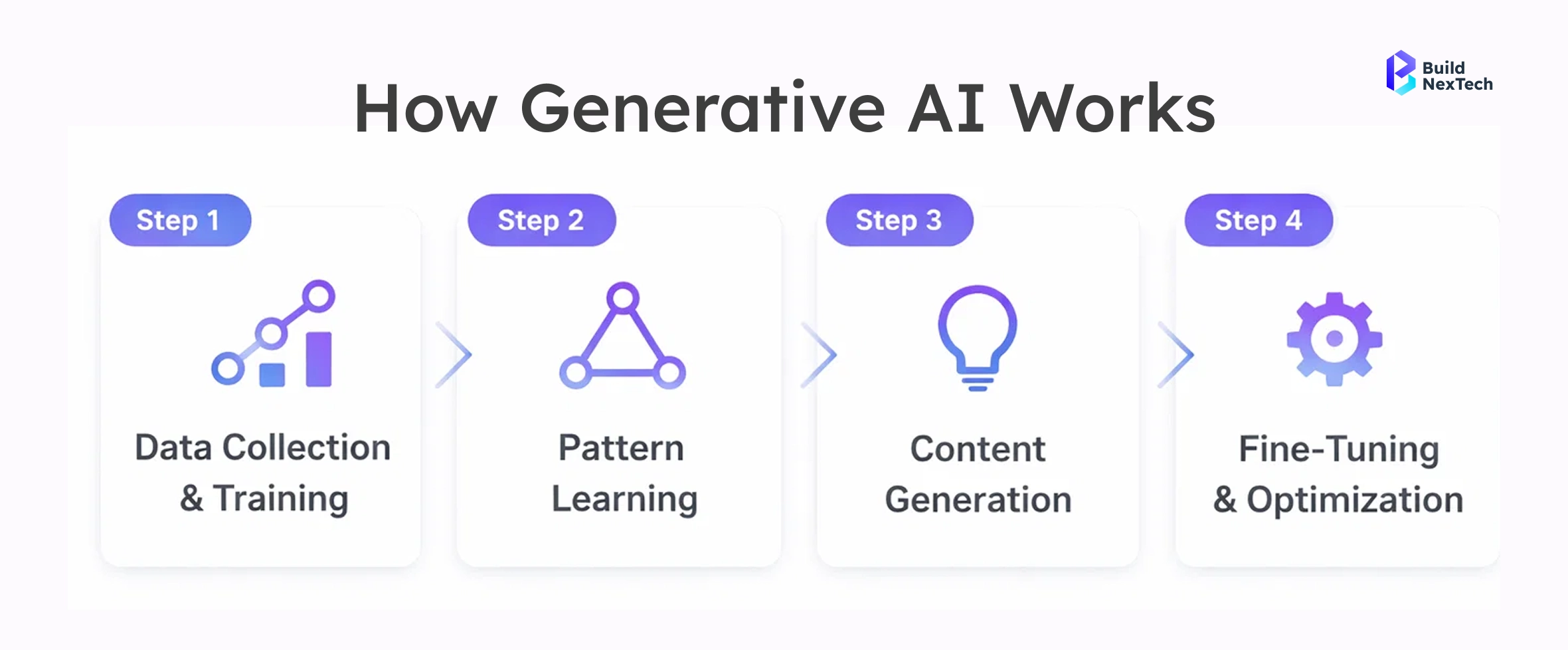

How Generative AI Works

Generative AI builds on machine learning and large language models (LLMs) trained on massive datasets. These models predict likely continuations of code, documentation, and natural language from statistical patterns in their training data. Although the output often follows Clean Code principles on the surface, the models have no real mental model of architectural validity, legacy systems, or complex enterprise constraints.

When AI-powered coding assistants are used to build .NET applications, API wrappers, or hybrid cloud infrastructure, the output often looks polished and production-ready. However, developers who ship that code without understanding its underlying logic accumulate comprehension debt and epistemic debt: the team ends up owning code that no one on it fully understands. Over time this degrades code health and raises the cost of technical debt remediation.

Code reviews and disciplined software engineering practice remain essential to keeping software systems scalable.

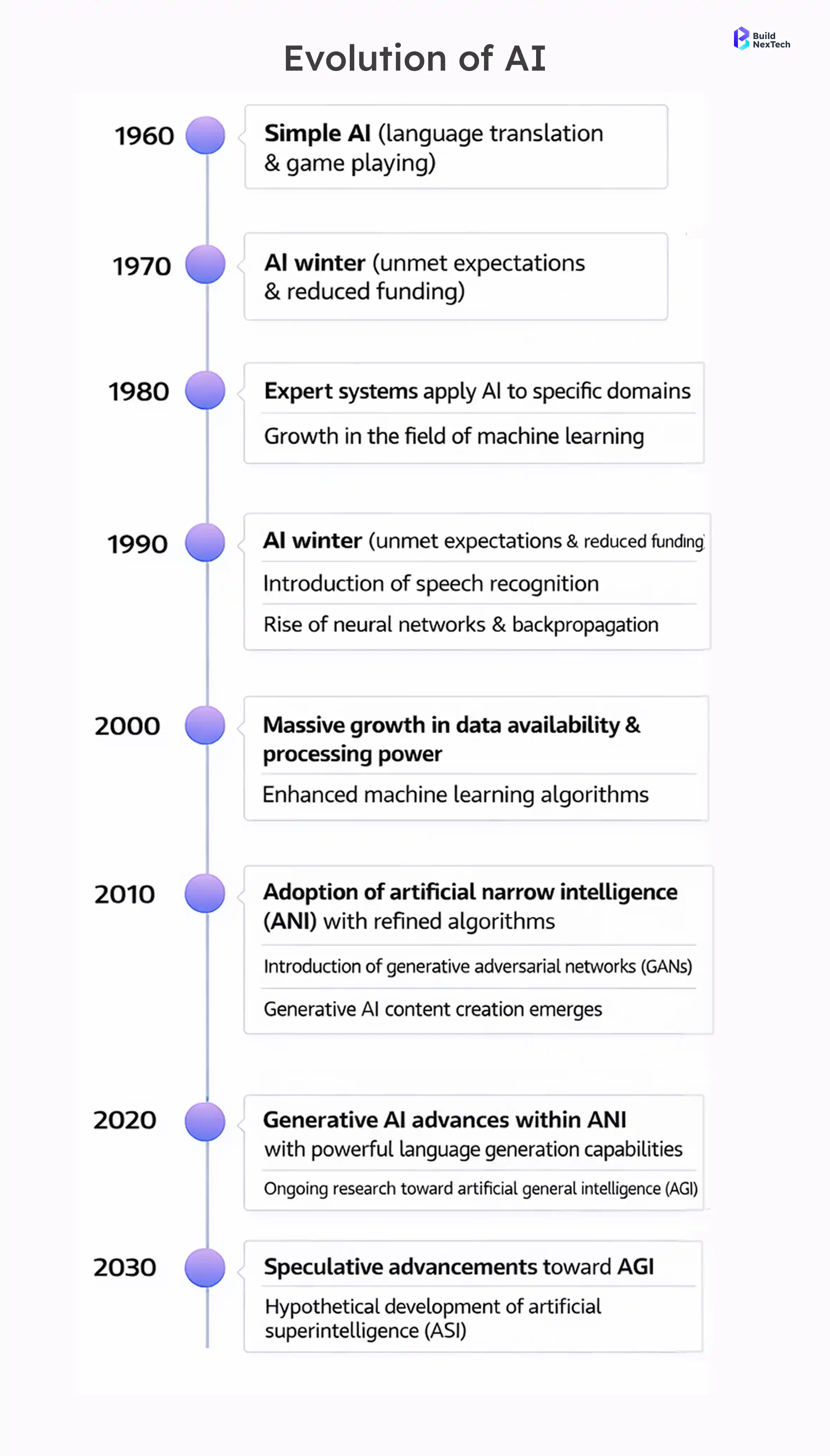

The Rapid Evolution of Generative AI

The rapid development of AI models has extended their capabilities beyond simple code generation. Companies are adopting Retrieval-Augmented Generation (RAG) to integrate their knowledge bases with language models, and are fine-tuning those models as well.

Agentic AI and autonomous AI agents can now make semi-independent decisions within enterprise ecosystems. While this can improve both employee and customer experience, it also increases governance complexity.

Shadow agents and shadow AI often emerge without centralized oversight, creating security gaps and data governance challenges. Model versions change rapidly, GPU stacks evolve, vendor hardware requirements increase, and cloud capabilities expand. Without interoperable standards and strong governance tools, enterprises risk escalating modernization costs and fragmented enterprise architectures.

Technical Debt in the Age of Generative AI

Technical debt has historically been associated with legacy systems, COBOL code maintenance since the Y2K crisis, and rushed modernization initiatives. Today, Generative AI introduces a new form of tech debt that accumulates faster and spreads wider.

What Is Technical Debt?

Technical debt is the long-term price of short-term trade-offs in software development. It affects IT budgeting, cost of ownership, and technical operations across enterprise architectures.

Technical debt may result from legacy IT systems, undocumented legacy applications, security vulnerabilities, or unfinished digital transformation initiatives. As companies embark on digital transformations to modernize their digital core and incorporate AI tools into cloud computing infrastructure, technical debt is increasingly linked to governance structures and enterprise security concerns.

The financial implications extend beyond initial cost and affect long-term Total Cost of Ownership.

How Generative AI Creates a New Form of Debt

Generative AI accelerates Code Production, but it can also introduce:

- Inconsistent architectural patterns

- Security flaws embedded in AI-generated code

- Poor integration with legacy systems

- Dependency on external LLM API tokens

- Insufficient code analysis and documentation

This contributes to what many describe as the AI Trust Paradox, where increased reliance on AI models creates a widening Trust Gap between developers and C-suite executives. While coding productivity may rise, comprehension depth may decrease.

In environments dominated by Vibe Coding, speed often overrides discipline. Without governance frameworks and structured peer review processes, enterprises risk compromising architectural soundness and increasing long-term remediation costs.

BNXT AI – Generative AI Development & Intelligent Design provides frameworks for architecture validation and secure model deployment.

The Cost of Ignoring AI-Generated Debt

If neglected, AI-generated code can lead to higher SaaS costs, more frequent security patch cycles, and security incidents. Model drift can cause unpredictable system behavior, particularly in systems built on Retrieval-Augmented Generation.
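
One lightweight way to watch for model drift is to replay a fixed set of prompts and compare the answers against recorded baselines. The sketch below is illustrative only: `generate` is a hypothetical callable standing in for whatever model client a team uses, the 0.8 threshold is an arbitrary assumption, and plain string similarity is only a rough proxy for a real change in behavior.

```python
from difflib import SequenceMatcher
from typing import Callable, Dict, List


def drift_report(
    generate: Callable[[str], str],   # hypothetical model client: prompt -> answer
    golden: Dict[str, str],           # recorded baseline answers per prompt
    threshold: float = 0.8,           # assumed cut-off, tune per use case
) -> List[str]:
    """Return the prompts whose current answer no longer resembles the baseline."""
    drifted = []
    for prompt, baseline in golden.items():
        current = generate(prompt)
        # ratio() is 1.0 for identical strings and falls toward 0.0 as they diverge.
        similarity = SequenceMatcher(None, baseline, current).ratio()
        if similarity < threshold:
            drifted.append(prompt)
    return drifted


# Usage with stand-in models (no network calls involved).
baseline_answers = {"capital of France?": "Paris", "2+2?": "4"}


def stable_model(prompt: str) -> str:
    return baseline_answers[prompt]


def changed_model(prompt: str) -> str:
    return "Paris" if "France" in prompt else "I cannot answer that question"


print(drift_report(stable_model, baseline_answers))
print(drift_report(changed_model, baseline_answers))
```

Running a check like this on a schedule, and again whenever a provider announces a new model version, turns drift from a surprise into a reviewable report.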

Day 2 operations, meaning the work of running and maintaining a system after launch, can expose latent vulnerabilities. Governance costs rise, data security weaknesses surface, and system failures become more likely in hybrid cloud environments.

As organizations expand their use of AI, enterprise security planning must adapt to prevent the spread of shadow AI and data privacy risks.

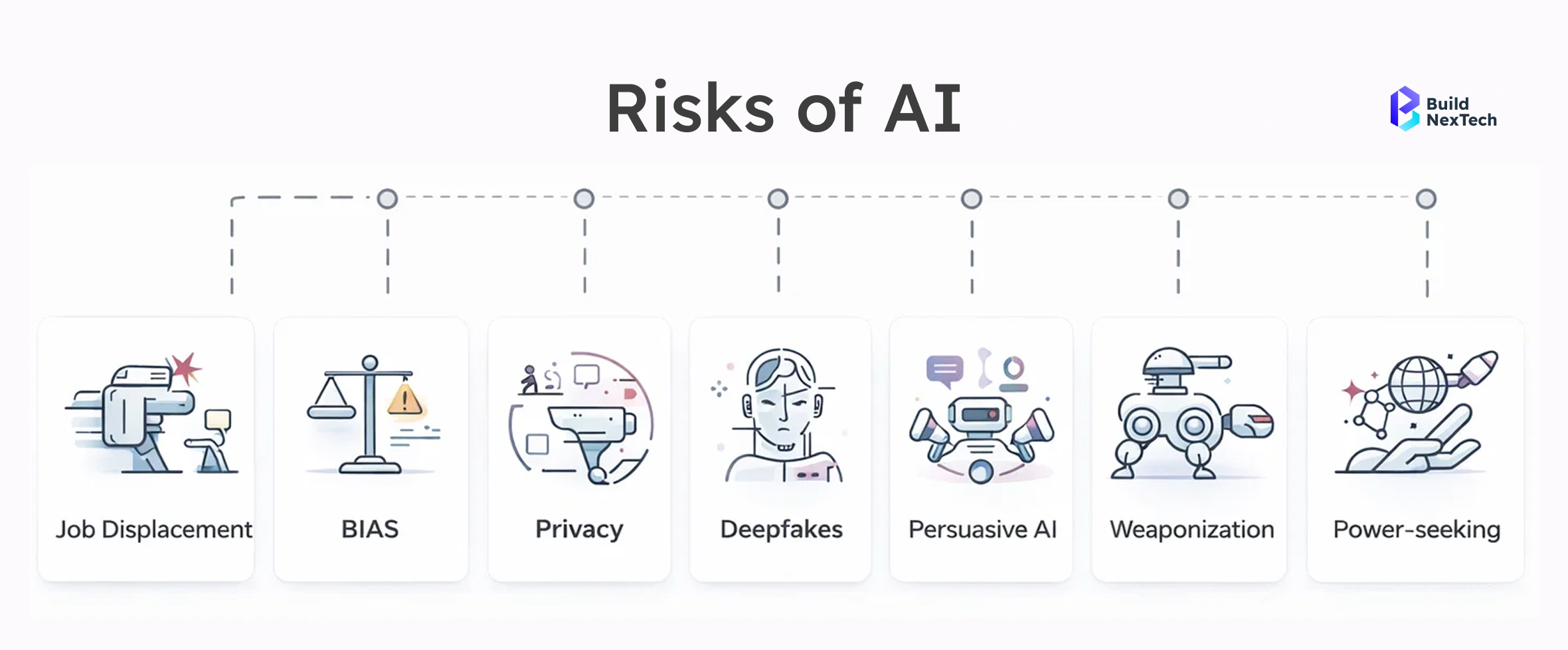

The Hidden Risks of Generative AI

In addition to code quality, Generative AI poses operational and security risks.

Security and Privacy Concerns

AI-generated code can contain security flaws that evade traditional security scans. Prompts can also expose sensitive information when proper data governance policies are not in place.

Organizations must evaluate:

- Data privacy implications

- SOC 2 compliance requirements

- AI security posture

- Governance tools for monitoring model versions

- Secure API wrappers and LLM integrations

Security gaps in AI systems can undermine enterprise architectures and increase exposure to security incidents.
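
To make the idea of a secure API wrapper concrete, here is a minimal sketch that redacts obvious secrets from a prompt before it leaves the organization. Everything in it is an assumption for illustration: the two regular expressions are deliberately simple and will miss many real-world cases, and `send` is a hypothetical stand-in for an actual LLM client call.

```python
import re
from typing import Callable

# Illustrative patterns only; a production wrapper would rely on a vetted detector.
_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "API_KEY": re.compile(r"\b(?:sk|key|token)[-_][A-Za-z0-9]{16,}\b"),
}


def redact(prompt: str) -> str:
    """Replace anything matching a known sensitive pattern with a placeholder."""
    for label, pattern in _PATTERNS.items():
        prompt = pattern.sub(f"[{label}_REDACTED]", prompt)
    return prompt


def guarded_call(send: Callable[[str], str], prompt: str) -> str:
    """Send only the redacted prompt to the (hypothetical) LLM client."""
    return send(redact(prompt))
```

Routing every LLM call through a single wrapper like this also gives governance teams one place to add logging, rate limits, or stricter filters later.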

Operational and Reliability Risks

Adopting AI solutions deepens dependencies on cloud services, GPU stacks, and hardware ecosystems. Model version changes or unpredictable autonomous AI agents can cause system failures.

Without planning for these dependencies, modernization costs rise and the cost of ownership exceeds initial estimates. Enterprise architectures need to be designed for scalability and reliability.
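
The risk from model version changes can be reduced by pinning the exact model a service was tested against and failing fast when something else is served. The following is a sketch under stated assumptions: `ModelPin`, `verify_served_model`, and the set of floating aliases are hypothetical names, and how a provider reports the served model name and version varies by vendor.

```python
from dataclasses import dataclass

# Hypothetical alias names that resolve to "whatever is newest".
FLOATING_TAGS = {"latest", "stable", "default"}


@dataclass(frozen=True)
class ModelPin:
    """The exact model a service was tested against."""
    name: str
    version: str

    def __post_init__(self) -> None:
        # Refuse aliases that can silently change underneath the application.
        if self.version.lower() in FLOATING_TAGS:
            raise ValueError(
                f"{self.name}: pin an explicit version, not '{self.version}'"
            )


def verify_served_model(pin: ModelPin, served_name: str, served_version: str) -> None:
    """Raise if the model reported by the provider differs from the pin."""
    if (served_name, served_version) != (pin.name, pin.version):
        raise RuntimeError(
            f"expected {pin.name}@{pin.version}, got {served_name}@{served_version}"
        )
```

A mismatch then surfaces as an explicit error at startup or in a health check rather than as a subtle change in application behavior.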

Workforce and Employment Impact

Generative AI is transforming the employee experience and redefining technical work. Although developer productivity improves, teams need to spend more time on knowledge management, documentation, and review.

The transition to AI-powered coding assistants means that developers need to work more on governance, architecture, and enterprise security.

Advantages vs. Disadvantages of Generative AI

Generative AI offers substantial benefits:

- Accelerated software development

- Enhanced coding productivity

- Faster AI pilots

- Improved software code documentation

- Support for digital transformation initiatives

However, disadvantages must be carefully managed:

- Comprehension Debt and Epistemic debt

- Model Drift

- Security flaws and data security risks

- Governance complexity

- Rising Total Cost of Ownership

- Increased technical debt remediation requirements

The AI Trust Paradox underscores the importance of aligning innovation with structured governance frameworks.

Mitigating the Risks of AI-Generated Technical Debt

Responsible AI adoption necessitates careful governance and architectural planning.

Establish Strong Engineering Practices

Organizations must promote Clean Code principles, code reviews, peer review meetings, and architectural soundness checks before deploying AI-generated code.

Governance structures must cover shadow AI identification, model version management, secure API management, and Enterprise Security integration.
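
Shadow AI identification can start with something as simple as scanning dependency manifests for AI SDKs that nobody approved. The sketch below assumes a Python project with `requirements.txt`-style lines; the `AI_PACKAGES` and `APPROVED` sets are hypothetical examples of an organization's policy, not a recommended list.

```python
import re
from typing import Iterable, List, Set

# Hypothetical policy: SDKs treated as "AI tooling" and the subset that is approved.
AI_PACKAGES: Set[str] = {"openai", "anthropic", "langchain", "transformers"}
APPROVED: Set[str] = {"openai"}


def unapproved_ai_deps(
    requirement_lines: Iterable[str],
    ai_packages: Set[str] = AI_PACKAGES,
    approved: Set[str] = APPROVED,
) -> List[str]:
    """Return AI-related package names that appear without being approved."""
    found = []
    for line in requirement_lines:
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        # The package name ends at the first version, extras, or marker character.
        name = re.split(r"[<>=!~\[; ]", line, maxsplit=1)[0].lower()
        if name in ai_packages and name not in approved:
            found.append(name)
    return found
```

A check like this could run in continuous integration so that a new AI dependency triggers a governance review instead of slipping in unnoticed.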

Continuous Learning and Upskilling

Developers and C-level executives must understand machine learning basics, language model fine-tuning, Retrieval-Augmented Generation techniques, and AI security fundamentals.

Closing the Trust Gap demands a cross-functional understanding of governance structures, IT budget implications, and modernization costs of AI deployments.

Regulatory and Ethical Considerations

AI systems must comply with SOC 2 trust requirements, data privacy regulations, and enterprise-class data governance. Hybrid cloud infrastructure strategies must include AI security and interoperable standards to enable sustainable enterprise architecture.

The Future of Generative AI in Software Engineering

Generative AI will continue shaping software engineering, enterprise architectures, and cloud computing strategies.

Emerging Trends

Emerging developments include:

- Agentic AI systems

- Autonomous AI agents embedded in workflows

- Advanced code analysis tools

- AI Fortress security models

- Integration of Knowledge Base systems with large language models

Preparing for the Next Wave

Enterprises must prioritize technical debt remediation, strengthen governance tools, manage modernization costs, and protect architectural soundness.

Scalable software development in hybrid cloud infrastructure environments requires balancing innovation with disciplined oversight.

Conclusion

Generative AI is transforming the landscape of software development. AI adoption is accelerating rapidly across industries. However, without proper governance and strong data oversight, this increased speed can lead to hidden technical debt.

Sustainable innovation requires balancing speed with sound architecture, enterprise security, and cost consciousness.

Avoiding Tomorrow’s Debt

Avoiding tomorrow’s debt means applying the same diligence to AI-generated code that is applied to traditional software development. This includes code reviews, monitoring for model drift, governance tools, and continuous technical debt reduction to avoid security holes and a rising Total Cost of Ownership.

Platforms such as buildNexTech provide infrastructure and advisory services that help organizations incorporate governance, compliance, and secure AI practices from the outset. This allows teams to mitigate comprehension debt and avoid rework later in the software development process.

Building Sustainably with AI

Building sustainably with AI means integrating AI systems with enterprise architecture, hybrid cloud infrastructure, and scalable software design. By incorporating governance structures, data privacy norms, and enterprise security measures from the beginning, organizations can make generative AI a strength rather than a weakness.

The future belongs to teams that innovate with confidence and build responsibly.

People Also Ask

1. What is AI-generated technical debt?

AI-generated technical debt refers to the hidden long-term risks created by AI-produced code. This can include security vulnerabilities, poor integration with legacy systems, and challenges in maintaining code quality over time.

2. How does generative AI increase technical debt in software projects?

Generative AI accelerates coding but can introduce inconsistent architecture, undocumented logic, and dependency on external APIs. Without proper oversight, these issues accumulate and increase the cost of remediation.

3. Is AI-generated code safe for production environments?

AI-generated code is not automatically safe for production. It requires thorough reviews, testing, and adherence to governance frameworks to ensure reliability, security, and compliance.

4. How can engineering teams reduce AI-related technical debt?

Teams can reduce AI-related technical debt by implementing Clean Code practices, peer reviews, code audits, and secure AI deployment strategies. Platforms like BNXT AI offer frameworks for governance and compliance.
