Artificial intelligence is transforming enterprises worldwide from Bengaluru-based fintech companies to New York and London global healthcare providers. Organizations face a single trust issue that impacts their return on investment when they scale Generative AI systems because of AI hallucinations. The use of AI assistant systems for customer support and the automation of financial decision-making and the application of computer vision in manufacturing processes all face compliance and security and financial risk challenges because of hallucinated outputs.

Key Topics Covered in This Blog

- What AI hallucinations are in large language models and generative AI systems

- Why hallucinations occur (LLM architecture, data drift, prompt ambiguity)

- How to detect hallucinations using RAG systems, semantic similarity, and confidence scores

- Monitoring in production with observability and drift detection

- Mitigation strategies like prompt engineering and domain-specific fine-tuning

The in-depth guide from Buildnextech demonstrates methods for detecting and preventing AI hallucinations in enterprise production systems while maintaining model accuracy and governance and system reliability.

Understanding AI Hallucinations in Production Systems

Key Aspects of AI Hallucinations in Production:

Definition & Characteristics: Hallucinations are outputs which sound believable but contain incorrect information or entirely invented content. The output problem occurs because ChatGPT and similar models operate by predicting which word to use next instead of verifying which information is accurate.

Common Causes:

- Data Limitations: Insufficient, outdated, or biased training data.

- Model Limitations: The model lacks true reasoning skills and relies on probabilistic pattern matching.

- Prompt Ambiguity: Vague or complex instructions lead the model to produce incorrect results.

Risks in Production:

- Business Liability: Organizations face legal responsibility for false information which includes fake legal cases and incorrect financial data.

- Safety & Trust: Critical systems return wrong information which includes medical test results that lead to dangerous results.

- Operational Failures: Automated systems experience malfunctions which result in substandard service that decreases user confidence.

Understanding hallucinations begins with understanding large language models, LLM architecture, and how Generative AI predicts text probabilistically rather than verifying truth.

Definition and Real-World Manifestations in Deployed Models

What exactly are AI hallucinations? In simple terms, they are confident but incorrect outputs generated by AI models. Many ask, is ChatGPT an LLM? Yes—like Claude ai, gemini ai, perplexity ai, and jasper ai, it is part of the broader family of LLM examples built on transformer-based LLM architecture.

- chatgpt hallucination examples include fake legal citations

- llm hallucination examples include fabricated research papers

- AI Package Hallucination involving hijacked package dependencies

- Hallucinated API endpoints in containerized apps

- False facts in call center automation

The chatgpt hallucination rate varies by task complexity and prompt design. AI hallucination rate metrics differ across model versions, data volume, and sampling techniques. Evaluating hallucinations requires model evaluation frameworks, BERT score, BLEU score, ROUGE score, and factual accuracy scoring.

Classification of Output Distortion and Fabrication Errors

Hallucinations can be categorized into multiple types of ai hallucination. Understanding the types of ai helps in mitigation.

- Intrinsic hallucinations: errors contradicting source documents

- Extrinsic hallucinations: unsupported but plausible claims

- Context window overflow errors due to context window limitations

- Semantic entropy and perplexity analysis indicators

- Token similarity detector mismatches

.webp)

Researchers such as Nikita Kozodoi and Aiham Taleb have studied hallucination detection methods, while leaders like Sam Altman and Kathy Baxter emphasize responsible AI. Claire Cheng highlights enterprise governance frameworks.

Evaluating Hallucination Rates Across Modern AI Models

How do enterprises measure hallucinations? Through model evaluation, human evaluation protocols, and monitoring techniques in production.

- BERT stochastic checker analysis

- Semantic similarity detector comparison

- Retrieval accuracy in RAG systems

- Confidence scores calibration

- A/B testing across model versions

.webp)

Organizations compare LLM vs generative ai performance, especially in domain-specific applications. PaLM 2 Models and other transformer systems show varying AI hallucination rates depending on training data quality and prompt engineering techniques.

Metrics such as model accuracy, factual accuracy, Natural Language Inference validation, and semantic similarity measures enable structured evaluation. Monitoring in Production pipelines should include distributed tracing and monitoring triggers for anomaly detection.

Architectural and Operational Causes of Hallucinations

Hallucinations in AI systems occur when models produce confident, fluent, but factually incorrect or unsupported information. These errors stem from a combination of underlying architectural flaws in how the model is designed and operational, or "in-production," failures in how the system is deployed and managed.

- Transformer probabilistic outputs

- Context window overflow

- Domain misalignment

- Data drift in dynamic environments

- Infrastructure constraints in cloud deployments

Understanding these causes enables enterprises to implement effective validation steps.

.webp)

Transformer-Based Probabilistic Generation Mechanisms

Most modern LLM examples rely on transformer-based LLM architecture that predicts the next token using probability distributions. These sampling techniques, including temperature parameters and greedy algorithm decoding, influence output variability.

- High temperature increases creativity but reduces factual accuracy

- Few-shot learning affects context retention

- Chain-of-thought prompting and chain of thought prompting increase reasoning transparency

- Sampling techniques impact semantic entropy

- Prompt engineering affects instruction clarity

Natural language processing systems predict statistically likely responses. They do not “know” facts. That’s why hallucinations persist across generative AI examples in chatbots, AI assistant tools, and knowledge assistants.

Data Drift, Context Limitations, and Domain Misalignment

Production systems evolve constantly. Data set distributions change, new product variants emerge, and social media conversations shift trends.

- Data drift reduces alignment with training data

- Domain-specific data filters may be missing

- Context window limitations restrict long document processing

- Domain-specific applications require custom data sources

- Data curation impacts performance

Fine-tuning LLMs on domain-specific data reduces hallucination risk. However, improper data curation can introduce new biases. Organizations must ensure high-quality knowledge base integration and semantic similarity validation.

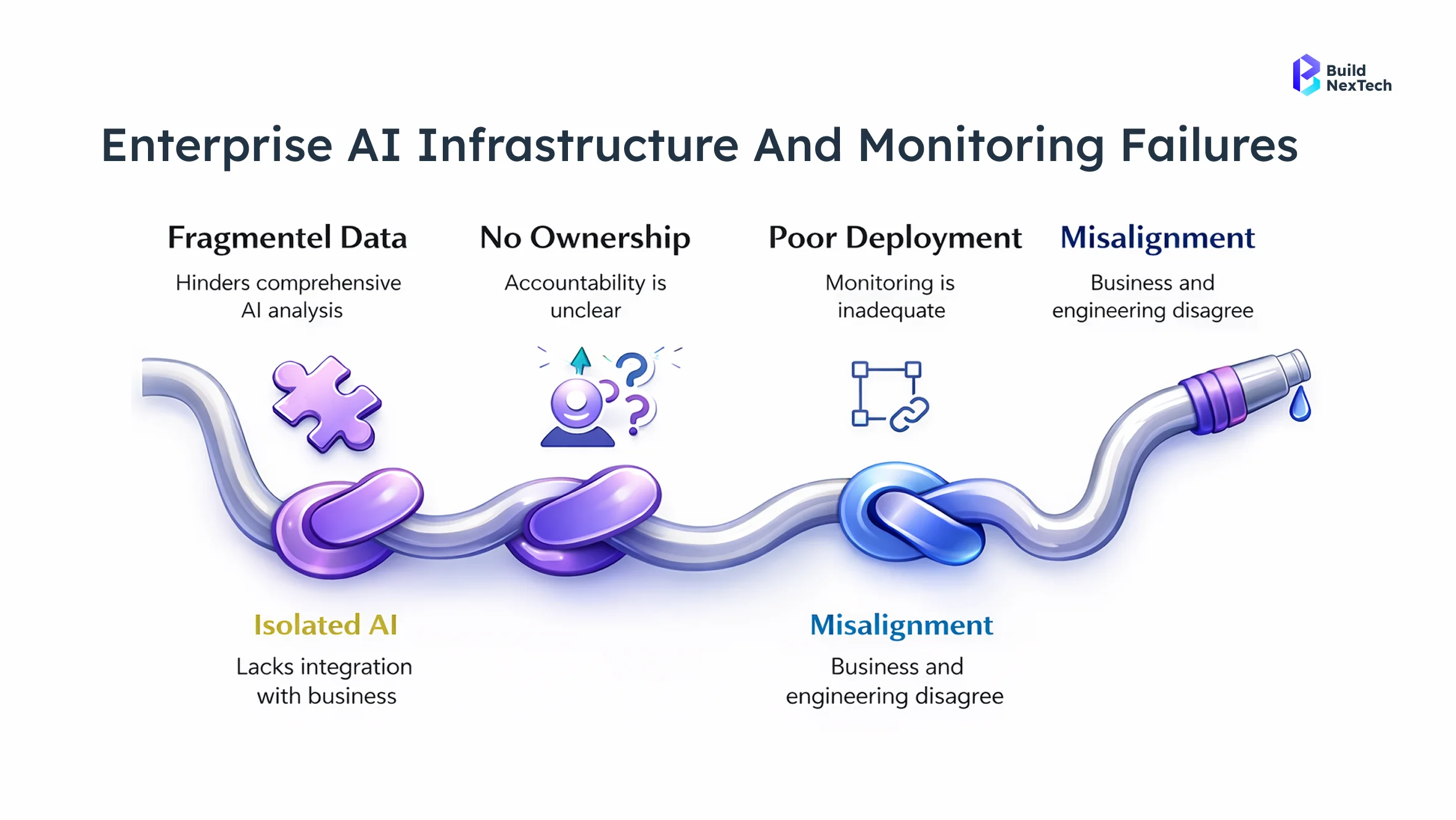

Production Infrastructure Constraints and Failure Triggers

Enterprise AI deployments often run on Google Cloud, Amazon Bedrock, Amazon SageMaker, and object storage services like Amazon Simple Storage Service.

- Latency constraints in containerized apps

- Relational database services integration failures

- Supply chain security vulnerabilities

- Prompt injection attack defenses misconfiguration

- Monitoring prompts misalignment

Security issues such as prompt injection attacks and hijacked package dependencies can lead to AI Package Hallucination. Monitoring triggers and observability platforms like Maxim's observability suite enable detection before large-scale damage.

Detection Frameworks for AI Reliability Monitoring

Detecting hallucinations requires layered monitoring techniques combining statistical, semantic, and retrieval-based approaches.

Key Framework Components & Approaches:

- Two-Layered Monitoring (Agentic AI): Agentic AI uses OOD detection with its dual monitoring system together with transparency features which include Chain-of-Thought to display its internal thinking process while allowing humans to step in.

- Data and Model Drift Detection: The system monitors changes in input data and model outputs to determine the appropriate time for model retraining.

- Continuous Evaluation Frameworks: The system uses three performance metrics which include mean absolute error (MAE) and Fiddler AI's cross-entropy loss and latency tracking to measure its performance.

Popular Tools and Platforms

- Arize AI: The platform provides Machine Learning observability tools which enable users to monitor model performance while detecting data changes and assessing data integrity.

- LangSmith & Langfuse: The platform provides users with tools that enable them to monitor and trace Large Language Models.

- Maxim AI: The platform delivers complete assessment capabilities together with monitoring functions which support operational use.

Buildnextech recommends multi-layer detection pipelines for trustworthy systems.

Confidence Calibration and Uncertainty Quantification Techniques

Confidence scores alone do not guarantee factual accuracy, but calibrated uncertainty helps identify risky outputs.

- Perplexity analysis thresholds

- Semantic entropy measurement

- BERT score comparisons

- Natural Language Inference validation

- Factual accuracy scoring models

Low-confidence responses can trigger human intervention or fallback workflows. In high-risk sectors like medical diagnoses and financial decisions, such guardrails are essential.

Retrieval-Augmented Verification and Knowledge Grounding

Retrieval-Augmented Generation (RAG systems) significantly reduce hallucinations by grounding outputs in source documents.

- Vector databases store embeddings

- Cosine similarity retrieves relevant knowledge base entries

- Retrieval accuracy metrics evaluate performance

- Domain-specific data filters refine context

- LangChain Grounding frameworks ensure traceability

RAG systems retrieve from custom data sources before generation. This approach improves factual claims reliability and reduces LLM hallucinations.

H3-Multimodal Consistency Checks Using Vision-Language Systems

Hallucinations extend beyond text. In computer vision systems, inconsistencies can occur across multimodal pipelines.

- Cross-verifying image captions

- Comparing best ai image generator outputs

- AI image detector validation

- What is computer vision in ai explanation grounding

- Computer vision examples in manufacturing

Applications of computer vision include object detection, quality inspection, and medical imaging. What is computer vision? It enables machines to interpret visual data.

Combining vision-language systems improves semantic similarity measures across modalities, enhancing reliability in generative ai systems deployed in healthcare and retail.

Mitigation Strategies for Production-Grade AI Deployment

Here are the critical mitigation strategies categorized by functional area:

1. Model Performance and Reliability

- Containerization & Orchestration: Use Docker and Kubernetes (e.g., AKS) to package dependencies which enable horizontal scaling and provide consistent performance across different environments.

- Latency Management: The system uses model caching for handled queries and streaming responses which improve user experience and model quantization with distillation to decrease inference time.

2. Generative AI Specific Mitigations (LLMs)

- Input/Output Sanitization & Guardrails: The system uses content filters to block dangerous inputs and outputs while "Prompt Shields" detect injection attacks.

- Data Masking & Privacy: The organization uses data de-identification and anonymization methods together with encryption technology which protects PII (Personally Identifiable Information) during both storage and transmission.

3. Data Privacy and Security

- Continuous Monitoring: The system continuously monitors its essential performance indicators which include latency and throughput and model drift and data drift.

- Automated Retraining: The system establishes pipelines which automatically initiate model retraining when system performance decreases or data distribution undergoes substantial changes.

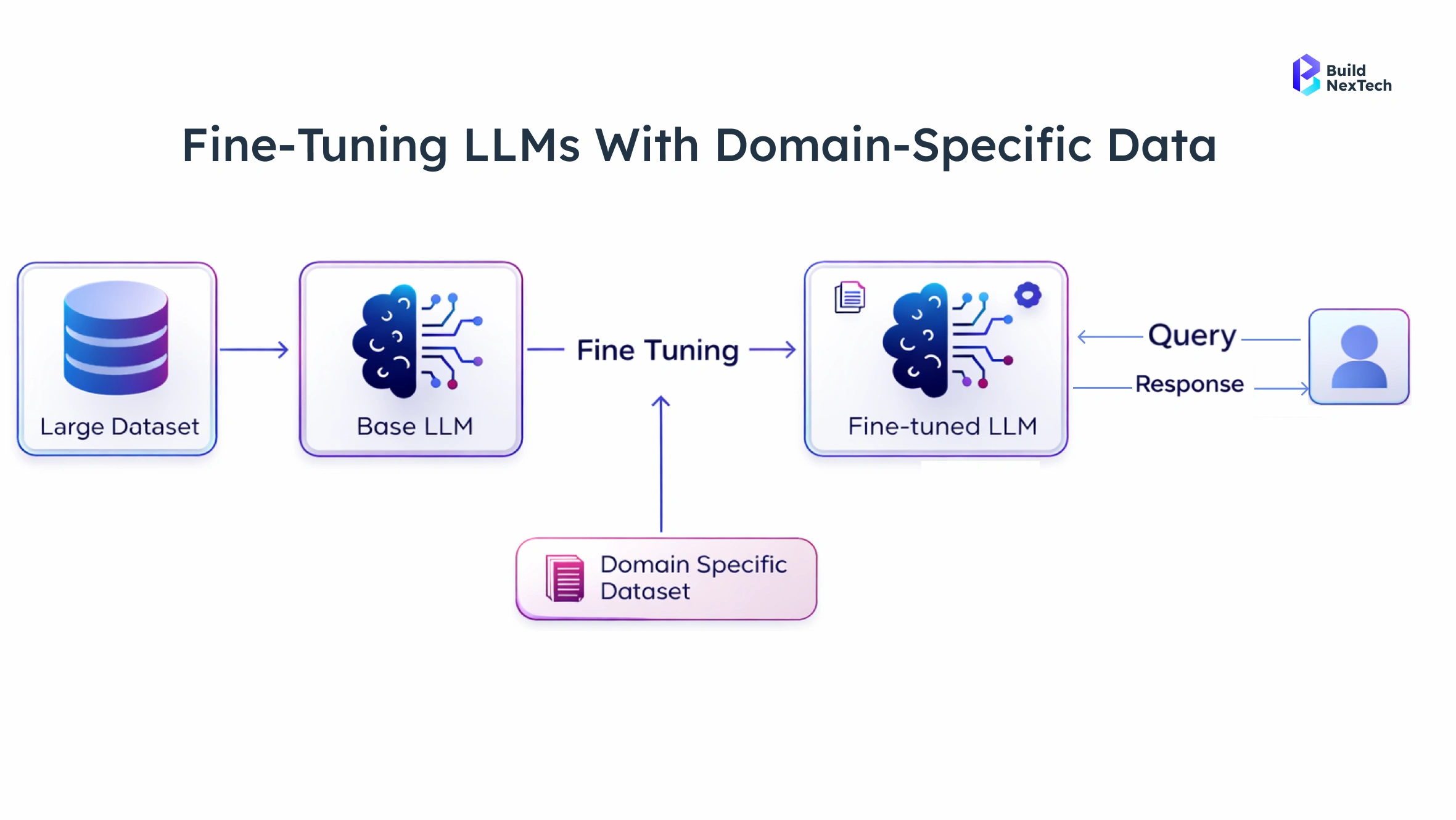

Domain-Specific Fine-Tuning and Data Optimization

Fine-tuning LLMs improves alignment with domain-specific applications such as customer support and call center automation.

- Few-shot learning with curated examples

- Domain-specific data filters

- Data curation best practices

- Custom data sources integration

- Prompt engineering refinement

High-quality training data reduces hallucinations significantly. Domain adaptation ensures accurate product descriptions, correct financial trading insights, and reliable medical diagnoses suggestions.

Real-Time Observability and Drift Detection Pipelines

AI Observability is critical in production systems because generative AI systems evolve continuously under real-world pressure. As user behavior changes, data volume grows, and domain-specific applications expand, even high-performing large language models can experience performance degradation.

- Monitoring in Production dashboards

- Distributed tracing for API calls

- Monitoring triggers for anomaly detection

- User feedback loops

- Model versions tracking

A/B testing helps compare different model versions. Human evaluation protocols supplement automated scoring.

Human-in-the-Loop Oversight and Escalation Protocols

Human oversight remains indispensable, especially in high-risk environments where AI-generated outputs influence medical diagnoses, financial decisions, or compliance reporting.

- Human intervention for low-confidence outputs

- Human evaluation protocols review

- Escalation workflows for compliance risks

- Monitoring prompts adjustments

- Continuous model evaluation

In regulated industries with strict regulatory environments, such as healthcare and finance, oversight prevents regulatory violations.

Explainable AI techniques enhance transparency, especially when dealing with complex natural language processing tasks.

Governance, Risk Management, and Future Resilience

The Effective governance frameworks are essential for sustainable AI systems. They establish accountability, enforce transparency, and define structured risk management processes. These controls must be applied consistently across all stages of AI development and production. As generative AI adoption expands, regulatory expectations continue to increase across industries and regions.

Key Governance Priorities:

- Strengthen compliance with evolving global regulations across healthcare, fintech, and other regulated sectors.

- Document validation processes, monitoring procedures, and human oversight mechanisms.

- Enforce ethical standards and legal obligations throughout the AI lifecycle.

- Integrate governance checkpoints into deployment and production workflows.

In modern production environments, governance must be treated as a core operational function. Organizations that embed governance into their AI strategy build trustworthy systems while reducing financial, compliance, and reputational risks.

Compliance, Auditability, and Responsible AI Standards

Enterprises must align AI deployments with global regulatory environments such as GDPR in Europe, HIPAA in healthcare, and financial compliance frameworks in banking sectors. Responsible AI standards demand traceability of every decision made by large language models and other AI models.

- Audit trails for knowledge base access

- Documentation of validation steps

- Responsible AI reporting

- Monitoring techniques documentation

- Prompt injection attack defenses validation

Organizations referencing best practices from industry leaders like Sam Altman and Kathy Baxter focus on trustworthy systems.

H3-Ethical Implications of Synthetic Output Failures

Hallucinations raise serious ethical concerns, especially when AI tools influence high-impact decisions. While AI assistants and knowledge assistants can enhance efficiency, synthetic output failures can mislead users and create systemic harm.

- Misleading medical diagnoses

- Biased social media conversations analysis

- Incorrect financial decisions guidance

- Misinformation in customer support

- False claims in marketing

Ethical AI requires robust human oversight and domain-specific safeguards. Generative AI systems must prioritize factual accuracy over fluency.

Advancements Toward Hallucination-Resistant Architectures

The future of AI lies in hybrid architectures that combine probabilistic generation with deterministic verification. Instead of relying solely on LLM architecture, next-generation systems integrate layered grounding and retrieval validation.

- Retrieval Augmented Generation integration

- LangChain Grounding pipelines

- Knowledge assistants with source attribution

- Vector databases for scalable retrieval

- Hybrid LLM vs generative ai ensembles

Emerging architectures improve retrieval accuracy and semantic similarity scoring. Platforms like Vertex AI on Google Cloud and Amazon Bedrock provide enterprise-grade safeguards.

As generative AI evolves, AI tools will integrate stronger monitoring techniques and factual accuracy safeguards.

Conclusion : How BNXT.ai Enhances Reliability in AI Production Systems

AI hallucinations exist as permanent structural problems which affect large language models and generative AI systems. The absence of active monitoring will lead to financial losses and compliance violations and damage the company's reputation.

Key Learnings from This Blog

- AI hallucinations stem from probabilistic LLM architecture, data drift, and prompt ambiguity.

- Hallucination detection requires layered approaches—semantic similarity scoring, confidence calibration, perplexity analysis, and RAG systems.

- Monitoring in Production with distributed tracing and drift detection is essential for long-term stability.

- Domain-specific fine-tuning and structured prompt engineering significantly reduce hallucination risk.

At BuildnexTech, our BNXT.ai platform integrates RAG systems, semantic similarity detectors, confidence scores calibration, distributed tracing, and monitoring in production pipelines to deliver trustworthy systems.

Whether you deploy AI assistants, computer vision applications, or enterprise-grade knowledge assistants, BNXT.ai ensures factual accuracy, regulatory compliance, and scalable reliability across global production systems.

People Also Ask

1) Why do large language models hallucinate in real-world deployments?

Large language models rely on probabilistic token prediction within LLM architecture. Without grounding in a knowledge base or source documents, they generate statistically plausible but incorrect outputs.

2) How can AI hallucinations impact enterprise production environments?

Hallucinations can cause regulatory violations, incorrect financial decisions, flawed medical diagnoses, and reputational harm.

3) What are the common signs of hallucinated outputs in AI systems?

Unsupported factual claims ,Low semantic similarity with source documents, High semantic entropy ,Low retrieval accuracy in RAG systems, Inconsistent confidence scores.

4) How can organizations detect hallucinations in deployed AI models?

Organizations use BERT score, BLEU score, ROUGE score, Natural Language Inference validation, perplexity analysis, and AI Observability dashboards.

.png)

.webp)

.webp)

.webp)

.webp)