Computer vision has moved from research novelty to production infrastructure in under a decade. Image recognition, object detection, autonomous systems, and generative AI models are now standard requirements across industries, and the framework powering those models determines how fast teams can build, iterate, and ship.

PyTorch and TensorFlow are the two frameworks doing most of that work in 2025. Both support GPU acceleration, deep neural networks, and the full computer vision pipeline, but they make different trade-offs: PyTorch optimises for research speed and flexibility, TensorFlow for production scalability and deployment.

This article gives an overview of both frameworks, with installation commands, practical tips, performance comparisons, and typical machine learning pipelines. It also covers common computer vision applications and how to deploy them in production.

What this article covers:

- What computer vision is and how modern deep learning pipelines are structured

- How PyTorch and TensorFlow differ in computation graphs, training speed, and deployment tooling

- When to choose PyTorch and when TensorFlow is the stronger call

- Installation commands for CPU, GPU (CUDA), and mobile (TFLite) environments

- A side-by-side comparison table covering all major decision factors

Understanding Computer Vision

What is Computer Vision?

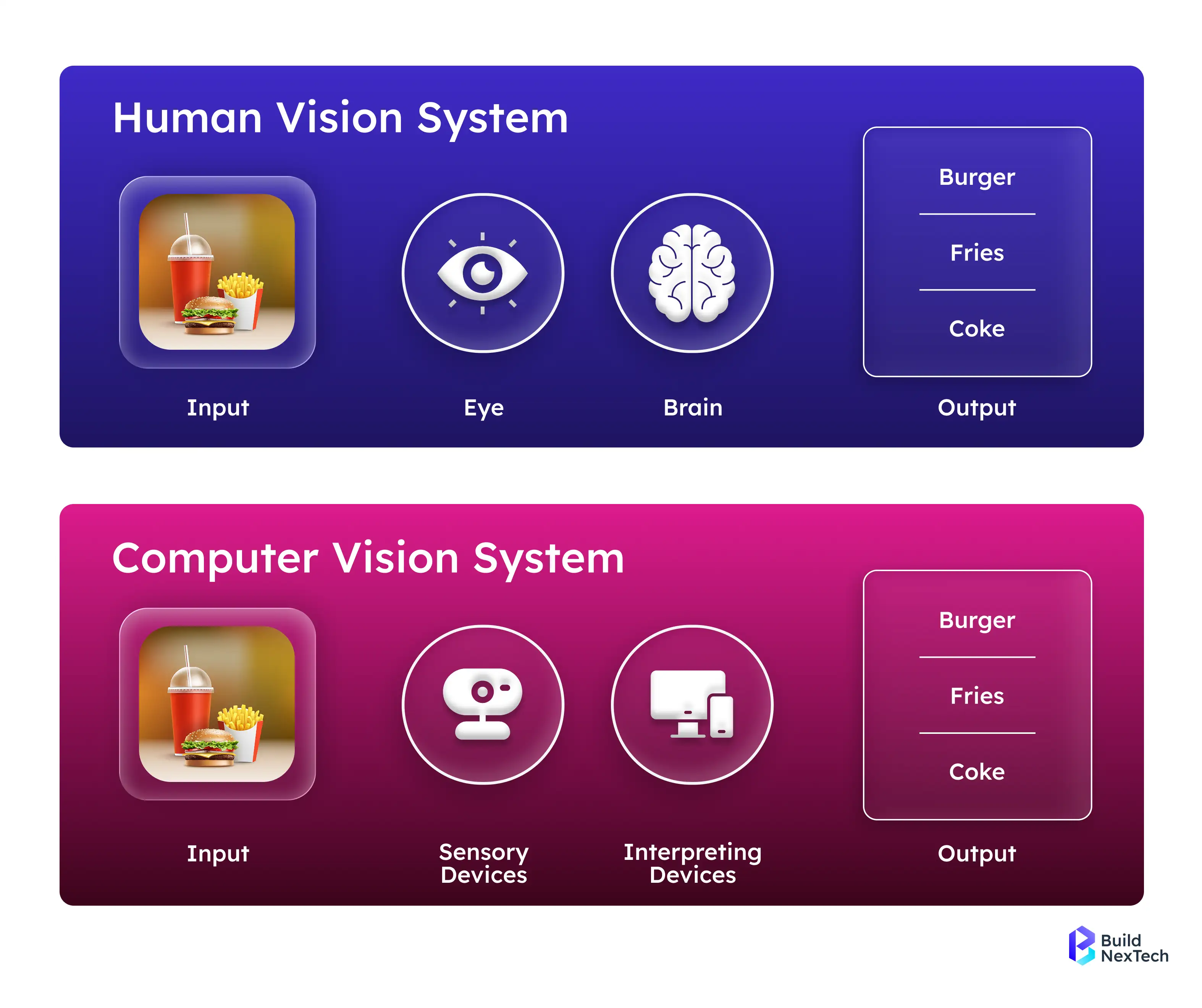

Computer vision is a field of artificial intelligence that allows machines to perceive and interpret visual information from the world. It helps machines recognise and classify objects and extract important information from images and videos, in a way that mimics human sight. Early computer vision relied mostly on rule-based algorithms and hand-crafted feature extraction. Today, deep learning models, neural networks, and GPU acceleration let machines match or exceed human accuracy on some benchmarks in image classification, object tracking, and video analysis.

What is the Process of Computer Vision?

Current practice in computer vision relies heavily on deep learning, specifically convolutional neural networks (CNNs) and other deep neural networks. The general workflow involves:

- Dataset: ImageNet, CIFAR, and Imagenette are popular datasets for training models. Libraries such as OpenCV and the Keras preprocessing layers help pre-process images before they reach the neural network.

- Neural network architecture: Design a custom network, or build on pretrained models such as ResNet50, InceptionV3, YOLOv8, Faster R-CNN, or Mask R-CNN for a specific goal.

- Training and optimisation: Common methods include optimisers such as Adam, data augmentation to enlarge the effective training set, batch normalisation to stabilise training, and distributed training across GPUs or TPUs to improve hardware utilisation.

- Deployment: Integrate models into cloud platforms such as Google Cloud, and serve them in production with Docker, FastAPI, TorchServe, or TensorFlow Serving.
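The training step above names Adam as a typical optimiser. As a rough, framework-free illustration of what a single Adam update does, the sketch below minimises a toy one-parameter loss in plain Python. The function name `adam_minimise`, the toy loss, and the hyperparameter values are assumptions chosen for illustration; in a real project you would most likely use the built-in optimisers, `torch.optim.Adam` in PyTorch or `tf.keras.optimizers.Adam` in TensorFlow.

```python
import math


def adam_minimise(grad_fn, x0, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=500):
    """Minimise a one-parameter function with the Adam update rule (toy sketch)."""
    x = x0
    m = 0.0  # running average of gradients (first moment)
    v = 0.0  # running average of squared gradients (second moment)
    for t in range(1, steps + 1):
        g = grad_fn(x)
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g * g
        # Bias correction compensates for m and v starting at zero.
        m_hat = m / (1 - beta1 ** t)
        v_hat = v / (1 - beta2 ** t)
        x -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return x


# Hypothetical toy loss: (x - 3)^2, whose gradient is 2 * (x - 3).
best_x = adam_minimise(lambda x: 2 * (x - 3), x0=0.0)
print(f"estimated minimum at x = {best_x:.4f}")
```

The per-parameter scaling by the running squared gradient is what lets Adam take similarly sized steps regardless of how large the raw gradients are, which is one reason it is a common default for vision models.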

PyTorch's eager execution and dynamic computational graphs make models easier to inspect and change while they run, which speeds up experimentation. TensorFlow's static computational graphs, by contrast, let the framework optimise a model before it executes.

Most Popular Computer Vision Applications You Should Know

- Healthcare: AI-enabled diagnostics that use object detection models to help identify anomalies in X-rays or MRIs.

- Automotive: Autonomous driving applications rely on image recognition and object detection.

- Retail: Inventory tracking, consumer behaviour analysis, and automated checkouts.

- Security: Facial recognition models and surveillance systems analyse data in real time to support situational awareness and monitoring.

According to Fortune Business Insights, the computer vision market is projected to grow into a $17 billion industry, and AI-optimised solutions are expected to become more commonplace in operational work.

An Introduction to PyTorch

What is PyTorch? How It Powers Computer Vision and NLP

PyTorch is an open-source deep learning framework developed by Meta AI, formerly known as Facebook AI Research Lab (FAIR). It is one of the most widely used frameworks in research, popular for its dynamic computation graphs, eager execution, and native Python compatibility. Its key features include:

- Dynamic Computational Graphs: The graph is built as operations run, so a model's structure can change during training.

- AutoGrad: Automatic computation of gradients for deep learning models.

- GPU Support (CUDA): Fast computation for deep neural networks.

- PyTorch Lightning: A higher-level interface, distributed as a separate library, for building and training models with scalable distributed computing.
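To make the dynamic graph and AutoGrad features above more concrete, here is a minimal pure-Python sketch of define-by-run automatic differentiation. It is a toy written for this article, not PyTorch's implementation: the `Value` class and the `model` function are hypothetical names, and only addition and multiplication are supported. In PyTorch itself the same idea is expressed with tensors created with `requires_grad=True` and a call to `.backward()`.

```python
class Value:
    """A scalar that records the operations applied to it (toy autograd)."""

    def __init__(self, data, parents=(), grad_fns=()):
        self.data = float(data)
        self.grad = 0.0
        self._parents = parents    # the Values this one was computed from
        self._grad_fns = grad_fns  # one local-derivative function per parent

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data + other.data, (self, other),
                     (lambda g: g, lambda g: g))

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data * other.data, (self, other),
                     (lambda g: g * other.data, lambda g: g * self.data))

    def backward(self):
        # Order the recorded graph so each node is handled after its consumers.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            for parent, grad_fn in zip(node._parents, node._grad_fns):
                parent.grad += grad_fn(node.grad)  # chain rule


def model(x, w, depth):
    out = x
    for _ in range(depth):  # ordinary Python control flow shapes the graph
        out = out * w + 1.0
    return out


x, w = Value(2.0), Value(3.0)
y = model(x, w, depth=2)  # y = (x*w + 1)*w + 1
y.backward()
print(y.data, x.grad, w.grad)
```

Because the graph is recorded while the Python code runs, changing `depth` changes the graph on the next call with no separate compilation step, which is the flexibility the dynamic-graph bullet refers to.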

There are also several high-level packages available: TorchVision, TorchText, TorchElastic, and TorchServe for computer vision, natural language processing, distributed training, and deploying to production respectively.

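Installing PyTorch for development can be done using pip or conda, either CPU-only or GPU-accelerated (CUDA).

- CPU-only install (on Linux the default wheel bundles CUDA; append --index-url https://download.pytorch.org/whl/cpu for a CPU-only build)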

- pip install torch torchvision torchaudio

- GPU (CUDA) install; replace cu118 with the tag that matches your CUDA version

- pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118

- PyTorch Lightning provides an easier way to build and train models.

- pip install pytorch-lightning

PyTorch has powered many state-of-the-art computer vision and AI projects — from YOLOv7 for real-time object detection to Generative Adversarial Networks (GANs) used in Generative AI applications. Major companies like Tesla, Meta (Facebook AI Research), and OpenAI have leveraged PyTorch to build deep learning models for autonomous systems, image synthesis, and large-scale AI experiments. This makes PyTorch a preferred choice for organizations and Python development agencies seeking to build advanced computer vision systems.

With strong community support and thousands of active PyTorch GitHub repositories, developers have access to a rich ecosystem of pretrained models and reusable scripts. For instance, engineers can quickly implement architectures such as ResNet or MobileNet on datasets like MNIST, CIFAR-10, or ImageNet to create image recognition pipelines. Thanks to seamless integrations with Hugging Face, ONNX, and TorchScript, these models can be easily deployed across AWS, Azure, or Google Cloud, ensuring smooth transitions from research to production.

At Bnxt.ai, our AI specialists harness the power of PyTorch to design scalable computer vision solutions — from intelligent image classification systems to Generative AI models that drive real business impact.

An Overview of TensorFlow

What is TensorFlow? How It Powers Machine Learning and Computer Vision

TensorFlow is an open-source deep learning framework created by the Google Brain team. It is well regarded for machine learning in general, computer vision, natural language processing, and simple reinforcement learning policy models. It supports both static computational graphs and eager execution, giving a flexible interface for researchers and production applications alike.

Some of the key features of TensorFlow are:

- TensorBoard: Visualises model metrics, computational graphs, and performance tracking.

- TensorFlow Lite (TFLite): TensorFlow models optimised for mobile and embedded devices.

- TensorFlow Serving: Efficient deployment of models in production situations.

- GPU and TPU Acceleration: Increases the speed of deep learning model training.

- Keras: A high-level TensorFlow API designed to build neural networks rapidly.

- TensorFlow Hub & Estimators: Pre-trained models and straightforward model building, which can significantly speed up model creation. Note that Estimators are a legacy API in TensorFlow 2.x.

Installing TensorFlow:

- Standard installation

- pip install tensorflow

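- GPU support (the separate tensorflow-gpu package is deprecated; recent releases ship GPU support in the main package, with the CUDA libraries available through an extra on Linux)

- pip install "tensorflow[and-cuda]"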

- For mobile deployment using TensorFlow Lite:

- pip install tflite-runtime
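Once the install commands in this article have been run, it can help to confirm what actually landed in the environment. The script below is a small sketch of such a check: it reports the installed version of each package and, for whichever frameworks are present, asks whether a GPU is visible. The function names `installed_versions` and `gpu_visible` are hypothetical; the framework calls used, `torch.cuda.is_available()` and `tf.config.list_physical_devices("GPU")`, are the standard ones, and the script skips any framework that is not installed.

```python
import importlib.util
from importlib import metadata

# Maps the pip distribution name to the module name it installs.
PACKAGES = {
    "torch": "torch",
    "tensorflow": "tensorflow",
    "tflite-runtime": "tflite_runtime",
}


def installed_versions():
    """Return {distribution name: version string, or None if not installed}."""
    report = {}
    for dist_name, module_name in PACKAGES.items():
        if importlib.util.find_spec(module_name) is None:
            report[dist_name] = None
            continue
        try:
            report[dist_name] = metadata.version(dist_name)
        except metadata.PackageNotFoundError:
            # Importable, but installed under a different distribution name.
            report[dist_name] = "unknown"
    return report


def gpu_visible():
    """Ask each installed framework whether it can see a GPU."""
    result = {}
    if importlib.util.find_spec("torch") is not None:
        import torch
        result["torch"] = torch.cuda.is_available()
    if importlib.util.find_spec("tensorflow") is not None:
        import tensorflow as tf
        result["tensorflow"] = len(tf.config.list_physical_devices("GPU")) > 0
    return result


if __name__ == "__main__":
    for name, version in installed_versions().items():
        print(f"{name}: {version or 'not installed'}")
    print("GPU visible:", gpu_visible())
```

A result of `False` for a framework that is installed usually points to a CPU-only build or a driver and CUDA version mismatch rather than a problem with the framework itself.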

TensorFlow in Practice

TensorFlow has powered many computer vision systems, including Mask R-CNN implementations and the MobileNet family for image recognition; YOLOv8, although built on PyTorch, can be exported to TensorFlow formats. Researchers at Google Brain and community collaborators frequently publish TensorFlow-based advances on arXiv and at CVPR and NeurIPS.

With TensorFlow.js, models can also run in JavaScript environments, and Google Cloud integration enables large-scale distributed computing.

PyTorch vs TensorFlow: A Comparative Analysis

Analyzing Performance Metrics in PyTorch and TensorFlow

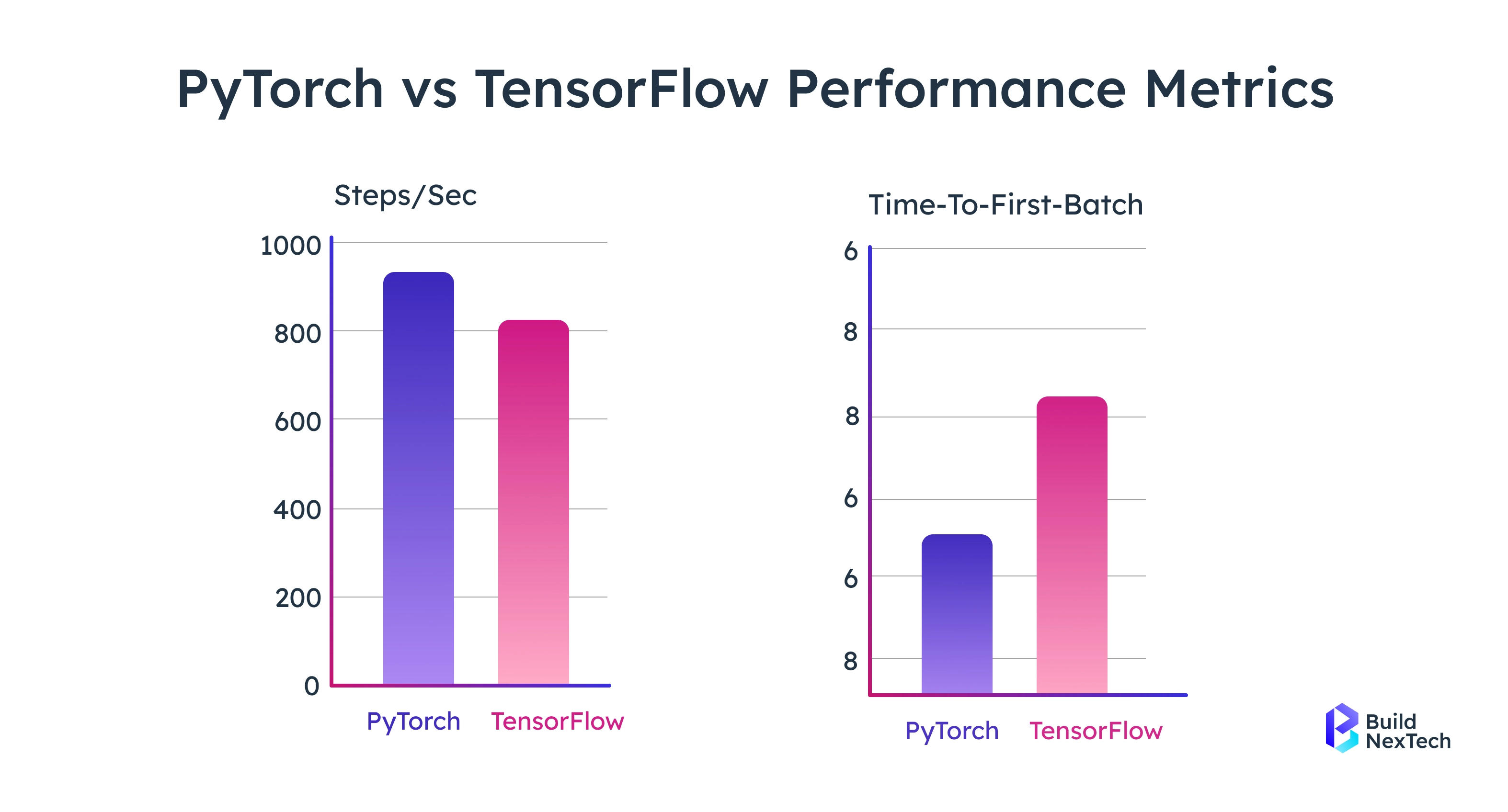

When comparing PyTorch and TensorFlow, performance can vary significantly depending on the hardware configuration, dataset size, and type of deep learning task. Both frameworks are optimized for large-scale AI workloads but excel in different areas across research, experimentation, and production deployment. The main differences are:

- Speed of Training: Both frameworks use GPU acceleration. When paired with CUDA, PyTorch 2.0 may show shorter iteration times than TensorFlow for research-oriented deep learning models.

- Computational graphs: TensorFlow can compile models into static computational graphs, which allows optimised deployment with tools like TensorRT. PyTorch uses dynamic computational graphs, which give more flexibility for complex models.

- Accuracy and Efficiency: Benchmarking studies on datasets like ImageNet, CIFAR-10, and Imagenette show minimal accuracy differences between PyTorch and TensorFlow. PyTorch excels in rapid prototyping and research, while TensorFlow leads in scalability and production-grade deployment.

- Real-world Example: Meta AI uses PyTorch for models like Segment Anything and DINOv2, whereas Google relies on TensorFlow for TPU-powered systems such as Google Photos and YouTube recommendations.

Community Support in both frameworks:

- PyTorch: PyTorch is very approachable for beginners and is often considered the easier of the two to learn, mainly because of its Pythonic syntax, AutoGrad, and the tutorials available. Through PyTorch Hub, Hugging Face, and a strong presence at NeurIPS and CVPR, it has also built considerable community support.

- TensorFlow: TensorFlow tends to have a somewhat steeper learning curve, but it offers TensorBoard, Keras preprocessing layers, and TensorFlow Hub. It has broad industry adoption and enterprise support options from Google.

Ideal Use Cases

When deciding which framework to use, the right choice depends largely on your project goals, deployment needs, and team expertise.

PyTorch is ideal for:

- Research projects requiring fast experimentation.

- More demanding generative AI work, such as autoencoders or GANs.

- Reinforcement learning and dynamic, flexible models.

TensorFlow is preferable for:

- Production, especially models that require scalability.

- Mobile/embedded environments through TensorFlow Lite (TFLite).

- Integration with Google Compute Engine (GCE) and TPUs to run large-scale distributed training.

Expert Tip: Companies like bnxt.ai help teams choose, integrate, and optimize these frameworks, bridging the gap between research-grade models and enterprise deployment.

Conclusion

PyTorch and TensorFlow have both matured to the point where either can handle most computer vision workloads; the decision comes down to where in the development lifecycle you're operating. PyTorch's dynamic computation graphs and Pythonic syntax make it the faster choice for research, rapid prototyping, and generative AI experiments. TensorFlow's static graph optimization, TensorFlow Lite, and TensorFlow Serving make it the more reliable path when the model needs to run at scale in production or on mobile and edge devices. For most teams, the answer isn't choosing one and ignoring the other; it's knowing which framework leads at each stage and being able to move between them.

If your organization is building computer vision systems and needs a development partner with hands-on experience across both frameworks, the AI team at BuildNexTech works with PyTorch and TensorFlow to deliver production-ready deep learning solutions. We also offer end-to-end web development services to integrate computer vision models into your product interface, and cloud migration services to deploy those models on AWS, GCP, or Azure at scale.

Developers can also combine tools from both ecosystems (e.g., TorchVision, TorchServe, TensorFlow Serving, Keras, and TensorBoard) to build strong AI, deep learning, and Generative AI systems. Want to implement these frameworks for your business? The AI team at Bnxt.ai helps organizations accelerate innovation through advanced deep learning and computer vision development.

People Also Ask

Is PyTorch or TensorFlow better for computer vision in 2025?

It depends on your goal. PyTorch is the dominant choice in academic research and rapid prototyping: its dynamic computation graphs make it easier to experiment with novel architectures like GANs, transformers, and custom CNNs. TensorFlow is stronger for production deployments, especially when you need TensorFlow Lite for mobile inference or TensorFlow Serving for scalable API-based model serving. Many enterprise computer vision pipelines in 2025 use PyTorch for development and TensorFlow or ONNX-based tools for production export.

Can PyTorch models be deployed to production reliably?

Yes. PyTorch's production story has improved significantly since the introduction of TorchServe and PyTorch Lightning. TorchServe handles model serving with REST and gRPC APIs; Lightning simplifies multi-GPU and distributed training workflows. For cloud deployment, PyTorch models also export cleanly to ONNX, which runs on TensorRT, AWS SageMaker, and Azure ML. The gap between PyTorch and TensorFlow in production capability has narrowed considerably since PyTorch 2.0.

What is the difference between dynamic and static computation graphs?

PyTorch builds its computation graph dynamically: the graph is constructed at runtime as operations execute, which makes debugging intuitive and allows models to change shape based on input. TensorFlow originally used static graphs, which are defined before execution and allow for more aggressive optimization at compile time. TensorFlow now supports eager execution (dynamic mode) by default, but its deployment tooling, such as TensorFlow Serving and TensorRT integration, still benefits from the static graph approach for performance.
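The static side of that comparison can be sketched in a few lines of plain Python. The example below is a toy, not TensorFlow's implementation: the `Const`, `Placeholder`, and `Op` node types and the `fold_constants` pass are hypothetical names invented for illustration. It shows the define-then-run pattern, where the whole graph exists before any input arrives, so an optimisation pass can simplify it once and every later run benefits. In TensorFlow 2.x, graphs of this kind are typically obtained by wrapping a Python function with `tf.function`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Const:
    value: float


@dataclass(frozen=True)
class Placeholder:
    name: str  # filled in at run time


@dataclass(frozen=True)
class Op:
    kind: str  # "add" or "mul"
    left: object
    right: object


def apply_op(kind, a, b):
    return a + b if kind == "add" else a * b


def fold_constants(node):
    """Optimisation pass that runs once, before any input data is seen."""
    if not isinstance(node, Op):
        return node
    left, right = fold_constants(node.left), fold_constants(node.right)
    if isinstance(left, Const) and isinstance(right, Const):
        return Const(apply_op(node.kind, left.value, right.value))
    return Op(node.kind, left, right)


def run(node, feed):
    """Execute a graph with concrete values for its placeholders."""
    if isinstance(node, Const):
        return node.value
    if isinstance(node, Placeholder):
        return feed[node.name]
    return apply_op(node.kind, run(node.left, feed), run(node.right, feed))


def count_ops(node):
    if isinstance(node, Op):
        return 1 + count_ops(node.left) + count_ops(node.right)
    return 0


# Step 1: define the graph y = x * (2 * 3) + (1 + 4) without running it.
graph = Op("add",
           Op("mul", Placeholder("x"), Op("mul", Const(2.0), Const(3.0))),
           Op("add", Const(1.0), Const(4.0)))

# Step 2: optimise once. Step 3: run as often as needed.
optimised = fold_constants(graph)
print(count_ops(graph), "ops before,", count_ops(optimised), "ops after")
print(run(optimised, {"x": 2.0}))
```

The trade-off is visible even at this scale: the optimised graph does less work per run, but its structure is fixed once it has been built, whereas a dynamic graph can follow whatever the Python code does on each call.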

What is TensorFlow Lite and when should I use it?

TensorFlow Lite is a lightweight version of TensorFlow designed for inference on mobile devices, embedded systems, and edge hardware. It converts full TensorFlow models into a compressed format (.tflite) optimized for low-latency, low-power environments. Use TFLite when your computer vision model needs to run on Android, iOS, Raspberry Pi, or microcontrollers without a server connection. It supports quantization to further reduce model size, making it practical for real-time on-device image classification and object detection.
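The quantization mentioned above is easier to picture with numbers. The sketch below applies a generic affine int8 scheme (a scale plus a zero point) to a handful of made-up weights in plain Python. It is an illustration of the idea under simplifying assumptions, not the TFLite converter's actual algorithm, and the names `quantise_int8` and `dequantise` are hypothetical. With TensorFlow installed, the real conversion is normally done through `tf.lite.TFLiteConverter` with its optimisation options.

```python
def quantise_int8(values):
    """Map floats to int8 codes with an affine (scale + zero point) scheme."""
    lo, hi = min(values), max(values)
    lo, hi = min(lo, 0.0), max(hi, 0.0)  # make sure 0.0 is representable
    scale = (hi - lo) / 255 or 1.0       # fall back to 1.0 for all-zero input
    zero_point = round(-128 - lo / scale)
    codes = [max(-128, min(127, round(v / scale) + zero_point)) for v in values]
    return codes, scale, zero_point


def dequantise(codes, scale, zero_point):
    """Recover approximate float values from int8 codes."""
    return [(q - zero_point) * scale for q in codes]


weights = [-1.0, -0.5, 0.0, 0.25, 1.0]  # hypothetical layer weights
codes, scale, zero_point = quantise_int8(weights)
restored = dequantise(codes, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

float32_bytes = 4 * len(weights)  # 4 bytes per float32 weight
int8_bytes = 1 * len(codes)       # 1 byte per int8 code

print(f"int8 codes: {codes}")
print(f"max round-trip error: {max_error:.5f} (scale = {scale:.5f})")
print(f"storage: {float32_bytes} bytes as float32 vs {int8_bytes} bytes as int8")
```

Each weight shrinks from four bytes to one at the cost of a small rounding error bounded by the scale, which is the trade that makes on-device inference practical for many vision models.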

Which companies use PyTorch vs TensorFlow in production?

PyTorch is used by Meta (for Segment Anything and LLaMA), Tesla (for Autopilot's vision systems), and OpenAI (for GPT model training). TensorFlow is used by Google (Google Photos, YouTube recommendations, Google Translate), Airbnb (search ranking), and Twitter (content recommendation). The split broadly reflects the research vs. production divide: PyTorch dominates in ML research labs and AI startups, TensorFlow in large-scale Google Cloud-integrated enterprise systems.
