Modern streaming platforms power billions of viewing hours each month, and Netflix stands at the center of this revolution. Delivering instant, high-quality, buffer-free video requires a powerful combination of cloud-native infrastructure, distributed systems, adaptive bitrate streaming, and global CDNs. What looks like a simple “Play” button actually triggers one of the most advanced engineering pipelines in the world.

At BuildNexTech, we help organizations adopt similar architectures—cloud-first, scalable, and globally optimized—so CTOs and founders can build platforms that perform as reliably as top-tier streaming services. Whether you're building an OTT product, a media platform, or a high-performance backend, the lessons from Netflix’s architecture are invaluable.

Key Insights from This Article

- Distributed systems + CDNs enable global, low-latency playback.

- Adaptive bitrate and caching minimize buffering under varying network conditions.

- Microservices, observability, and automation support continuous innovation.

- BuildNexTech helps businesses modernize using the same cloud principles.

Below is a detailed breakdown of how Netflix’s architecture supports millions of concurrent streams and what leaders can learn from it.

What Happens When You Press Play on Netflix

Most people assume Netflix is a video file sitting on a server somewhere that gets sent to you when you ask for it. The reality is more complicated — and more interesting.

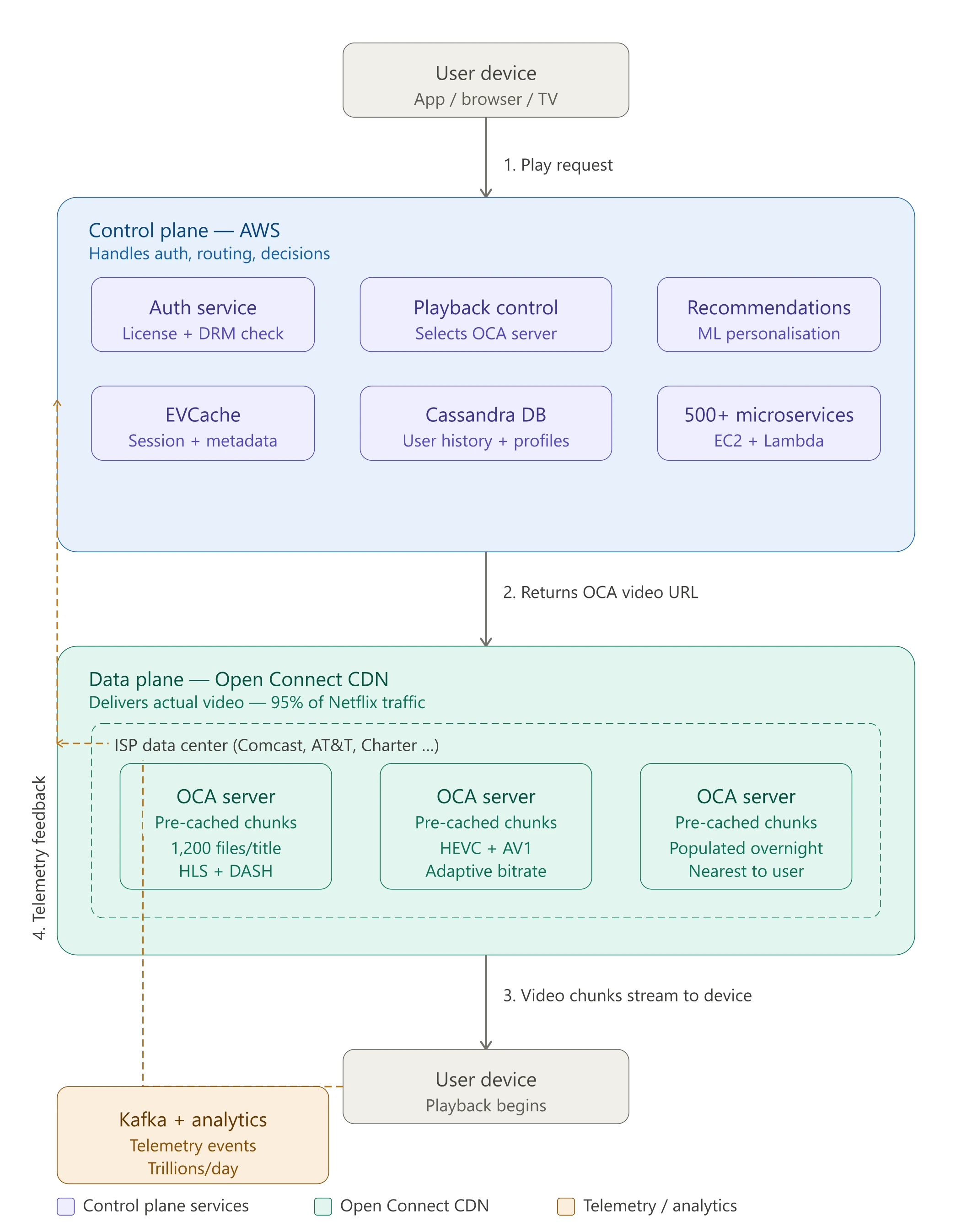

Netflix's architecture has two separate planes that meet only at the moment of handoff. The control plane runs on AWS and handles everything before you start watching: login, search, your recommendations, billing, and the handoff that selects which server your video comes from. The data plane is Open Connect, Netflix's own CDN, which actually delivers the video chunks to your screen.

When you hit play, here's the sequence:

- The Netflix app on your device sends a request to the AWS control plane

- The playback service checks your license, DRM rights, and device capabilities

- It queries the Open Connect steering service to find the best nearby cache server with your content

- You get back a list of chunk URLs pointing to that cache server

- Your device starts fetching video chunks via adaptive bitrate streaming (HLS or DASH)

- Telemetry data flows back continuously so Netflix can adjust quality in real time

That's the skeleton. Each step has significant engineering behind it.
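The sequence above can be sketched as a toy model. This is not Netflix's API: the function names (`authorize`, `steer`, `build_chunk_urls`, `press_play`), the cache hostnames, and the title ID are all hypothetical, and the steering rule (nearest cache that holds the title) is a simplifying assumption.

```python
from dataclasses import dataclass

@dataclass
class Cache:
    host: str           # hypothetical cache hostname
    titles: set         # titles already stored on this cache
    distance_ms: float  # assumed network distance to the viewer

def authorize(user: dict, title: str) -> bool:
    """Control-plane check: active account, DRM-capable device, title in catalog."""
    return user["active"] and user["drm_ok"] and title in user["catalog"]

def steer(caches: list, title: str) -> Cache:
    """Pick the nearest cache that already holds the title."""
    candidates = [c for c in caches if title in c.titles]
    if not candidates:
        raise LookupError(f"no cache holds {title}")
    return min(candidates, key=lambda c: c.distance_ms)

def build_chunk_urls(cache: Cache, title: str, chunks: int) -> list:
    """Return the chunk URLs the player will fetch from the chosen cache."""
    return [f"https://{cache.host}/{title}/chunk-{i}.mp4" for i in range(chunks)]

def press_play(user: dict, caches: list, title: str, chunks: int = 3) -> list:
    if not authorize(user, title):
        raise PermissionError("playback not allowed")
    return build_chunk_urls(steer(caches, title), title, chunks)

user = {"active": True, "drm_ok": True, "catalog": {"show-1"}}
caches = [
    Cache("far.example.net", {"show-1"}, 80.0),
    Cache("near.example.net", {"show-1"}, 5.0),
    Cache("nearest-but-empty.example.net", set(), 1.0),
]
urls = press_play(user, caches, "show-1")
print(urls[0])  # https://near.example.net/show-1/chunk-0.mp4
```

Note that the closest cache is skipped because it does not hold the title. Steering depends on content placement as well as distance.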

The Numbers That Make Performance Non-Negotiable

Netflix's internal research found that a 1-second improvement in video start time increases the probability a user continues watching by a measurable amount. A buffering event lasting more than 3 seconds causes a significant percentage of viewers to abandon the stream entirely. At 300 million subscribers, even small improvements in these metrics translate directly to retention and revenue.

The scale alone makes performance hard. By commonly cited industry estimates, Netflix accounts for around 15% of global downstream internet traffic during prime-time hours. A single popular show launch (Squid Game season 2, for example) can spike global traffic in ways that would take down most architectures. Netflix stays available through almost all of it, partly because of engineering and partly because they've practiced failure so often that recovery is largely automatic.

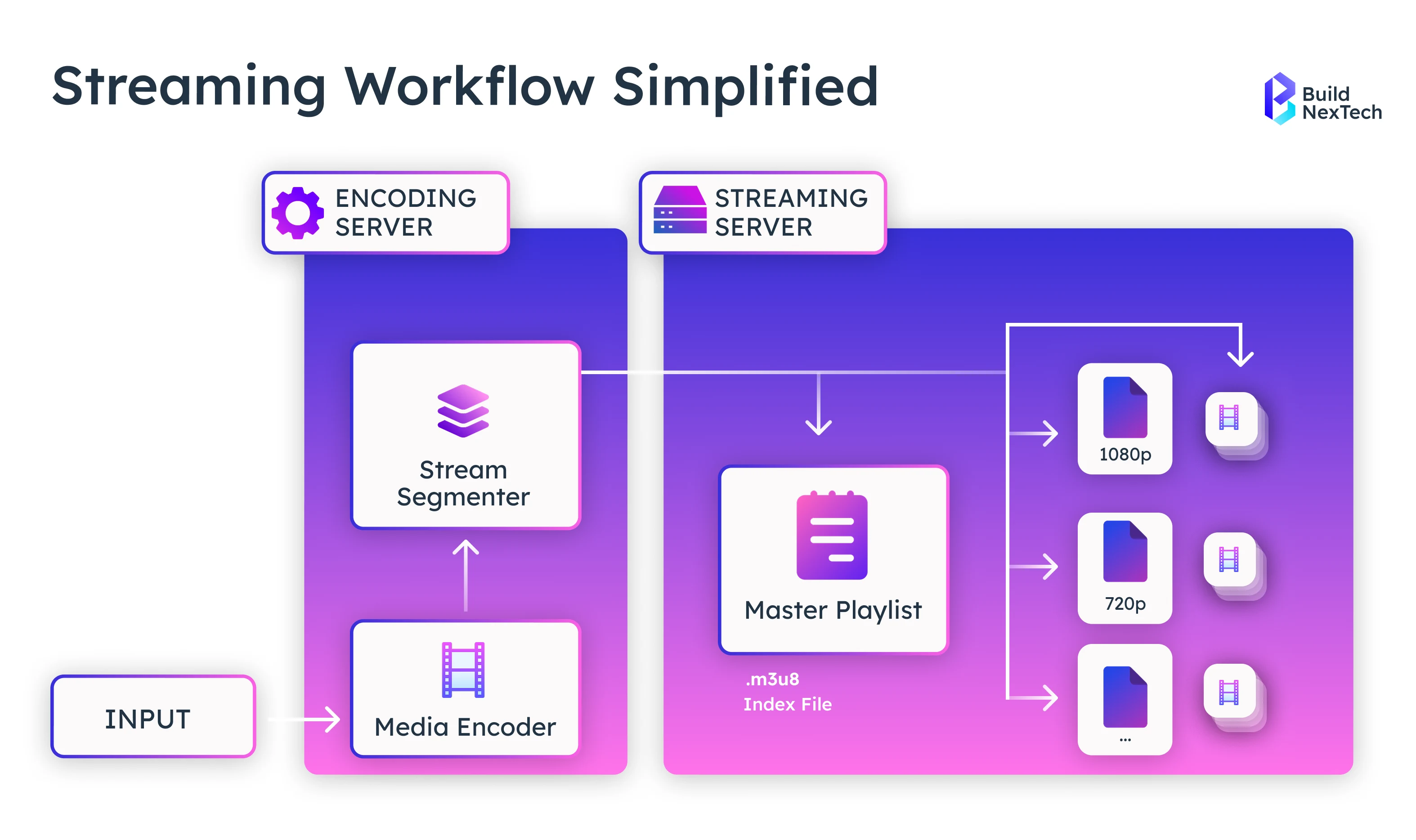

How Adaptive Bitrate Streaming Actually Works

Streaming is not one video file being sent to you. It's a sequence of small chunks — typically 2 to 10 seconds long — fetched one at a time from a CDN server near you. Your device buffers a few chunks ahead while you watch the current one.

The adaptive part is what makes Netflix work on a train with 2 bars of signal. Netflix encodes every title at multiple quality levels — roughly 1,200 files per movie when you count all the resolution, codec, and device variants. Your device constantly monitors your available bandwidth and switches between quality levels mid-stream, sometimes every few seconds.

The two main protocols for this are HLS (HTTP Live Streaming, used by Apple devices) and DASH (Dynamic Adaptive Streaming over HTTP, used on most others). Both break the video into chunks indexed by a manifest file. The player fetches the manifest, then starts requesting chunks at whatever quality level matches your current network conditions.
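A minimal sketch of the quality-switching decision follows. It assumes a purely throughput-based rule with a safety margin. The bitrate ladder values and the 0.8 margin are illustrative assumptions, and production players typically also weigh how much video is already buffered.

```python
# Hypothetical bitrate ladder in kilobits per second, lowest to highest.
LADDER_KBPS = [235, 750, 1750, 3000, 5800]

def pick_bitrate(throughput_kbps: float, safety: float = 0.8) -> int:
    """Choose the highest rendition that fits within a safety margin
    of the measured throughput; fall back to the lowest rung."""
    budget = throughput_kbps * safety
    fitting = [b for b in LADDER_KBPS if b <= budget]
    return max(fitting) if fitting else LADDER_KBPS[0]

def play(throughput_samples: list) -> list:
    """Pick a rendition for each chunk from the latest throughput sample."""
    return [pick_bitrate(t) for t in throughput_samples]

# Throughput drops mid-stream (a train entering a tunnel), then recovers.
choices = play([8000, 4000, 900, 200, 2500])
print(choices)  # [5800, 3000, 235, 235, 1750]
```

Each chunk is requested at the rendition chosen just before the fetch, so the stream degrades and recovers chunk by chunk instead of stalling.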

HEVC (H.265) and the newer AV1 codec compress better than the older H.264: roughly the same visual quality at around half the bitrate, depending on content and encoder settings. This matters because it reduces the bandwidth Netflix needs to serve 4K content, cutting their CDN costs and making high-quality streaming viable on slower connections.
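To see why codec efficiency matters for delivery cost, the arithmetic can be sketched as follows. The 16 Mbps starting bitrate and the 50% saving are assumptions chosen for illustration, not Netflix's published figures.

```python
def gb_per_hour(bitrate_mbps: float) -> float:
    """Convert a video bitrate in megabits per second to gigabytes per hour."""
    return bitrate_mbps * 3600 / 8 / 1000

h264_mbps = 16.0              # assumed H.264 bitrate for a 4K stream
newer_mbps = h264_mbps * 0.5  # assumed 50% bitrate saving at similar quality

print(gb_per_hour(h264_mbps))   # 7.2
print(gb_per_hour(newer_mbps))  # 3.6
```

Under these assumptions, every hour watched moves about 3.6 GB less data per viewer, which is the saving that compounds across a large audience.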

Global Trends Shaping Streaming Technology

Streaming keeps evolving as new formats, devices, and user expectations reshape the industry. Streaming platforms are adapting by investing in automation, AI-driven personalization, and highly scalable cloud-native architectures.

Global trends in streaming include:

- Explosion of live streaming and event-based pipelines

- Adoption of AI/ML for recommendations, personalization, and A/B testing

- Transition to serverless and microservices-based architectures

- Growing emphasis on edge computing for ultra-low latency

- Transition to next-gen codecs like AV1 and HEVC

- Utilization of data-driven pipelines to predict viewer behavior

- Growth of OTT platforms in developing markets with varying network conditions

Ultimately, these trends suggest that the future will belong to companies that build automation, resilience, and data intelligence into their operations, and BuildNexTech is here to enable and support digital innovation.

Netflix's Architecture: The Two-Plane Split That Makes It Work

Netflix's architecture is best understood as two completely separate systems that hand off to each other exactly once — when you press play.

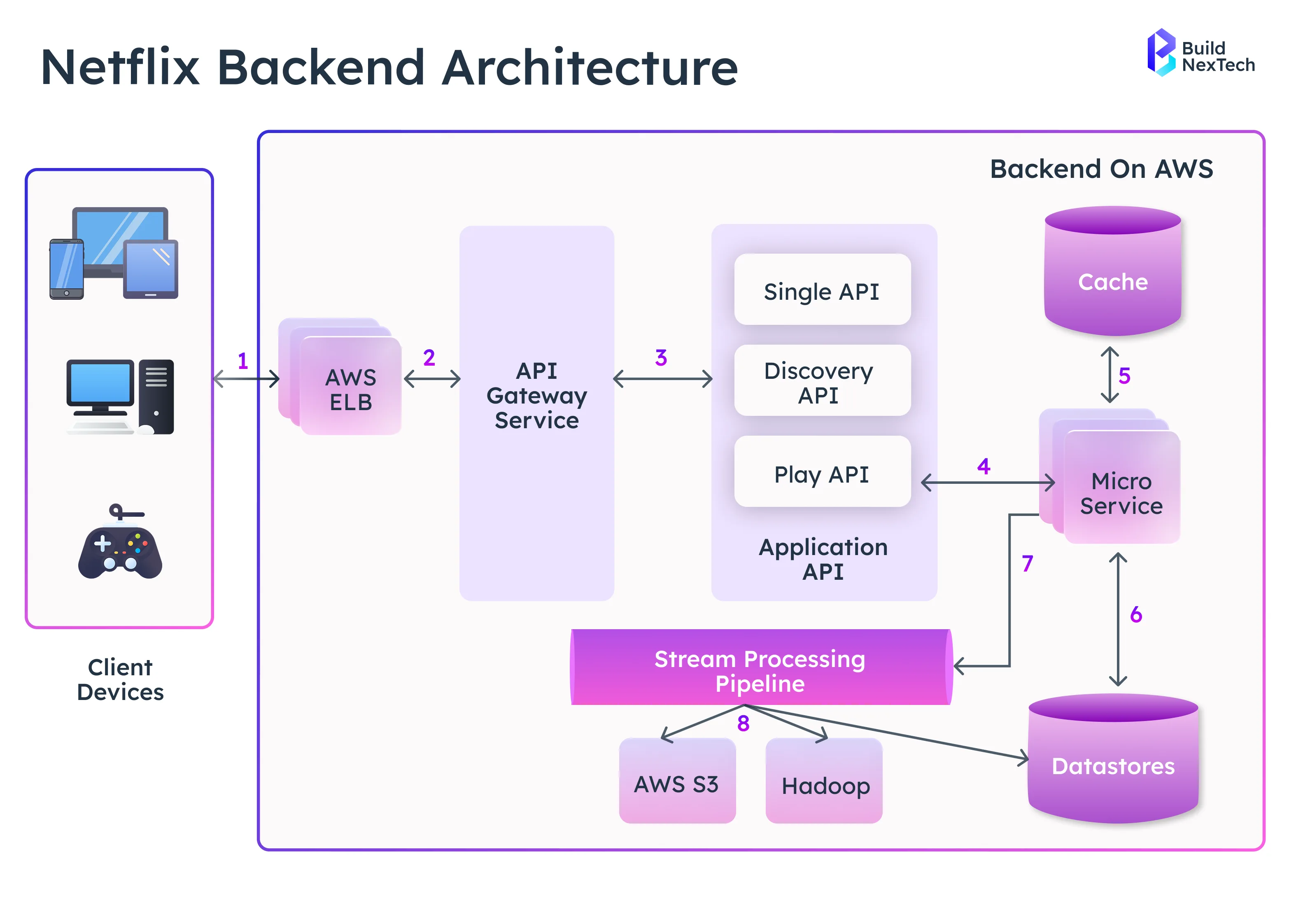

The Control Plane (AWS)

Everything that happens before you watch anything runs on AWS. This includes authentication, search, recommendations, user profiles, billing, and the playback control service that decides which Open Connect server should serve your video. Netflix runs 500+ microservices on AWS, mostly on EC2 instances with auto-scaling groups that expand during peak hours.

Databases behind the control plane include:

- Cassandra — distributed NoSQL database storing user watch history and preferences. Netflix contributes heavily to Cassandra's open source development.

- EVCache — Netflix's in-house caching layer built on top of memcached. It handles the fast lookups for session data, content metadata, and user state.

- MySQL/RDS — used for transactional data that needs strict consistency, like billing.

The Data Plane (Open Connect)

Once the control plane decides you can watch something and identifies the right server, it hands off to Open Connect, Netflix's own CDN built specifically for video delivery. Open Connect Appliances (OCAs) are physical servers Netflix places inside ISP data centers, not in Netflix's own data centers. This means the video travels the shortest possible distance from server to screen.

Netflix caches content on OCAs proactively. Popular content gets pushed out to thousands of OCAs overnight, during off-peak hours, so it's ready before users request it. Around 95% of Netflix traffic is served from Open Connect without touching Netflix's AWS infrastructure at all.

Key Components of Cloud-Native Streaming Architecture

A cloud-native architecture allows Netflix to scale elastically, deploy updates seamlessly, and maintain high availability worldwide. BuildNexTech applies the same principles in enterprise-grade cloud modernization initiatives.

Key components include:

- Stateless services running on Amazon EC2 and AWS Lambda

- Auto-scaling groups handling peak traffic during global releases

- Distributed databases like Cassandra and DynamoDB

- Playback control plane for verifying licenses, DRM, and device capabilities

- Open Connect CDN for accelerated content delivery

- API Gateway + GraphQL for structured, efficient client interaction

- Content Management systems orchestrating metadata, formats, and operations

This model empowers Netflix to experiment continuously, improve performance, and scale without downtime—an engineering strategy leaders can adopt to future-proof their platforms.

Cloud Security Architecture for Streaming Platforms

Security is critical for protecting content, user data, and digital rights. Netflix uses multi-layered cloud security integrated deeply into its content pipeline.

Key elements of secure streaming include:

- Digital Rights Management (DRM): Preventing unauthorized playback

- Zero-trust authentication for APIs

- Encryption of video chunks & metadata during transit

- Isolated VPCs and subnets for content operations teams

- Threat detection using AWS-native tools

- Continuous delivery with secure pipelines like Spinnaker

- A/B testing & machine learning to identify anomalies

By integrating security throughout the cloud-native workflow, Netflix ensures content integrity while delivering billions of high-quality playbacks each month.

Why Netflix Left Its Own Data Centers (And What Happened When It Did)

In 2008, a database corruption issue took Netflix's DVD shipping operation offline for three days. That outage was the turning point. Netflix decided to move everything off its own hardware and onto AWS — and to never again have a single database that could take everything down with it.

The migration took about seven years. By January 2016, Netflix had shut down its last owned data center. The shift wasn't just technical: it required rebuilding the entire application as microservices, moving from relational databases to distributed NoSQL systems, and retraining engineering teams around a completely different operational model.

The payoff was real. Netflix can now scale to handle a global season premiere — 10x normal traffic — by having AWS auto-scaling groups spin up new EC2 instances automatically. Failures in one AWS region can be absorbed by redirecting traffic to other regions. No single component going down takes the service with it.
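The scaling behavior described above can be approximated with a proportional rule. This is a simplified sketch of the idea behind target-based auto-scaling, not AWS's actual algorithm. The function name, the CPU target, and the group limits are assumptions.

```python
import math

def desired_instances(current: int, cpu_now: float, cpu_target: float,
                      min_size: int = 2, max_size: int = 1000) -> int:
    """Proportional scaling rule: grow or shrink the fleet so that average
    utilization moves back toward the target, within group limits."""
    wanted = math.ceil(current * cpu_now / cpu_target)
    return max(min_size, min(max_size, wanted))

print(desired_instances(current=40, cpu_now=90.0, cpu_target=50.0))  # 72
print(desired_instances(current=40, cpu_now=20.0, cpu_target=50.0))  # 16
```

A real scaling policy would also account for instance warm-up time and cooldown periods, so that a short spike does not cause the fleet to oscillate.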

Distributed Systems That Power Netflix’s Global Scalability

Distributed systems allow Netflix to handle millions of simultaneous users and deliver stable video playback. Instead of centralizing workloads, Netflix uses cloud regions, edge servers, and OCAs to distribute traffic.

Distributed systems reduce failures, increase performance, and support global audiences.

What Are Distributed Systems and Why They Matter

Distributed systems combine multiple computers into a coordinated architecture. They ensure high reliability and performance even when individual components fail.

Benefits include:

- High availability

- Low latency

- Horizontal scalability

- Better fault tolerance

- Efficient global operations

For fast-growing businesses, distributed systems create predictable performance under heavy load.

Types of Distributed Systems Used by Netflix

Netflix uses specialized distributed systems throughout its pipeline.

Types include:

- Distributed Storage Systems for movies, shows, subtitles, artwork, and metadata

- Globally replicated user-data systems for watch history, preferences, and personalization

- Event-driven streaming systems for playback logs, analytics, and real-time recommendations

- Global edge delivery systems (CDNs + OCAs) that bring content closer to users

- Distributed cache layers for quick fetches of profiles, sessions, device data

- Service mesh and control-plane systems that manage routing, discovery, and resiliency across microservices

Each layer enhances performance and reliability.

How Distributed Architecture Ensures Low Latency Worldwide

Low latency is essential for buffer-free playback. Netflix achieves this by placing content near users and using intelligent routing.

How Netflix minimizes latency:

- Local caching via OCAs

- Regional edge nodes

- Chunk-based delivery

- Adaptive bitrate selection

- Open Connect Backbone

- Predictive caching

By bringing content closer to the viewer, buffering becomes almost nonexistent.

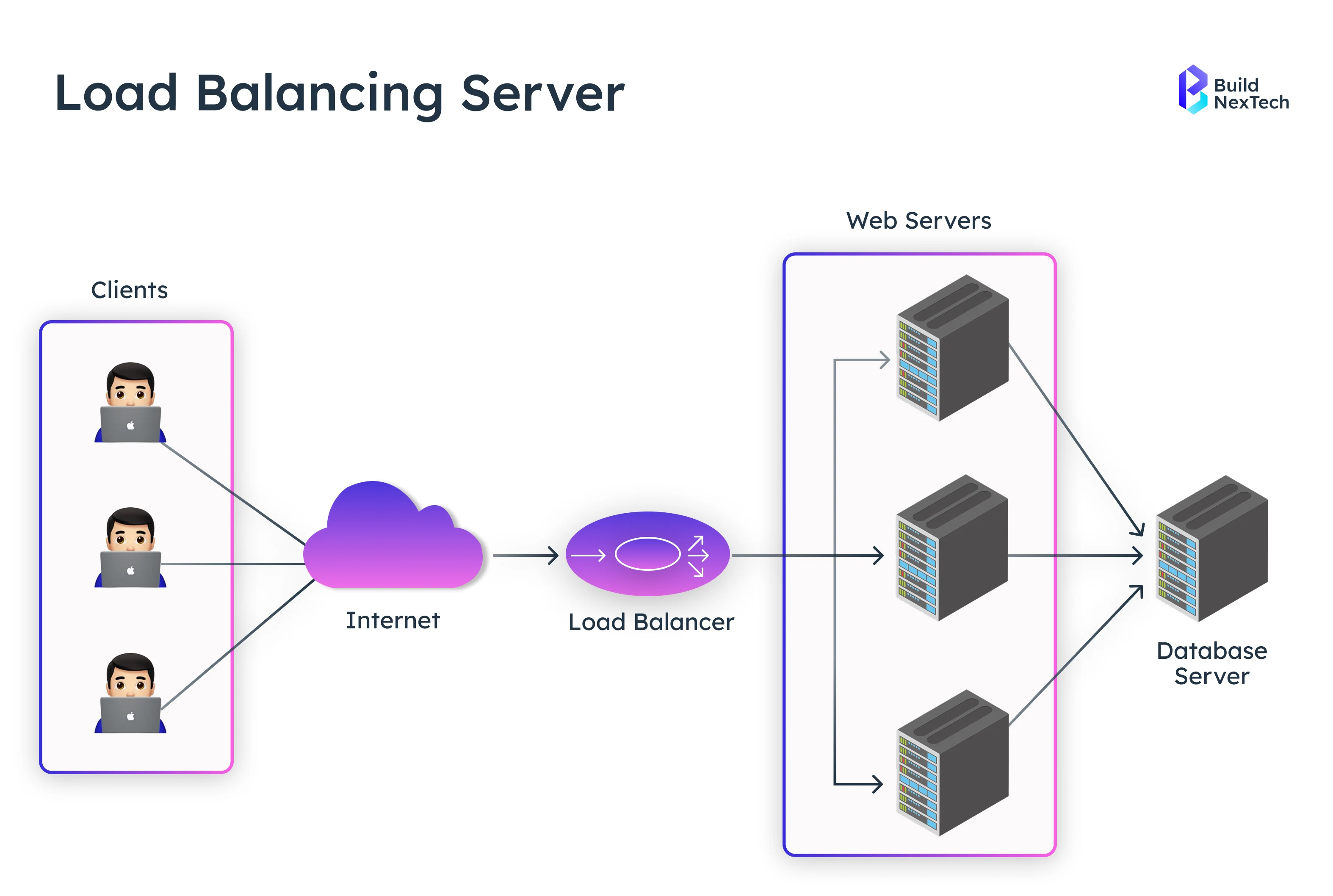

Load Balancing Techniques That Keep Netflix Running Smoothly

Load balancing ensures Netflix can handle massive global traffic without overloading servers. Millions of requests flow through distributed systems, requiring precise routing to keep playback smooth.

Netflix’s load balancing layers:

- Client-side load balancing

- AWS Elastic Load Balancers

- Service discovery via Eureka

- Fault isolation with Hystrix

- Geo routing for global distribution

- Playback-specific routing logic

This multi-layer approach ensures uninterrupted viewing during peak traffic.

What Load Balancing Means in Cloud Streaming

Load balancing distributes traffic across servers to prevent overload. For streaming, this covers metadata requests, playback sessions, and video delivery.

Key mechanisms:

- Service discovery

- Dynamic routing

- Health checks

- Failover logic

- Latency-based routing

Proper balancing ensures users receive fast, consistent playback.
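Two of the mechanisms listed above, health checks and latency-based routing, can be combined in a few lines. This is a sketch under simple assumptions: health and latency are already known for each server, and the `Server` and `route` names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Server:
    name: str
    healthy: bool
    latency_ms: float

def route(servers: list) -> Server:
    """Send the request to the healthy server with the lowest latency."""
    healthy = [s for s in servers if s.healthy]
    if not healthy:
        raise RuntimeError("no healthy servers available")
    return min(healthy, key=lambda s: s.latency_ms)

pool = [Server("a", True, 40.0), Server("b", True, 12.0), Server("c", False, 3.0)]
print(route(pool).name)  # b

pool[1].healthy = False  # a failed health check removes b from rotation
print(route(pool).name)  # a
```

Server `c` has the best latency but is never chosen because it is unhealthy. Failover falls out of the same rule once `b` is marked down.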

Modern Load Balancing Methods Used by Netflix

Netflix uses advanced load balancing to route billions of daily requests.

Key methods:

- Client-side routing in device apps

- Eureka for service discovery

- Hystrix for fault tolerance

- AWS ELB/ALB/NLB

- Geo-load balancing

- Red/black deployments with Spinnaker

These techniques keep the platform stable during global surges.
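Service discovery and client-side load balancing fit together as sketched below. The `Registry` class is an in-memory stand-in written for this example and does not reproduce Eureka's API. The service name and addresses are made up.

```python
import itertools

class Registry:
    """In-memory stand-in for a service registry."""
    def __init__(self):
        self._instances = {}  # service name -> list of "host:port"

    def register(self, service: str, address: str):
        self._instances.setdefault(service, []).append(address)

    def lookup(self, service: str) -> list:
        return list(self._instances.get(service, []))

class RoundRobinClient:
    """Client-side balancer: the caller picks the instance itself."""
    def __init__(self, registry: Registry, service: str):
        addresses = registry.lookup(service)
        if not addresses:
            raise LookupError(f"no instances registered for {service}")
        self._cycle = itertools.cycle(addresses)

    def next_instance(self) -> str:
        return next(self._cycle)

registry = Registry()
for port in (8001, 8002, 8003):
    registry.register("playback", f"10.0.0.1:{port}")

client = RoundRobinClient(registry, "playback")
picks = [client.next_instance() for _ in range(4)]
print(picks)  # ['10.0.0.1:8001', '10.0.0.1:8002', '10.0.0.1:8003', '10.0.0.1:8001']
```

Because the client chooses the instance, no central proxy sits in the request path. A production client would also refresh its instance list periodically as services scale up and down.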

How Load Balancing Reduces Buffering and Lag

Good load balancing prevents congestion by sending requests to optimal servers.

How buffering is reduced:

- Smart routing based on server health and latency

- Dynamic distribution of peak traffic across multiple clusters

- Regional redirects to the nearest optimal edge location

- Automatic shifting of traffic away from overloaded or failing nodes

- Continuous monitoring to detect and resolve congestion early

This keeps stream quality stable, even when millions of users join at the same time.

Microservices Architecture That Powers Netflix’s Speed

Microservices allow Netflix to move fast, deploy independently, and scale each service based on demand. This modular approach accelerates innovation and reduces risk.

Microservices advantages:

- Independent deployments

- Fault isolation

- Faster updates

- Global scalability

- Observability & monitoring

- DevOps-friendly workflows

This architecture supports rapid evolution of the platform.

How Netflix's Microservices Work in Practice

Netflix runs 500+ independent microservices. Each owns a single function — authentication, recommendations, search, playback control, billing, and so on. Services communicate via APIs and an internal event bus.

The design patterns Netflix relies on most:

Circuit Breaker (Hystrix) — If a downstream service starts failing, Hystrix stops sending requests to it and returns a cached or default response instead. This prevents one failing service from causing a cascade that takes everything else down. When the recommendation service goes down, you still get a home page — just with less-personalized content.
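The circuit breaker idea can be sketched without any library. The class below is a simplified illustration, not Hystrix's implementation. The thresholds, the `recommendations` and `popular_titles` functions, and the fallback content are assumptions.

```python
import time

class CircuitBreaker:
    def __init__(self, max_failures: int, reset_after: float):
        self.max_failures = max_failures
        self.reset_after = reset_after  # cool-down in seconds
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, func, fallback):
        # While open, skip the downstream call until the cool-down has passed.
        if self.opened_at is not None:
            if time.monotonic() - self.opened_at < self.reset_after:
                return fallback()
            self.opened_at = None  # half-open: allow one trial call
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = time.monotonic()
            return fallback()
        self.failures = 0  # a success closes the circuit again
        return result

calls = 0

def recommendations():
    """Hypothetical downstream call that is currently failing."""
    global calls
    calls += 1
    raise TimeoutError("recommendation service is down")

def popular_titles():
    """Default response served while the circuit is open."""
    return ["popular-1", "popular-2"]

breaker = CircuitBreaker(max_failures=2, reset_after=30.0)
rows = [breaker.call(recommendations, popular_titles) for _ in range(5)]
print(calls)    # 2
print(rows[0])  # ['popular-1', 'popular-2']
```

Five requests were served, but only two reached the failing service. The remaining three were answered from the fallback without adding load downstream.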

Bulkhead Isolation — Traffic for different feature sets is isolated so that a spike in search queries doesn't steal resources from the playback service.
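One common way to build a bulkhead is a per-feature concurrency limit. The sketch below assumes semaphores as the isolation mechanism. The `Bulkhead` class and the limits are illustrative, and real systems often isolate with separate thread pools or separate clusters instead.

```python
import threading

class Bulkhead:
    """Caps concurrent calls for one feature so it cannot exhaust shared capacity."""
    def __init__(self, name: str, max_concurrent: int):
        self.name = name
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def run(self, func):
        if not self._slots.acquire(blocking=False):
            raise RuntimeError(f"{self.name} bulkhead is full")
        try:
            return func()
        finally:
            self._slots.release()

search = Bulkhead("search", max_concurrent=1)
playback = Bulkhead("playback", max_concurrent=1)

def search_spike():
    # This function runs while holding search's only slot, so a second
    # search call is rejected, but playback still has its own capacity.
    rejected = None
    try:
        search.run(lambda: "second search")
    except RuntimeError as err:
        rejected = str(err)
    return rejected, playback.run(lambda: "playing")

result = search.run(search_spike)
print(result)  # ('search bulkhead is full', 'playing')
```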

Saga Pattern — For multi-step operations that span several services (like starting a new subscription), Netflix uses sagas to coordinate the sequence and handle rollbacks if something fails partway through.
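A saga can be modeled as a list of steps, each paired with a compensating action. The sketch below is a minimal in-process version. The step names are hypothetical, and a real saga would run across services with durable state.

```python
def run_saga(steps: list) -> bool:
    """Run (action, compensation) pairs in order. If an action fails,
    run the compensations of the completed steps in reverse order."""
    done = []
    for action, compensate in steps:
        try:
            action()
        except Exception:
            for undo in reversed(done):
                undo()
            return False
        done.append(compensate)
    return True

log = []

def charge_card():
    raise RuntimeError("payment declined")

steps = [
    (lambda: log.append("create account"), lambda: log.append("delete account")),
    (lambda: log.append("reserve plan"), lambda: log.append("release plan")),
    (charge_card, lambda: log.append("refund card")),
]

ok = run_saga(steps)
print(ok)   # False
print(log)  # ['create account', 'reserve plan', 'release plan', 'delete account']
```

The failed step's own compensation ("refund card") never runs, because the charge never succeeded. Only completed steps are undone.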

Event-Driven Architecture (Kafka) — Most inter-service communication for non-critical paths happens through Kafka event streams, not direct API calls. This decouples services and lets each one process at its own pace. Netflix ingests trillions of events per day through Kafka — playback telemetry, viewing history, A/B test results, and more.
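The decoupling that an event stream provides can be shown with an append-only log and per-consumer offsets. The `Topic` class is an in-memory stand-in written for this example and is not the Kafka client API. The event fields and consumer names are made up.

```python
from collections import defaultdict

class Topic:
    """In-memory stand-in for a log-style event stream."""
    def __init__(self):
        self._events = []                 # append-only log
        self._offsets = defaultdict(int)  # consumer name -> next index to read

    def publish(self, event: dict):
        self._events.append(event)

    def poll(self, consumer: str, max_events: int = 10) -> list:
        """Return the next unread events for this consumer and advance its offset."""
        start = self._offsets[consumer]
        batch = self._events[start:start + max_events]
        self._offsets[consumer] = start + len(batch)
        return batch

playback_events = Topic()
for position in (0, 10, 20):
    playback_events.publish({"type": "heartbeat", "position_s": position})

# Two independent consumers read the same stream at their own pace.
history = playback_events.poll("viewing-history", max_events=2)
analytics = playback_events.poll("analytics")
print(len(history), len(analytics))                  # 2 3
print(len(playback_events.poll("viewing-history")))  # 1
```

The producer never waits for either consumer, and a slow consumer simply falls behind on its own offset without affecting the other.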

Containerization and Orchestration Behind Netflix’s Infrastructure

Containers help Netflix deploy microservices consistently and efficiently across environments. Orchestration ensures seamless scaling and self-healing.

Containerization foundations:

- Lightweight, isolated services

- Fast rollouts and rollbacks

- Immutable deployments

- Cloud-native automation

- Platform-wide reliability

Containerization keeps Netflix’s infrastructure agile and predictable.

How Containers Improve Resilience in Streaming Platforms

Containers enable rapid scaling, predictable performance, and fault isolation.

Resilience benefits:

- Faster startup

- Lower resource usage

- Easy rollback

- Horizontal scaling

- Better resource management

- Isolated environments

This helps Netflix keep services healthy under global load.

Kubernetes vs Docker for Large-Scale Workloads

Docker packages applications, while Kubernetes orchestrates them.

Docker strengths:

- Simple container packaging

- Developer-friendly

- Lightweight runtime

Kubernetes strengths:

- Advanced orchestration

- Autoscaling

- Service discovery

- Self-healing

- RBAC and secret management

At scale, Kubernetes-like orchestration becomes essential.

Security Best Practices for Docker-Based Deployments

Docker container security is essential for DRM-protected video and user data.

Best practices include:

- Vulnerability scanning

- Least privilege policies

- Secrets management

- Network segmentation

- Runtime monitoring

- Immutable deployments

These ensure strong security across streaming pipelines.

Open Connect: Why Netflix Built Its Own CDN

Most companies rent CDN capacity from Akamai or Cloudflare. Netflix built their own — Open Connect — because at their scale, renting was more expensive and less controllable than owning.

Open Connect works differently from a traditional CDN. Instead of placing servers in Netflix-owned data centers and routing traffic to them, Netflix physically installs Open Connect Appliances (OCAs) inside ISP data centers. Comcast, Charter, AT&T, and hundreds of other ISPs around the world host Netflix servers inside their own buildings.

This matters for two reasons. First, latency: a video chunk traveling from a server inside your ISP's local data center takes milliseconds, not the hundreds of milliseconds it would take to cross the internet backbone to a distant AWS region. Second, cost: ISPs benefit from hosting OCAs because it reduces the traffic they need to move across expensive inter-network connections. Netflix benefits because they get free hosting inside ISPs in exchange for reducing that ISP's transit costs.

Netflix pre-populates OCAs with content during off-peak hours. Overnight, Netflix's routing system determines which movies and shows are likely to be popular in each region the next day and pushes those files to the relevant OCAs. When you hit play, 95% of the time your video is already sitting on a server inside your ISP, waiting.
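The overnight placement decision can be approximated as a greedy fill by predicted popularity. This is a sketch of the general idea only. The function name, view predictions, file sizes, and capacity are invented, and Netflix's actual placement algorithms are not described in this article.

```python
def plan_fill(predicted_views: dict, sizes_gb: dict, capacity_gb: float) -> list:
    """Greedy fill: store the titles with the most predicted views first,
    skipping any title that no longer fits in the remaining space."""
    plan, used = [], 0.0
    for title in sorted(predicted_views, key=predicted_views.get, reverse=True):
        if used + sizes_gb[title] <= capacity_gb:
            plan.append(title)
            used += sizes_gb[title]
    return plan

predicted = {"drama": 9000, "docu": 1200, "comedy": 4000, "classic": 300}
sizes = {"drama": 60.0, "docu": 30.0, "comedy": 50.0, "classic": 20.0}
print(plan_fill(predicted, sizes, capacity_gb=130.0))  # ['drama', 'comedy', 'classic']
```

The documentary is skipped because it would overflow the appliance, while the smaller classic title still fits in the leftover space.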

How CDN Edge Servers Deliver Content Faster

CDN edge servers bring content physically closer to users.

Faster delivery through:

- Local content storage

- Reduced hops

- Regional content optimization

- Lower ISP bandwidth usage

- High-speed chunk delivery

This dramatically improves start times and reduces buffering.

Multi-Layer Caching Strategies Used by Netflix

Netflix uses multiple caching layers to minimize latency.

Layers include:

- ISP-level OCAs

- Regional caches

- EVCache for metadata

- Client-side caching

- Predictive caching using ML

A layered system guarantees fast access to popular content.
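A layered lookup can be sketched as a walk from the nearest cache to the farthest, with the origin as a last resort. This is a generic read-through model using dictionaries as stand-ins for each layer. It fills caches on a miss, which is a simplification, since the article describes Netflix filling its edge caches proactively.

```python
def fetch(chunk_id: str, layers: list, origin: dict):
    """Look through cache layers from nearest to farthest. On a hit, copy the
    chunk into every nearer layer that missed so the next read is faster."""
    missed = []
    for name, store in layers:
        if chunk_id in store:
            data, source = store[chunk_id], name
            break
        missed.append(store)
    else:
        # No layer had the chunk, so read it from the origin.
        data, source = origin[chunk_id], "origin"
    for store in missed:
        store[chunk_id] = data
    return data, source

client, isp, regional = {}, {}, {"c1": b"video-bytes"}
layers = [("client", client), ("isp", isp), ("regional", regional)]
origin = {"c1": b"video-bytes", "c2": b"other-bytes"}

print(fetch("c1", layers, origin)[1])  # regional
print(fetch("c1", layers, origin)[1])  # client
print(fetch("c2", layers, origin)[1])  # origin
```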

How Netflix Reduces Bandwidth Costs and Improves Quality

Netflix uses optimized video formats and caching to lower delivery costs.

Cost-saving strategies:

- HEVC and AV1 encoding

- Chunk-level TTL optimization

- Parallel reads

- Adaptive bitrate streaming

- Predictive content placement

This reduces cloud egress costs while improving video quality.

What This Architecture Actually Means If You're Building a Platform

Most companies are not Netflix. But the architectural decisions Netflix made are instructive even at smaller scale, because many of them were made precisely because Netflix's original simpler architecture failed.

Start stateless. Netflix's original monolith stored session state on individual servers, which made scaling painful. Every new microservice they built was stateless by design — any instance could handle any request. This is easier to do from the start than to retrofit later.

Separate your control and data planes early. The AWS / Open Connect split looks obvious in retrospect, but most architectures couple their business logic tightly to their data delivery. Separating them lets you scale, optimize, and change each independently.

Cache at the edge, not just at the database. EVCache and Open Connect both exist to avoid hitting origin systems for every request. The closer your cache is to your users, the better. For most platforms, this means picking a CDN with good regional coverage and actually using it for static and semi-static content, not just images.

Build for failure. Netflix's Chaos Engineering team deliberately kills production services to test whether the rest of the system can absorb the failure. Most teams can't justify that, but the underlying principle — design your system assuming components will fail, not hoping they won't — is universally applicable.
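A small-scale version of this kind of experiment is sketched below: terminate one instance at random, then check that the service still answers. The `Service` class and `kill_random_instance` function are hypothetical and do not represent Netflix's tooling.

```python
import random

class Service:
    def __init__(self, instances: int):
        self.alive = [True] * instances

    def handle(self) -> str:
        """Serve from any live instance; fail only if none are left."""
        if not any(self.alive):
            raise RuntimeError("service unavailable")
        return "ok"

def kill_random_instance(service: Service, rng: random.Random) -> int:
    """Fault injection: terminate one live instance chosen at random."""
    live = [i for i, up in enumerate(service.alive) if up]
    victim = rng.choice(live)
    service.alive[victim] = False
    return victim

rng = random.Random(7)  # fixed seed so the experiment is repeatable
svc = Service(instances=3)
kill_random_instance(svc, rng)
print(svc.handle())    # ok
print(sum(svc.alive))  # 2
```

The same check works as an automated test in a staging environment, which is a realistic starting point for teams that cannot justify injecting faults in production.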

Observe everything. Netflix ingests trillions of telemetry events daily. They know exactly what caused every buffering event, every failed play, every quality switch. That observability is why they can ship changes continuously without breaking the user experience.

BuildNexTech applies these principles to help organizations modernize, adopt cloud-native patterns, and scale reliably.

Designing for Scalability From Day One

Planning for scale prevents performance issues and major re-architecture efforts later. Teams can set a strong foundation using:

- Stateless services that allow easy replication

- Horizontal scaling instead of relying on large servers

- Cloud-native compute that adjusts based on traffic

- Global CDNs to deliver content closer to users

- Elastic load balancing to distribute user requests smoothly

A clear scaling strategy ensures the platform continues to perform even as traffic and data volumes grow.

Implementing Distributed Systems for Reliability

Distributed systems remove single points of failure and keep applications available under unpredictable conditions. Key reliability patterns include:

- Multi-region failover to withstand regional outages

- Distributed databases for consistent global performance

- Automatic failover to shift traffic instantly during disruptions

- Parallel reads to speed up data access

- Chaos Engineering to proactively test and strengthen system resilience

This approach keeps services available and stable through most traffic spikes, hardware failures, and network issues.

Using Microservices to Accelerate Innovation

Microservices enable rapid delivery and experimentation.

Innovation benefits:

- Independent deployments

- Faster iteration

- Smaller failure domain

- Technology diversity

- Efficient scaling

This supports continuous modernization and rapid growth.

Conclusion—Key Takeaways for Engineers and Businesses

Netflix works because of two clean separations: control from data, and logic from delivery. AWS handles the intelligence — who you are, what you're allowed to watch, what server should serve you. Open Connect handles the last mile — the actual video, sitting inside your ISP's building before you even ask for it. Microservices mean the recommendation engine going down doesn't stop you from watching. Adaptive bitrate streaming means poor network conditions degrade quality gracefully instead of crashing playback. Chaos Engineering means the team has already survived the failures they haven't had yet.

None of this was designed all at once. It was built incrementally, usually as a response to a specific failure mode. The 2008 database outage forced the move to AWS. Monolith scaling limits forced the microservices split. Bandwidth costs forced Open Connect. Good architecture usually comes from solving real problems, not from planning in the abstract.

Netflix’s architecture is a gold-standard example of global scalability, cloud-native engineering, and user-first design. Its distributed systems, caching, microservices, and load balancing strategies form a playbook for any modern digital business.

Final takeaways:

- Distributed systems boost reliability and global reach

- Microservices accelerate innovation

- CDNs + caching reduce latency and cost

- Adaptive streaming ensures high-quality playback

- Cloud-native infrastructure improves agility

- Telemetry and ML unlock continuous optimization

At BNXT.ai, we help engineering teams design and implement cloud-native architectures (distributed systems, microservices, CDN strategy, and observability pipelines) without the multi-year migration timeline Netflix needed. If you're building an OTT product, a high-traffic API platform, or migrating off a monolith, talk to us.

People Also Ask

How does Netflix stream to millions of users at the same time?

Netflix uses a globally distributed network called Open Connect, its own CDN. Instead of delivering video from a central server, Netflix places cached copies of shows inside ISPs around the world. This reduces distance, improves speed, and supports millions of concurrent streams.

What is Netflix’s microservices architecture?

Netflix runs hundreds of microservices, each responsible for a small, independent function such as recommendations, playback, authentication, billing, or user profiles. These services communicate through APIs and are deployed across multiple AWS regions. This makes the system fault-tolerant and scalable.

Why did Netflix move toward a modular, service-driven architecture?

Instead of relying on one large application, Netflix adopted a modular service model where each component handles a focused responsibility — such as search, playback setup, user history, device management, or recommendations. This structure helps teams ship updates faster, isolate failures, and scale individual components based on demand without affecting the rest of the system.

What database systems does Netflix use?

Netflix uses a mix of databases:

- Cassandra for distributed storage

- DynamoDB for high-availability NoSQL operations

- MySQL for relational use cases

- Elasticsearch for search

Using multiple systems helps reduce bottlenecks.

How does Netflix maintain smooth playback even on slow networks?

Netflix combines smart encoding techniques and network-aware delivery. The platform generates multiple versions of every video, predicts likely bandwidth drops, and switches quality levels instantly using ABR. On top of that, local ISP-level cache servers reduce travel distance, while background preloading minimizes interruptions during viewing.
