Most teams wire up an LLM, watch latency spike, and blame the model. Usually, it's not the model. It's the event layer - the part that decides when the AI runs, and on what.

Webhooks fix this. They fire the moment something happens. Not on a schedule. Not when a user manually triggers something. The second a payment fails, a form submits, or a repo gets a push, your pipeline wakes up. This guide covers how to actually build that pipeline, from endpoint to action.

What Are Webhooks and Why They Matter in Event-Driven Systems

Webhooks are HTTP callbacks. When something happens in System A, it sends an HTTP POST request to a URL you registered, which System B (your service) listens on. No polling. No waiting. The event finds you.

Polling burns compute and adds delay. A webhook fires the moment a payment clears, a form submits, or a deployment completes - your system reacts in milliseconds instead of minutes.


How Webhooks Work in Production Systems (Step-by-Step Flow)

Here's what actually happens when a webhook fires:

- An event occurs in the source system (e.g., Stripe payment confirmed).

- The source sends an HTTP POST to your registered webhook URL.

- Your server receives the payload (usually JSON).

- You validate the signature (HMAC-SHA256 is standard).

- You enqueue the payload for async processing.

- You return 200 OK before the sender's timeout (GitHub allows 10 seconds; Slack expects a response within 3), or the sender treats the delivery as failed and retries.

The timeout is where most teams trip up. If your LLM call happens synchronously inside the webhook handler, you will blow through it. Acknowledge fast, process async.

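```python
import hashlib
import hmac
import json
import os

from flask import Flask, jsonify, request

from tasks import process_with_llm  # Celery task defined in the worker module

app = Flask(__name__)

# Load the shared secret from the environment instead of hard-coding it.
WEBHOOK_SECRET = os.environ["WEBHOOK_SECRET"]


@app.route("/webhook", methods=["POST"])
def handle_webhook():
    # Header name varies by provider (GitHub, for example, sends X-Hub-Signature-256).
    signature = request.headers.get("X-Signature-256", "")
    payload = request.get_data()  # raw bytes: verify exactly what was signed

    expected = "sha256=" + hmac.new(
        WEBHOOK_SECRET.encode(), payload, hashlib.sha256
    ).hexdigest()

    # Constant-time comparison avoids leaking timing information.
    if not hmac.compare_digest(signature, expected):
        return jsonify({"error": "Invalid signature"}), 401

    data = json.loads(payload)
    process_with_llm.delay(data)  # offload to the queue; never call the LLM here
    return jsonify({"status": "received"}), 200
```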

Webhook vs API: Key Differences, Use Cases, and When to Use Each

The webhook vs API question comes down to who initiates communication. REST APIs are pull-based - your app requests data when it needs it. Webhooks are push-based - the source notifies you when something changes.

For LLM pipelines, webhooks win almost every time. You want the AI to respond to what just happened, not a stale poll from 30 seconds ago.


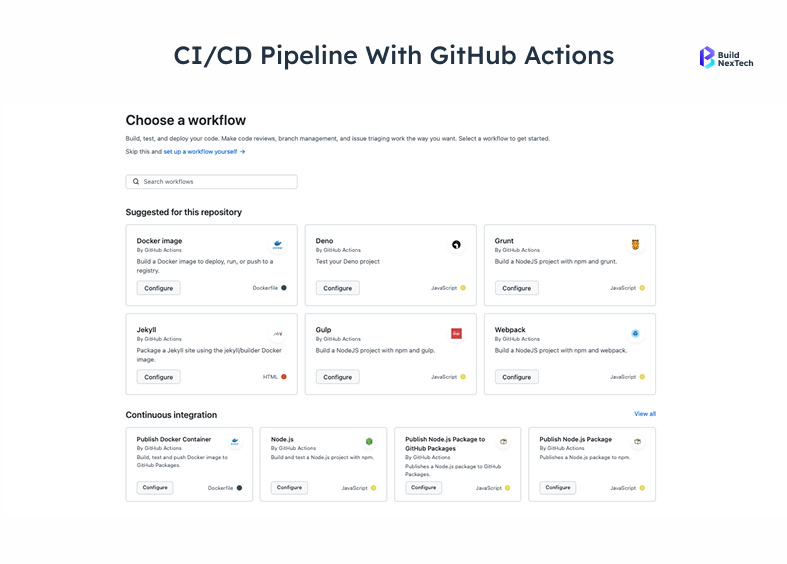

Where Are Webhooks Used in Real Production Systems?

Webhooks run core business flows across every major platform:

- Stripe fires on successful payments, opened disputes, and cancelled subscriptions.

- GitHub triggers on push, PR merge, and issue created - CI/CD pipelines run on these.

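- Discord delivers slash commands and other interactions to an HTTP endpoint your bot registers; message, reaction, and member-join events arrive over its Gateway WebSocket rather than as webhooks.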

- HubSpot / Salesforce push contact updates to downstream systems in real time.

Each is a potential LLM trigger. A Stripe invoice.payment_failed event can run an LLM to draft a personalized recovery email. A GitHub push can trigger a code review agent.
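One way to turn these events into LLM triggers is a small dispatch table mapping each event type to a prompt builder. A minimal sketch, assuming payloads have been normalized to Stripe's shape (a type plus data.object); for GitHub, the event type arrives in the X-GitHub-Event header rather than the body. The builder functions and prompt wording are hypothetical.

```python
from typing import Callable, Optional

# Hypothetical prompt builders, one per event type you care about.
def payment_failed_prompt(obj: dict) -> str:
    return (
        "A customer's payment failed. "
        f"Amount due: {obj.get('amount_due')} {obj.get('currency', '')}. "
        "Draft a short, friendly recovery email."
    )

def push_prompt(obj: dict) -> str:
    commits = obj.get("commits", [])
    return f"Review this push of {len(commits)} commit(s) and flag risky changes."

# Event type -> prompt builder. Event types not listed never reach the LLM.
ROUTES: dict[str, Callable[[dict], str]] = {
    "invoice.payment_failed": payment_failed_prompt,
    "push": push_prompt,
}

def build_prompt(event: dict) -> Optional[str]:
    builder = ROUTES.get(event.get("type", ""))
    if builder is None:
        return None  # not an LLM trigger: acknowledge and ignore
    return builder(event.get("data", {}).get("object", {}))
```

Keeping the table explicit means adding a new trigger is one entry, and anything unlisted is acknowledged without spending tokens.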

How to Test Webhooks Before Pushing to Production

You need a public URL that your source system can reach during development. Tools that work:

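Don't skip signature validation in testing. Signature mismatches are a common production webhook failure that never shows up locally when validation is switched off; a classic cause is verifying a re-serialized JSON body instead of the raw bytes the sender signed.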
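To exercise validation without a live provider, you can sign a test payload yourself with the same shared secret and the "sha256=<hex>" format the Flask handler above expects. A stdlib-only sketch; the secret is a placeholder, and real providers differ in header names and formats (Stripe, for example, signs a timestamp together with the body).

```python
import hashlib
import hmac
import json

SECRET = b"test_secret"  # placeholder; use your endpoint's real secret in staging

def sign(body: bytes, secret: bytes = SECRET) -> str:
    """Produce a header value in the 'sha256=<hex digest>' format."""
    return "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()

def verify(body: bytes, header: str, secret: bytes = SECRET) -> bool:
    """Mirror of the server-side check: recompute and compare in constant time."""
    return hmac.compare_digest(sign(body, secret), header)

# Sign the exact bytes you send; re-serializing JSON on the receiving side
# (different key order or whitespace) changes the digest.
body = json.dumps({"id": "evt_test_1", "type": "invoice.payment_failed"}).encode()
header = sign(body)

print(verify(body, header))                          # True
print(verify(body.replace(b"evt", b"EVT"), header))  # False: body was altered
print(verify(body, sign(body, b"wrong_secret")))     # False: wrong secret
```

To hit your local server, POST the same body bytes with the header set (for instance via urllib.request.Request with data=body) to your public test URL.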

Where LLMs Fit in Real-Time Systems (Beyond Basic Automation)

Static automation - if X then Y - breaks the moment something doesn't fit the mold. A cancellation reason written in free text. A support ticket that's technically low priority, but the customer is furious. A sales email that's half complaint, half question. You can't write a rule for every variation. An LLM doesn't need one.

From Static Automation to Intelligent Event Processing with LLMs

The difference in practice:

Static: send_email(template="cancellation_confirmed")

LLM-powered:

```python
# event, llm, and send_email are placeholders for your event object, LLM client, and mailer
reason = event.data.get("cancellation_reason", "")
prompt = f"Customer cancelled. Reason: '{reason}'. Write a short empathetic response offering to help."
response = llm.complete(prompt)
send_email(body=response)
```

The second version handles "too expensive," "found a better deal," and "accidental order" differently - without a rule for each. This is what LLMs bring to event-driven systems: judgment-in-the-moment.

Common Architectures for LLM Integration in Backend Systems

For most teams starting out, the queue pattern is the right default. It decouples receipt from processing and prevents LLM latency from blocking your webhook endpoint.
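To see why the queue decouples receipt from processing, here is a stdlib-only sketch where queue.Queue and a worker thread stand in for Redis, SQS, or RabbitMQ. slow_llm_call is a hypothetical stand-in that just sleeps; the point is that receive() returns immediately however slow the model is.

```python
import queue
import threading
import time

jobs: "queue.Queue[dict]" = queue.Queue()
results: list[str] = []

def slow_llm_call(event: dict) -> str:
    """Hypothetical stand-in for a model call that takes a while."""
    time.sleep(0.2)
    return f"handled {event['id']}"

def receive(event: dict) -> tuple[int, str]:
    """Webhook side: enqueue and acknowledge; never wait on the LLM."""
    jobs.put(event)
    return 200, "received"

def worker() -> None:
    """Worker side: pull jobs and do the slow work at its own pace."""
    while True:
        event = jobs.get()
        try:
            results.append(slow_llm_call(event))
        finally:
            jobs.task_done()

threading.Thread(target=worker, daemon=True).start()

start = time.perf_counter()
status, _ = receive({"id": "evt_1"})
ack_seconds = time.perf_counter() - start  # far below the 0.2 s "LLM" time

jobs.join()  # wait for the worker (only needed for this demo)
print(status, results)
```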

Connecting Webhooks to LLMs: Real-Time AI Pipelines Explained

The architecture is straightforward once you stop trying to do everything synchronously. Webhook fires → validate → enqueue → acknowledge → worker picks up → LLM processes → action executes. Each step has one job.

At bnxt.ai, this same pattern runs across multiple client workflows - sales automation, support routing, lead enrichment. Different use cases, same pipeline shape. That's the point.

How Webhooks Trigger LLM Workflows in Real-Time Systems

Source System

|

HTTP POST (event payload)

|

Webhook Endpoint (validate → enqueue → 200 OK)

|

Message Queue (Redis / SQS / RabbitMQ)

|

Worker (picks up the job)

|

LLM Processing Layer

|

Action Layer (email, DB write, Slack message, API call)

Each layer has one responsibility. The webhook endpoint doesn't know about LLMs. The LLM layer doesn't know about webhooks. This separation is what lets you swap components without rewriting the system.
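The one-responsibility-per-layer idea maps naturally onto small interfaces. A sketch using typing.Protocol, where EchoLLM and CollectAction are hypothetical stand-ins: swapping in a real model client or a Slack sender means writing another class with the same method, without touching the worker.

```python
from typing import Protocol

class LLMClient(Protocol):
    def complete(self, prompt: str) -> str: ...

class Action(Protocol):
    def execute(self, text: str) -> None: ...

class EchoLLM:
    """Hypothetical stand-in for a real model client."""
    def complete(self, prompt: str) -> str:
        return f"[draft] {prompt}"

class CollectAction:
    """Hypothetical action that records output instead of emailing or posting."""
    def __init__(self) -> None:
        self.sent: list[str] = []

    def execute(self, text: str) -> None:
        self.sent.append(text)

def run_worker_step(event: dict, llm: LLMClient, action: Action) -> None:
    # The worker depends only on the interfaces, not on providers or transports.
    prompt = f"Summarize event {event['type']} for the account team."
    action.execute(llm.complete(prompt))

action = CollectAction()
run_worker_step({"type": "invoice.payment_failed"}, EchoLLM(), action)
print(action.sent)
```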


Production Use Cases: Slack Bots, CRM Automation, AI Agents

- Slack bots are the most common starting point: a new message fires a webhook, the LLM figures out what the user actually wants, and the bot routes or responds accordingly. Simple to build, immediately useful.

- CRM automation is where it gets more interesting. A contact update in HubSpot triggers a webhook, and instead of a static lead score, you get an LLM that reads the context (recent activity, company size, message tone) and makes a call on priority. That context is exactly what numeric rules miss, which is why LLM scoring tends to close the follow-up gaps static scoring creates.

- On the e-commerce side, abandoned cart recovery is the obvious use case, but post-cancellation follow-up, where the LLM responds to the customer's stated reason, is often more valuable.

- Code review agents and incident response bots follow the same pattern: event fires, LLM reads context (diff, logs, alert details), and produces something a human can act on immediately.
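Whatever the use case, check the LLM's output before the action layer acts on it. A sketch for the CRM case, assuming the model was prompted to reply with JSON like {"priority": "high", "reason": "..."}; the field names and allowed values are hypothetical. Malformed replies fall back to a safe default instead of crashing the worker.

```python
import json

ALLOWED_PRIORITIES = {"low", "medium", "high"}
FALLBACK = {"priority": "medium", "reason": "unparseable model output"}

def parse_lead_score(raw: str) -> dict:
    """Validate the model's JSON reply; fall back rather than act on garbage."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return dict(FALLBACK)
    if not isinstance(data, dict):
        return dict(FALLBACK)
    priority = str(data.get("priority", "")).lower()
    if priority not in ALLOWED_PRIORITIES:
        return dict(FALLBACK)
    # Cap the free-text field so a rambling reply can't bloat the CRM record.
    return {"priority": priority, "reason": str(data.get("reason", ""))[:500]}

print(parse_lead_score('{"priority": "HIGH", "reason": "asked for pricing twice"}'))
print(parse_lead_score("Sure! Here is the score: high"))
```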

Event-Driven Architecture - The Engine Behind Real-Time AI Triggers

Event-driven architecture (EDA) is a design pattern where services communicate by producing and consuming events through a central broker, rather than calling each other directly.

Webhooks are point-to-point. Event-driven architecture extends that concept to systems with hundreds of producers and consumers: events flow through a central broker, and any service can subscribe.
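The key difference from a webhook is fan-out: a producer publishes once, and every subscriber to the topic receives the event. A toy in-process broker shows the shape; Kafka, RabbitMQ, or SNS/SQS play this role in production, with the persistence and delivery guarantees this sketch lacks.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]

class InMemoryBroker:
    """Toy broker: topic -> list of subscriber callbacks (no persistence)."""

    def __init__(self) -> None:
        self._subs: defaultdict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subs[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        # Every subscriber gets the event; the producer knows none of them.
        for handler in self._subs[topic]:
            handler(event)
        return len(self._subs[topic])

broker = InMemoryBroker()
seen: list[str] = []
broker.subscribe("orders", lambda e: seen.append(f"llm-summarizer:{e['id']}"))
broker.subscribe("orders", lambda e: seen.append(f"analytics:{e['id']}"))
delivered = broker.publish("orders", {"id": "ord_42"})
print(delivered, seen)
```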

Why Event Triggers Are Critical in High-Volume Business Systems

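At low volume, polling is fine. At 10,000 events per minute, it falls over. Event triggers let you handle each event as it arrives instead of repeatedly querying for changes, which keeps load off your database. Most webhook and event systems deliver at least once and don't guarantee ordering, so handlers still need the idempotency checks covered later in this guide.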

Good event trigger design means your LLM only wakes up when it's actually needed - not on every database write.
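In practice that means a cheap, deterministic filter in front of the LLM. A sketch, assuming each event carries a type and a list of changed fields; which types and fields count as relevant are hypothetical and would come from your own use case.

```python
# Hypothetical relevance rules: event type -> fields whose change matters.
RELEVANT_CHANGES = {
    "contact.updated": {"lifecycle_stage", "last_message"},
    "order.updated": {"status"},
}

def should_call_llm(event: dict) -> bool:
    """Return True only if a field we care about actually changed."""
    watched = RELEVANT_CHANGES.get(event.get("type", ""))
    if not watched:
        return False
    changed = set(event.get("changed_fields", []))
    return bool(watched & changed)

print(should_call_llm({"type": "contact.updated", "changed_fields": ["last_message"]}))  # True
print(should_call_llm({"type": "contact.updated", "changed_fields": ["updated_at"]}))    # False
print(should_call_llm({"type": "user.login"}))                                          # False
```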

Kafka, Event Streams, and LLM Integration Patterns

Apache Kafka is the standard for high-throughput event streaming. Events go into topics. LLM workers subscribe as consumer groups and process at their own pace. Kafka also gives you replay: if your pipeline fails at 2 am, consumers resume from their last committed offset, and you can rewind further as long as the events are still within the topic's retention period.
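Replay works because consumers track a committed offset into an append-only log. This stdlib simulation (not the Kafka client API) shows the mechanics: the consumer commits after each processed record, so after a crash it resumes where it left off instead of losing or skipping events. Real Kafka consumers often commit periodically or per batch, so records handled after the last commit can be delivered again on restart, which is one more reason for idempotent workers.

```python
from typing import Callable, Optional

# Append-only "partition": each record has a monotonically increasing offset.
log = [{"offset": i, "id": f"evt_{i}"} for i in range(6)]

class OffsetConsumer:
    """Simulated consumer that commits its position after each processed record."""

    def __init__(self) -> None:
        self.committed = 0  # next offset to read (Kafka stores this per group)

    def run(self, handle: Callable[[dict], None], crash_at: Optional[int] = None) -> list:
        processed = []
        for record in log[self.committed:]:
            if record["offset"] == crash_at:
                break  # simulate the worker dying before handling this record
            handle(record)
            processed.append(record["id"])
            self.committed = record["offset"] + 1  # commit only after success
        return processed

consumer = OffsetConsumer()
first_run = consumer.run(lambda r: None, crash_at=3)
after_restart = consumer.run(lambda r: None)  # resumes at the committed offset
print(first_run, after_restart)
```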

Server-sent events (SSE) solve a different problem: streaming LLM output back to a client in real time over a plain HTTP response, useful when you want responses to appear token by token.
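The SSE wire format is simple: each message is one or more "data:" lines followed by a blank line. A sketch that turns a token stream into SSE frames; the token list stands in for a real streaming LLM response, and the [DONE] sentinel is a convention borrowed from OpenAI-style streams, not part of the SSE spec.

```python
from typing import Iterable, Iterator

def sse_frames(tokens: Iterable[str]) -> Iterator[str]:
    """Wrap each token as an SSE event: 'data: <payload>' plus a blank line."""
    for token in tokens:
        # A newline inside the payload must become multiple data: lines.
        lines = token.split("\n")
        yield "".join(f"data: {line}\n" for line in lines) + "\n"
    yield "data: [DONE]\n\n"  # end-of-stream sentinel (a convention, not the spec)

frames = list(sse_frames(["Hel", "lo", "!"]))
print(repr(frames[0]))  # 'data: Hel\n\n'
```

In Flask, returning Response(sse_frames(tokens), mimetype="text/event-stream") streams these frames to the browser as they are produced.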

Setting Up Event Triggers in PostgreSQL for AI Pipelines

For row-level changes, use pg_notify to push events directly to a listening worker:

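```sql
CREATE OR REPLACE FUNCTION notify_ai_pipeline()
RETURNS trigger AS $$
BEGIN
    -- NOTIFY payloads must be shorter than 8000 bytes by default;
    -- for wide rows, send the primary key and re-read the row in the worker.
    PERFORM pg_notify(
        'ai_pipeline_events',
        json_build_object(
            'table', TG_TABLE_NAME,
            'action', TG_OP,
            'record', row_to_json(NEW)
        )::text
    );
    RETURN NEW;
END;
$$ LANGUAGE plpgsql;

-- EXECUTE FUNCTION needs PostgreSQL 11+ (older versions use EXECUTE PROCEDURE).
CREATE TRIGGER orders_ai_trigger
AFTER INSERT OR UPDATE ON orders
FOR EACH ROW EXECUTE FUNCTION notify_ai_pipeline();
```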

Your Python worker listens on ai_pipeline_events and routes to the LLM when relevant rows change.
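A sketch of that listener using psycopg2's notification API (LISTEN, then conn.poll() and conn.notifies). The relevance rule in route_notification and the print standing in for your queue hand-off are hypothetical; psycopg2 is imported inside listen() so the routing logic can run without a database driver installed.

```python
import json
import select
from typing import Optional

def route_notification(payload: str) -> Optional[dict]:
    """Turn a pg_notify payload into an LLM job, or None if it isn't relevant."""
    event = json.loads(payload)
    record = event.get("record") or {}
    if event.get("table") != "orders":
        return None
    # Hypothetical rule: every new order, but only updates that cancel an order.
    if event.get("action") == "INSERT" or record.get("status") == "cancelled":
        return {"order_id": record.get("id"), "status": record.get("status")}
    return None

def listen(dsn: str) -> None:
    """Block forever, forwarding relevant notifications (requires psycopg2)."""
    import psycopg2
    import psycopg2.extensions

    conn = psycopg2.connect(dsn)
    conn.set_isolation_level(psycopg2.extensions.ISOLATION_LEVEL_AUTOCOMMIT)
    with conn.cursor() as cur:
        cur.execute("LISTEN ai_pipeline_events;")
    while True:
        # Wait up to 5 s for the connection's socket to become readable.
        if select.select([conn], [], [], 5) == ([], [], []):
            continue
        conn.poll()
        while conn.notifies:
            notify = conn.notifies.pop(0)
            job = route_notification(notify.payload)
            if job is not None:
                print("enqueue for LLM:", job)  # replace with your queue hand-off
```

Call listen() from the worker process with your own DSN, for example listen("dbname=app user=worker") as a placeholder.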

When Should You Use EventStore DB Instead of a Traditional Database?

EventStore DB (event sourcing database) stores every change as an immutable event. For LLM pipelines that need to reason over history - "why did this customer churn?" - having the full event stream as context beats querying a current-state database. CQRS pairs well here: commands write events, queries read projections - and your LLM sits on the query side, pulling event context to answer questions a current-state snapshot can't.
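To make that concrete: with an event-sourced store, the LLM's context can be the customer's full event stream rendered as a timeline, while a projection folds the same events into current state. A sketch over an in-memory list; the event types and fields are hypothetical, and reading a real stream from EventStoreDB would go through its client library.

```python
events = [  # hypothetical stream for one customer, oldest first
    {"type": "SubscriptionStarted", "at": "2024-01-03", "plan": "pro"},
    {"type": "SupportTicketOpened", "at": "2024-02-11", "topic": "slow exports"},
    {"type": "PlanDowngraded", "at": "2024-03-01", "plan": "basic"},
    {"type": "SubscriptionCancelled", "at": "2024-03-20", "reason": "too slow"},
]

def project_current_state(stream: list[dict]) -> dict:
    """Query-side projection: fold events into a current-state snapshot."""
    state: dict = {"plan": None, "active": False}
    for e in stream:
        if e["type"] == "SubscriptionStarted":
            state.update(plan=e["plan"], active=True)
        elif e["type"] == "PlanDowngraded":
            state["plan"] = e["plan"]
        elif e["type"] == "SubscriptionCancelled":
            state["active"] = False
    return state

def history_as_context(stream: list[dict]) -> str:
    """Render the full stream as a timeline the LLM can reason over."""
    lines = []
    for e in stream:
        details = ", ".join(f"{k}={v}" for k, v in e.items() if k not in ("type", "at"))
        lines.append(f"{e['at']} {e['type']} ({details})")
    return "\n".join(lines)

prompt = "Why did this customer churn?\n" + history_as_context(events)
print(project_current_state(events))  # {'plan': 'basic', 'active': False}
```

The snapshot alone has no trace of the "slow exports" ticket; the timeline in the prompt does, which is exactly the context a churn question needs.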

Implementation - Production-Grade Webhook + LLM Workflows

The architecture is the easy part. Production reliability is where most teams underestimate the work. Retries, idempotency, failure modes, scaling - these aren't optional. They're what separates a demo from a system that runs at 3 am without you.

Common Mistakes When Integrating Webhooks with LLM Systems

- The mistake I see most often: calling the LLM synchronously inside the webhook handler. Your handler has to return before the sender's timeout (often 10 seconds or less), or the sender retries. GPT-4o can take 8–15 seconds on a long prompt. The fix is always the same - enqueue, return 200, process async.

- The second one that causes real pain is missing idempotency checks. Webhook senders retry on any non-2xx or timeout. Without idempotency, that retry fires the LLM again - sends a second email, creates a duplicate record. Store the event ID, check before processing, and skip if already handled.

- A few others worth watching:
  - No signature validation means anyone can POST garbage to your endpoint.
  - No dead letter queue means failed events vanish with no trace.
  - No rate limiting on LLM calls turns a traffic spike into a billing emergency.
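For the rate-limiting point, a token bucket in front of the LLM call lets short bursts through while capping the sustained rate. A single-process sketch; the rate and capacity are placeholders you would set below your provider's limits, and a fleet of workers would need a shared limiter (for example, one backed by Redis) instead.

```python
import threading
import time

class TokenBucket:
    """Allow up to `capacity` calls in a burst, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: int) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.updated = time.monotonic()
        self.lock = threading.Lock()

    def try_acquire(self) -> bool:
        with self.lock:
            now = time.monotonic()
            # Refill in proportion to elapsed time, never above capacity.
            self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
            self.updated = now
            if self.tokens >= 1:
                self.tokens -= 1
                return True
            return False

bucket = TokenBucket(rate=5, capacity=3)  # ~5 LLM calls/s, bursts of 3
burst = [bucket.try_acquire() for _ in range(5)]
print(burst)  # [True, True, True, False, False]
```

When try_acquire() returns False, requeue the job with a short delay rather than dropping it.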

Workflow Builders vs Custom Pipelines: Trade-offs for AI Automation

A workflow builder like n8n is the right call for connecting Slack to GPT-4 with five nodes. For a system processing 50,000 webhook events per day with custom routing, you want a custom pipeline. Use AI automation tools to move fast, then migrate critical paths to code when you hit the limits.

Step-by-Step: Building a Webhook → LLM → Action Pipeline

- Register your webhook URL with the source system (Stripe, GitHub, HubSpot, etc.).

- Build the endpoint - validate the signature, enqueue the payload, return 200.

- Set up a queue - Redis + Celery, SQS, or RabbitMQ.

- Write the worker - dequeue, build the prompt, call the LLM through its API.

- Define the action layer - email, DB write, Slack message.

- Add idempotency - store processed event IDs, skip duplicates.

- Add a dead letter queue - failed events go here for replay.

- Monitor - log every event, every LLM call, every action.

Most tutorials stop before the last three steps (idempotency, the dead letter queue, and monitoring). Don't skip them.

Handling Failures, Retries, and Idempotency in Webhook Systems

Without idempotency, retries cause duplicate processing. Fix it with a simple Redis check:

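```python
import redis
from celery import Celery

celery_app = Celery("tasks", broker="redis://localhost:6379/0")
r = redis.Redis()  # stores idempotency keys


@celery_app.task(bind=True, max_retries=3)
def process_with_llm(self, event_data):
    event_id = event_data.get("id")
    # Skip events we've already handled (senders retry on timeouts and non-2xx).
    # Note: get-then-set isn't atomic; use SET NX if duplicates can arrive concurrently.
    if r.get(f"processed:{event_id}"):
        return
    try:
        result = call_llm(event_data)  # your LLM client call (placeholder)
        execute_action(result)         # email, DB write, Slack message (placeholder)
        # Mark as processed for 24 hours, only after the action succeeded.
        r.setex(f"processed:{event_id}", 86400, "1")
    except Exception as exc:
        # Exponential backoff between attempts: 2s, 4s, 8s.
        raise self.retry(exc=exc, countdown=2 ** (self.request.retries + 1))
```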

Exponential backoff (2s, 4s, 8s) prevents retry storms from hammering your LLM provider.
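Celery schedules the retries; what happens after the last one is up to you. A framework-free sketch of the whole policy, assuming a plain list stands in for the dead letter queue and sleep is injectable so the demo runs instantly: back off 2s, 4s, 8s, then park the event with its error for later replay instead of letting it vanish.

```python
import time
from typing import Callable

dead_letters: list[dict] = []  # stand-in for an SQS DLQ, a Redis list, or a table

def process_with_retries(
    event: dict,
    handler: Callable[[dict], None],
    max_retries: int = 3,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    for attempt in range(max_retries + 1):
        try:
            handler(event)
            return True
        except Exception as exc:
            if attempt == max_retries:
                # Out of retries: keep the event and the error for replay.
                dead_letters.append({"event": event, "error": repr(exc)})
                return False
            sleep(2 ** (attempt + 1))  # 2s, 4s, 8s
    return False

waits: list[float] = []

def always_fails(event: dict) -> None:
    raise TimeoutError("LLM provider timed out")

ok = process_with_retries({"id": "evt_9"}, always_fails, sleep=waits.append)
print(ok, waits, dead_letters[0]["error"])
```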

What Causes Performance Bottlenecks in AI Pipelines and How Do You Fix Them?

The cheapest optimization is prompt trimming. Webhook payloads usually carry far more fields than your LLM needs. Strip them before the model call; it cuts cost and latency at the same time.
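A minimal sketch of that trimming step: keep an explicit allowlist of dotted field paths and drop everything else before building the prompt. The paths follow Stripe's invoice event shape as an example only; choose your own per event type, and watch for name collisions, since kept fields are flattened to their last path segment.

```python
import json

# Example allowlist for a Stripe-style invoice event (dotted paths).
KEEP = ["type", "data.object.customer_email", "data.object.amount_due",
        "data.object.currency", "data.object.attempt_count"]

def pluck(payload: dict, path: str):
    """Follow a dotted path; return None if any segment is missing."""
    node = payload
    for key in path.split("."):
        if not isinstance(node, dict) or key not in node:
            return None
        node = node[key]
    return node

def trim_payload(payload: dict, keep: list[str] = KEEP) -> dict:
    """Keep only allowlisted fields, flattened to their last path segment."""
    out = {}
    for path in keep:
        value = pluck(payload, path)
        if value is not None:
            out[path.rsplit(".", 1)[-1]] = value
    return out

raw = {
    "id": "evt_1", "type": "invoice.payment_failed", "livemode": False,
    "data": {"object": {"customer_email": "a@example.com", "amount_due": 2000,
                        "currency": "usd", "attempt_count": 2, "lines": {"data": ["..."]}}},
}
trimmed = trim_payload(raw)
print(len(json.dumps(raw)), "->", len(json.dumps(trimmed)), "chars")
```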

Conclusion - Putting It Together: Webhooks + LLMs in Production

The pipeline itself isn't complex. Endpoint, queue, worker, action - most teams have this running in a day or two. What takes longer is getting the boring parts right. Idempotency and dead letter queues are non-negotiable: retries will happen, and failures need a trace. Signature validation and monitoring close the loop: one keeps random traffic from burning your LLM budget, the other tells you something broke at 3 am before a customer does.

That's the actual work. The AI part is almost the easy part once the infrastructure is solid.

If you're building event-driven AI pipelines and want the infrastructure done properly from the start, bnxt.ai works with engineering teams on webhook-to-LLM pipelines specifically, from endpoint design through to production observability.

People Also Ask

1. How much does it cost to run a webhook-triggered LLM pipeline in production?

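LLM APIs bill input and output tokens separately, and list prices change often, so check your provider's current pricing page. As an illustration, at GPT-4o's list price at the time of writing ($10 per 1M output tokens), 10,000 events/day with 500-token responses is 5M output tokens, or about $50/day before input tokens. Smaller models such as GPT-4o mini, Claude Haiku, and Gemini Flash cost a fraction of that. Infrastructure (queue + workers) adds $20–50/month. Costs stay predictable once you trim prompts to essential fields only.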
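The estimate is simple arithmetic worth keeping in a script and rerunning when prices or volumes change. A sketch; the per-million-token prices are placeholders to fill in from your provider's current pricing page, and the 800-token prompt size is an assumption to adjust for your own trimmed prompts.

```python
def daily_llm_cost(events_per_day: int, input_tokens: int, output_tokens: int,
                   usd_per_1m_input: float, usd_per_1m_output: float) -> float:
    """Estimated daily spend in USD, counting input and output tokens separately."""
    input_cost = events_per_day * input_tokens * usd_per_1m_input / 1_000_000
    output_cost = events_per_day * output_tokens * usd_per_1m_output / 1_000_000
    return round(input_cost + output_cost, 2)

# 10,000 events/day, 500-token responses, ~800-token prompts (assumed).
# Prices are placeholders: substitute current list prices for your model.
print(daily_llm_cost(10_000, 800, 500, usd_per_1m_input=2.50, usd_per_1m_output=10.00))  # 70.0
```

Running the same numbers with a smaller model's prices is the quickest way to see what the tiered approach saves.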

2. Can webhooks handle high-frequency events without dropping data or causing LLM overload?

The endpoint is rarely the bottleneck; it just needs to return fast and hand off to a queue. Where things actually break under load is the LLM layer: provider rate limits (at the time of writing, OpenAI's Tier 1 limit for GPT-4o is 500 RPM, lower than most teams building event pipelines expect) and worker saturation if you haven't scaled horizontally. The queue absorbs bursts, a token bucket rate limiter on the worker keeps you under provider limits, and a dead letter queue catches events that still fail after retries.

3. Do I need a dedicated server to receive webhooks, or can I use serverless functions?

Serverless (AWS Lambda, Cloudflare Workers) works well for webhook endpoints - fast, auto-scaling, cheap. The catch: going serverless doesn't change the sender's timeout, and the platform adds its own limits (API Gateway, a common front door for Lambda, times out integrations at 29 seconds by default), so synchronous LLM calls are still a bad fit. Use serverless for receipt, persistent workers for LLM processing.

4. How do I secure webhook endpoints from unauthorized or spoofed requests?

Three layers: validate HMAC-SHA256 signatures on every request, whitelist source IPs where your provider publishes them (Stripe and GitHub both do), and use HTTPS only. Add rate limiting to prevent brute-force guessing. Reject invalid requests with 401, not 200.
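The IP check takes a few lines with Python's ipaddress module. A sketch; the networks below are reserved documentation ranges used as placeholders, so load your provider's published list instead (and refresh it, since providers change it). Behind a load balancer, the client IP comes from a forwarded header your proxy sets, which is an assumption about your deployment.

```python
import ipaddress

# Placeholders (RFC 5737 / RFC 3849 documentation ranges), not real provider IPs.
ALLOWED_NETWORKS = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
    ipaddress.ip_network("2001:db8::/32"),
]

def is_allowed_source(remote_addr: str) -> bool:
    """True if the sender's IP falls inside any allowlisted network."""
    try:
        addr = ipaddress.ip_address(remote_addr)
    except ValueError:
        return False  # malformed address: reject
    # An IPv4 address is never "in" an IPv6 network (and vice versa).
    return any(addr in net for net in ALLOWED_NETWORKS)
```

In the Flask handler, checking is_allowed_source(request.remote_addr) before computing the HMAC rejects obviously foreign traffic cheaply.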

5. Which LLM works best for low-latency webhook-triggered responses - GPT-4, Claude, or Gemini?

GPT-4o mini and Claude Haiku return short answers fastest, often in under a second. GPT-4o and Claude Sonnet handle complex tasks better. Most production systems use a tiered approach - a fast model for classification, a stronger model for tasks that actually need it. Don't route every event through GPT-4.
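A sketch of that tiered approach: a cheap model classifies every event, and only categories that need deeper reasoning escalate to a stronger model. The model names are plain strings, the escalation categories are hypothetical, and fake_model is a stub standing in for your real client so the routing logic runs on its own.

```python
from typing import Callable

FAST_MODEL = "gpt-4o-mini"             # cheap classifier tier
STRONG_MODEL = "gpt-4o"                # reserved for work that needs it
ESCALATE = {"complaint", "churn_risk"}  # hypothetical categories

def handle_event(text: str, call_model: Callable[[str, str], str]) -> tuple[str, str]:
    """Classify with the fast model; escalate only the categories that need it."""
    category = call_model(FAST_MODEL, f"Classify in one word: {text}").strip().lower()
    model = STRONG_MODEL if category in ESCALATE else FAST_MODEL
    reply = call_model(model, f"Category: {category}. Respond to: {text}")
    return model, reply

def fake_model(model: str, prompt: str) -> str:
    """Stub: treats anything mentioning 'cancel' as churn risk."""
    if prompt.startswith("Classify"):
        return "churn_risk" if "cancel" in prompt else "question"
    return f"{model} reply"

print(handle_event("How do I cancel?", fake_model))      # ('gpt-4o', 'gpt-4o reply')
print(handle_event("Where is my invoice?", fake_model))  # ('gpt-4o-mini', 'gpt-4o-mini reply')
```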
