Generative AI and large language models have changed how developers build software. Tasks that once required hours of effort can now be completed in minutes. But speed alone isn’t enough. A major challenge remains: making sure AI responses are accurate and grounded in real, reliable information rather than in learned patterns alone.

That’s where Retrieval-Augmented Generation (RAG) comes in. Instead of depending only on what the model was trained on, RAG pulls relevant data from trusted sources like internal documents and knowledge bases before generating a response. The result is smarter, more reliable, and context-aware output you can actually trust.

RAG relies on embedding models, which turn text and code into vector embeddings, and on vector databases, which store those embeddings for similarity search. Together they give code generation the context it needs, so AI-powered coding assistants and enterprise-grade AI solutions produce output that better reflects real-world codebases.

For a deeper look at how intelligent automation is transforming enterprise workflows, read Mastering Agentic AI: How Intelligent Agents Are Redefining Workflows.

In this post, we explore how RAG works, the technologies behind it, its real-world applications, its advantages and disadvantages, and the future of context-aware code generation.

Foundations of Retrieval-Augmented Generation

Retrieval-Augmented Generation sits at the intersection of natural language processing, semantic search, and information retrieval. Rather than relying only on the knowledge encoded in an AI model, RAG adds a retrieval component that uses dense vector embeddings to find context that is semantically relevant to the query.

As a result, the generated answers are not only fluent but also grounded in real information, which matters for relevance and reliability in applications such as enterprise search, question answering, and code completion.

RAG Explained in Simple Terms

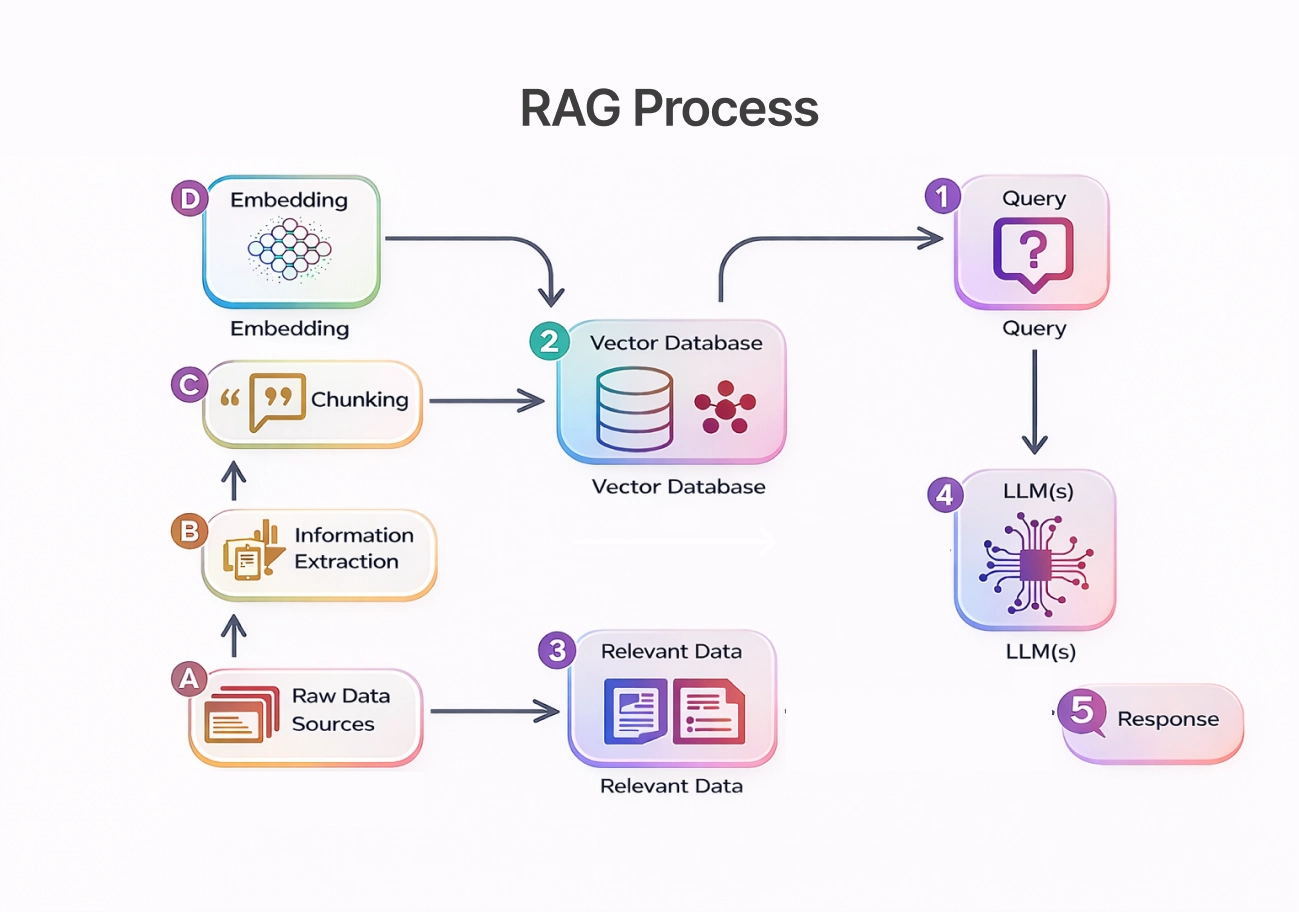

Think of RAG as a two-step intelligence process:

- Search first — The system performs semantic search across a vector index or document store to find relevant information.

- Generate next — A sequence-to-sequence generation model or text-to-text transformer relies on the context retrieved to generate the final answer.

This two-step flow lets the system turn retrieved knowledge into a relevant answer while preserving the meaning of the source material.
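
The two steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the `DOCS` store, its file names, and the bag-of-words `embed` function are hypothetical stand-ins for a real document store and embedding model, and the final call to a generation model is left out.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory document store; a real system would use a vector database.
DOCS = {
    "auth.md": "Use the create_session helper to log users in and issue a token.",
    "db.md": "All database access goes through the Repository class, never raw SQL.",
    "style.md": "Functions use snake_case names and must include type hints.",
}

def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (stand-in for an embedding model)."""
    return Counter(re.findall(r"[a-z_]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def search(query: str, k: int = 2) -> list[str]:
    """Step 1 (search first): rank documents by similarity and keep the top k names."""
    q = embed(query)
    ranked = sorted(DOCS, key=lambda name: cosine(q, embed(DOCS[name])), reverse=True)
    return ranked[:k]

def build_prompt(query: str, sources: list[str]) -> str:
    """Step 2 input (generate next): retrieved text is placed ahead of the question."""
    context = "\n".join(f"[{name}] {DOCS[name]}" for name in sources)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

question = "How should database access be written?"
sources = search(question)
prompt = build_prompt(question, sources)  # this string would be sent to the generator
```

Here the query shares the terms "database" and "access" with `db.md`, so that document ranks first and its text ends up in the prompt.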

Why Context Matters in AI-Generated Code

In real-world applications, the code has to be consistent with the internal docs, technical manuals, and regulatory requirements. Without context, AI can generate code that appears to be correct but is not consistent with the project architecture.

With RAG:

- AI can reference private data securely

- Outputs reflect project-specific knowledge structures

- Factual hallucinations are significantly reduced

- Developers gain higher confidence in generated solutions

This context-aware approach turns AI from a general assistant into a domain-specific collaborator.

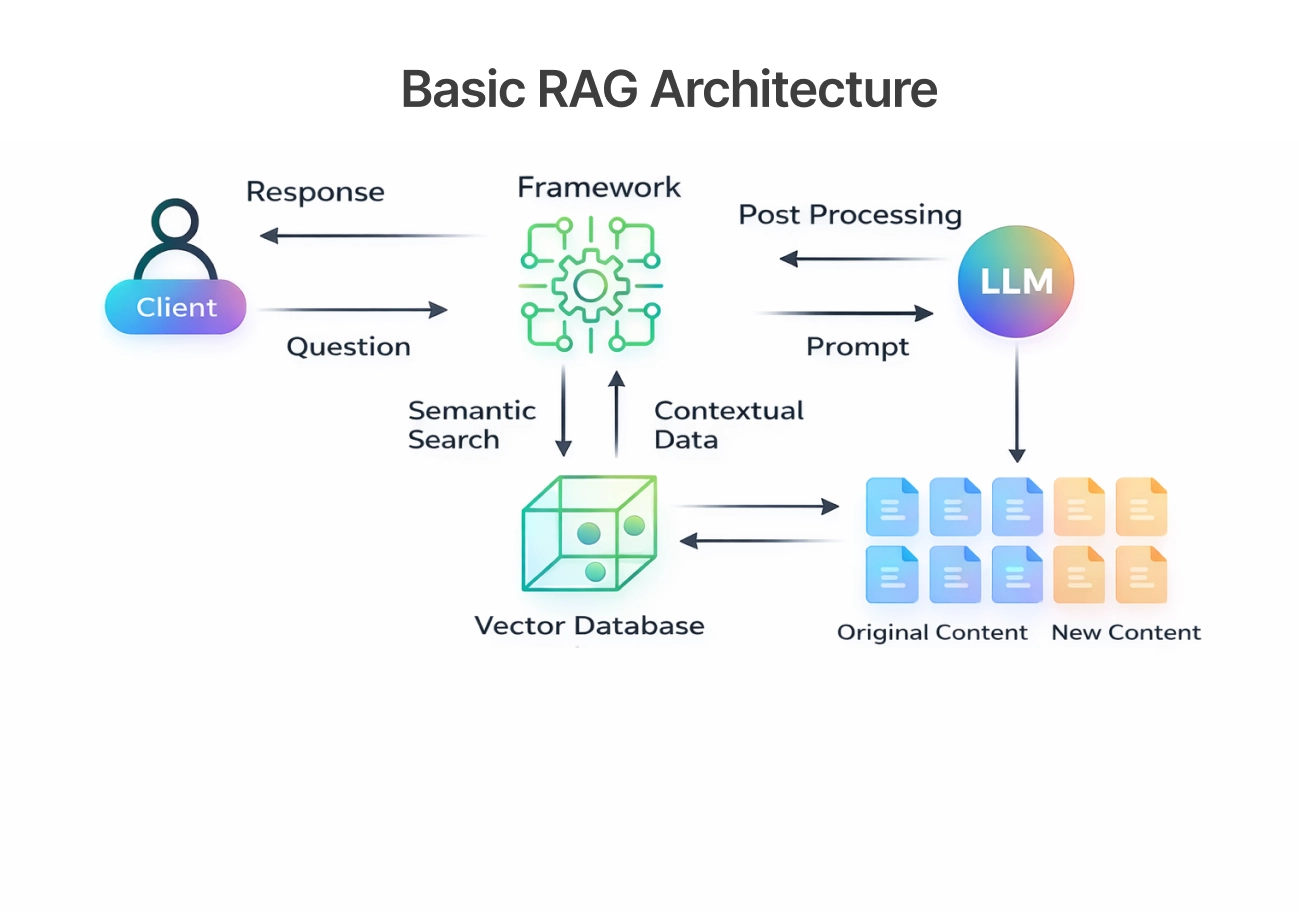

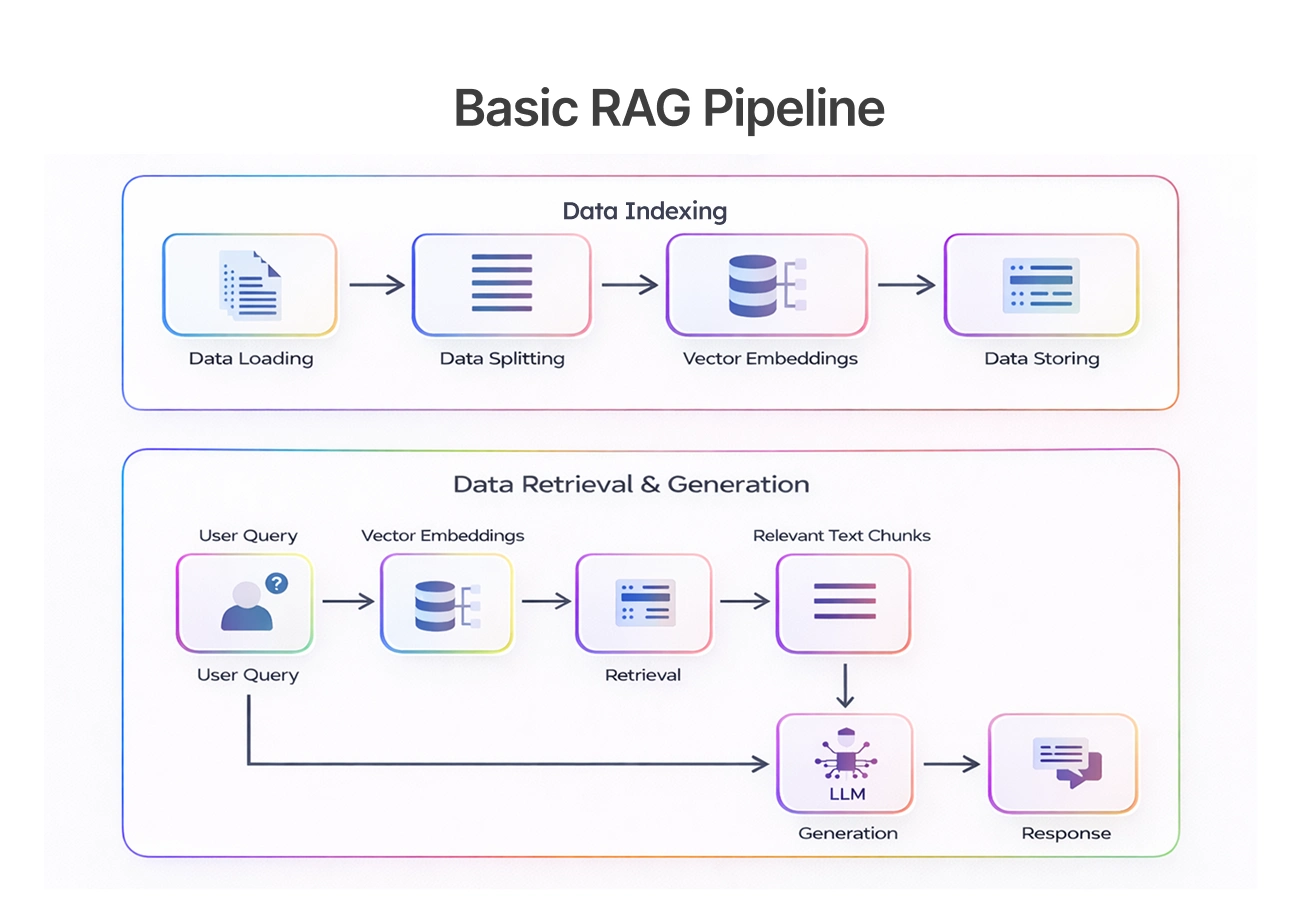

Workflow of a RAG Pipeline

A typical RAG pipeline includes multiple coordinated components:

- User Query processing using NLP

- Retriever module performing dense retrieval

- Searching a vector-based database or federated vector database management system

- Context aggregation using fusion strategies

- Response Generation via sequence-to-sequence generators

- Optional source attribution and validation

Advanced pipelines may also include multi-hop retrieval, cross-encoder transformers, and neural information retrieval to improve precision.
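
The context aggregation step can take many forms. One widely used fusion strategy is reciprocal rank fusion (RRF), which merges ranked lists from several retrievers. The sketch below assumes RRF purely for illustration, since the pipeline above does not prescribe a specific method; the document IDs and the two input rankings are hypothetical.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists: each document scores the sum of 1 / (k + rank) over all lists."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)

# Hypothetical outputs from two retrievers for the same query.
dense_hits = ["db.md", "auth.md", "style.md"]
keyword_hits = ["style.md", "db.md", "deploy.md"]

fused = reciprocal_rank_fusion([dense_hits, keyword_hits])
```

Documents that rank well in both lists rise to the top, so `db.md` and `style.md` come ahead of documents that appear in only one list.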

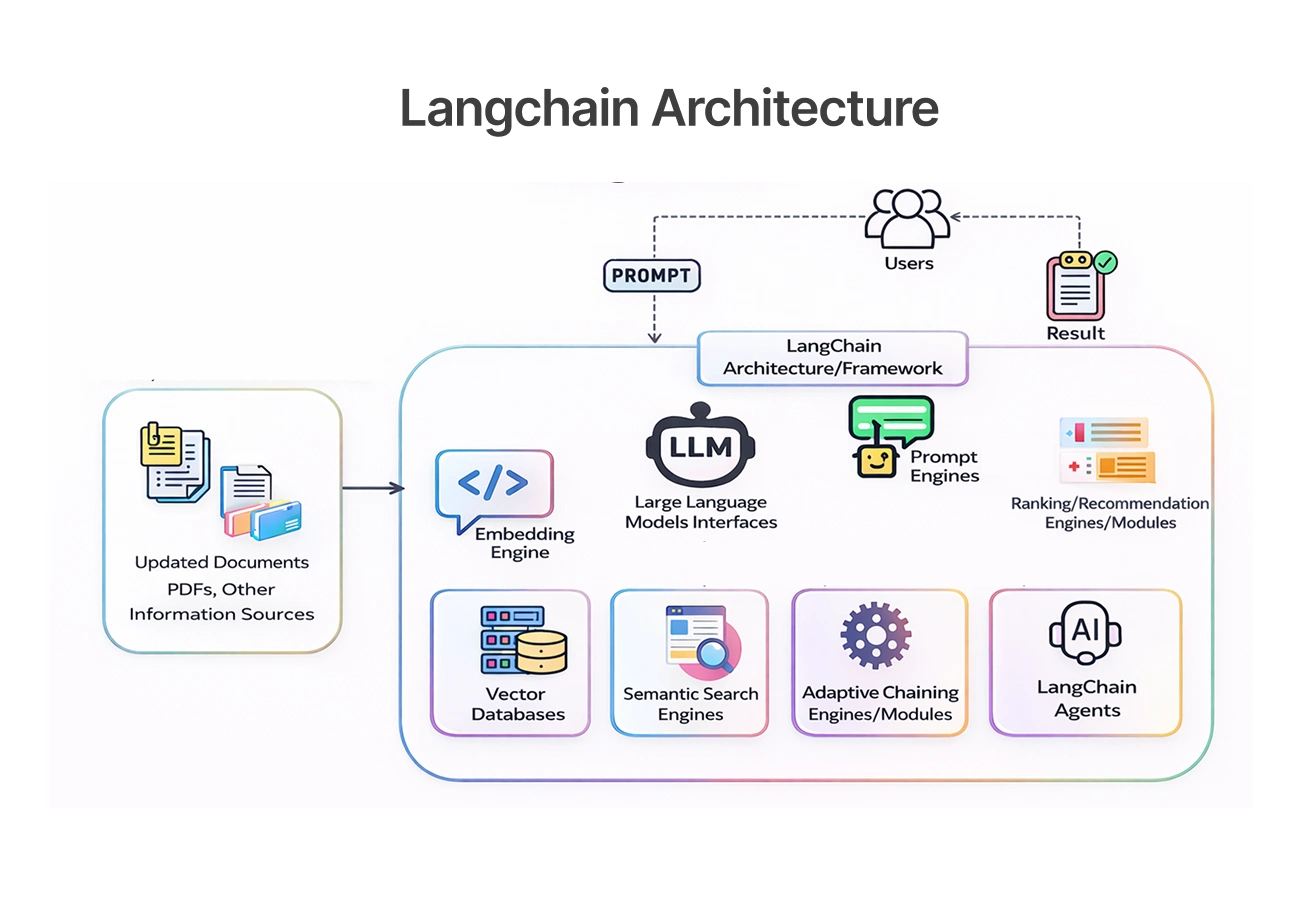

LangChain as the Backbone of RAG Applications

Libraries such as LangChain act as the glue that connects the retrieval components, the prompts, and the generation models into a single pipeline. This lets developers focus on building features rather than on managing the pipeline.

Because it integrates with vector databases, knowledge graphs, and neural retrievers, LangChain has become a common choice for teams building scalable enterprise AI solutions.

See how AI technologies are being applied in real-world software solutions in AI in Mobile App Development: Examples and Benefits.

Introduction to the LangChain Ecosystem

LangChain supports:

- Document ingestion from Word docs, Notion documents, and internal documents

- Creation of semantic memory using embeddings

- Integration with vector indexes

- Agents that perform multi-hop retrieval

This ecosystem allows teams to build sophisticated interactive AI solutions quickly.

Practical Use Cases of LangChain in Code Generation

Common use cases include:

- AI coding assistants integrated into IDEs

- Automated testing pipelines

- Developer knowledge search tools

- Documentation summarization systems

These applications demonstrate how RAG improves productivity while maintaining accuracy.

Connecting LangChain with Conversational AI

When combined with conversational interfaces, LangChain enables persistent context through semantic chunking and memory modules.

This improves retrieval quality, allowing developers to ask follow-up questions, refine outputs, and collaborate with AI in a natural workflow.
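
The idea behind a memory module can be shown without any framework. The sketch below is a plain-Python illustration, not LangChain's API: the `ConversationMemory` class and its methods are hypothetical names. It keeps the most recent turns and prepends earlier user questions to a follow-up, so the retriever sees what "it" refers to.

```python
from collections import deque

class ConversationMemory:
    """Hypothetical memory buffer that keeps only the most recent turns."""

    def __init__(self, max_turns: int = 3) -> None:
        # deque with maxlen silently drops the oldest turn when full.
        self.turns: deque[tuple[str, str]] = deque(maxlen=max_turns)

    def add(self, user: str, assistant: str) -> None:
        self.turns.append((user, assistant))

    def contextualize(self, question: str) -> str:
        """Prefix the new question with earlier user questions for retrieval."""
        history = " ".join(user for user, _ in self.turns)
        return f"{history} {question}".strip()

memory = ConversationMemory(max_turns=2)
memory.add("How do I open a database connection?", "Use the Repository class.")
retrieval_query = memory.contextualize("And how do I close it?")
```

Real memory modules often summarize or embed past turns rather than concatenating them; concatenation is used here only because it is the simplest form to read.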

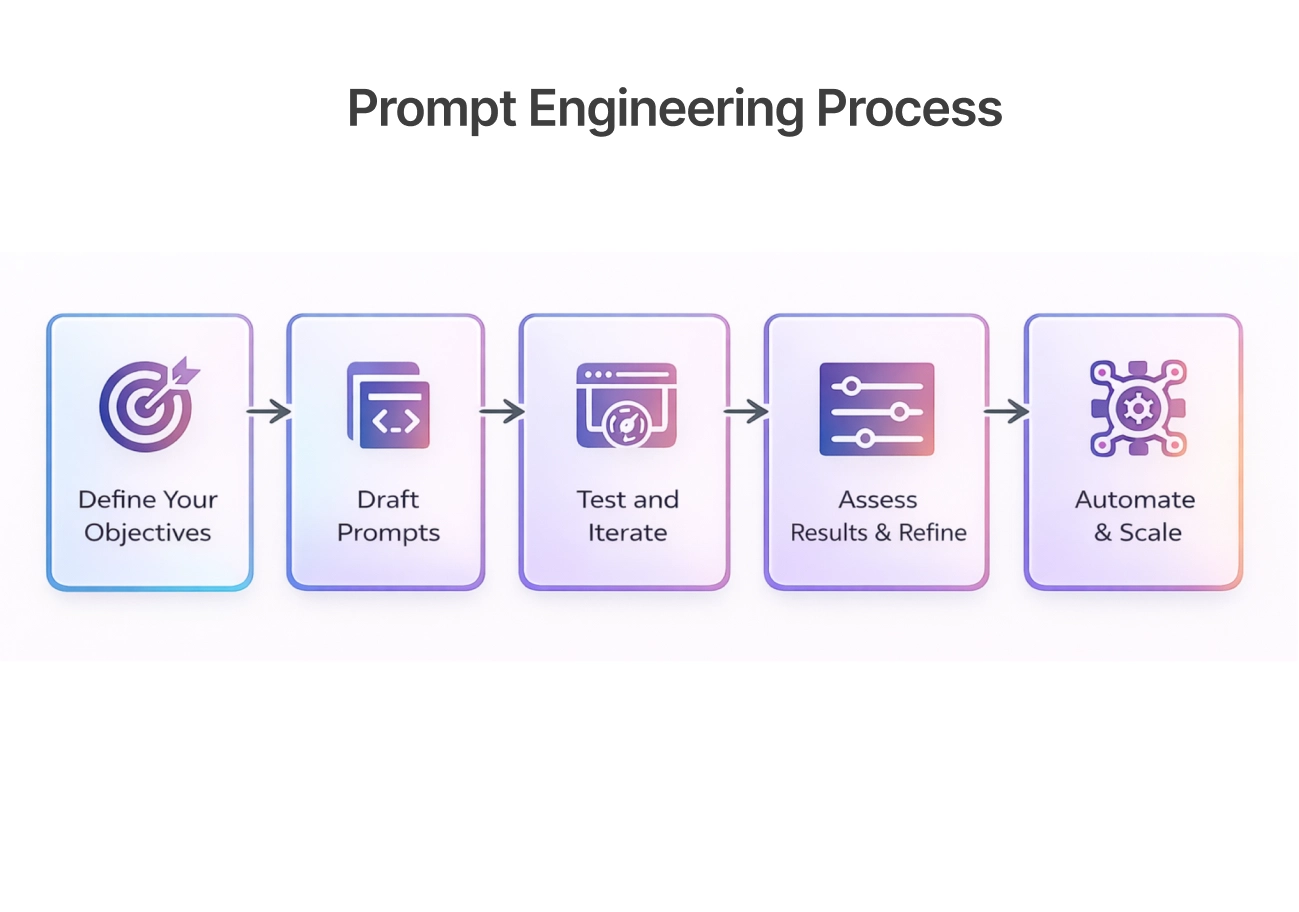

Prompt Engineering as a Critical Layer

Even with advanced retrieval systems, prompt engineering remains essential for achieving high-quality results. Prompts determine how retrieved context is interpreted and how the model structures its response.

Effective prompts keep the model’s use of retrieved context, and the way it expresses that knowledge, aligned with the user’s intent.

Role of Prompts in RAG Systems

Prompts act as the instruction layer that defines:

- How retrieved knowledge should be used

- Expected output format

- Tone and level of detail

They bridge the gap between raw retrieval and meaningful output.
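
A prompt template makes these three responsibilities explicit. The example below is a sketch using Python's standard `string.Template`; the wording of the instructions, the `render_prompt` helper, and its default values are assumptions chosen for illustration.

```python
from string import Template

# Each placeholder maps to one job of the instruction layer.
RAG_PROMPT = Template(
    "You are a coding assistant.\n"
    "Use ONLY the context below; if it is insufficient, say so.\n"  # how knowledge is used
    "Output format: $output_format\n"                               # expected output format
    "Tone and detail: $tone\n\n"                                    # tone and level of detail
    "Context:\n$context\n\n"
    "Question: $question"
)

def render_prompt(
    question: str,
    chunks: list[str],
    output_format: str = "a single Python code block",
    tone: str = "concise, no preamble",
) -> str:
    """Fill the template with retrieved chunks and the user's question."""
    context = "\n".join(f"- {chunk}" for chunk in chunks)
    return RAG_PROMPT.substitute(
        output_format=output_format, tone=tone, context=context, question=question
    )

prompt = render_prompt(
    "How do I log a user in?",
    ["Use create_session to issue a token."],
)
```

Keeping the template separate from the code that fills it makes it easier to review and change instructions without touching retrieval logic.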

Practical Techniques for Better Prompts

Proven techniques include:

- Few-shot prompting

- Step-by-step reasoning instructions

- Structured output schemas

- Constraints to reduce hallucinations

These methods improve consistency and reliability across tasks.
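
Two of these techniques, structured output schemas and constraints, can be enforced in code after the model responds. The sketch below assumes the model was asked to reply with a JSON object; the field names `code` and `sources` are hypothetical, and the check uses only the standard `json` module rather than a schema library.

```python
import json

# Hypothetical schema: field name -> required Python type.
REQUIRED_FIELDS = {"code": str, "sources": list}

def parse_answer(raw: str) -> dict:
    """Parse a model reply and reject it if it breaks the schema or cites nothing."""
    data = json.loads(raw)  # raises ValueError (JSONDecodeError) on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("answer must be a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            raise ValueError(f"missing or invalid field: {field}")
    if not data["sources"]:
        # Constraint: an answer with no sources is treated as ungrounded.
        raise ValueError("answer cites no sources")
    return data

answer = parse_answer('{"code": "repo.save(user)", "sources": ["db.md"]}')
```

A rejected reply can then be retried or escalated instead of being shown to the developer as if it were grounded.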

Learning Pathways for Prompt Engineering

To build expertise, developers should:

- Experiment with prompt variations

- Use evaluation metrics

- Study model responses

- Continuously refine templates

Prompt engineering is quickly becoming a core skill in AI-driven development.

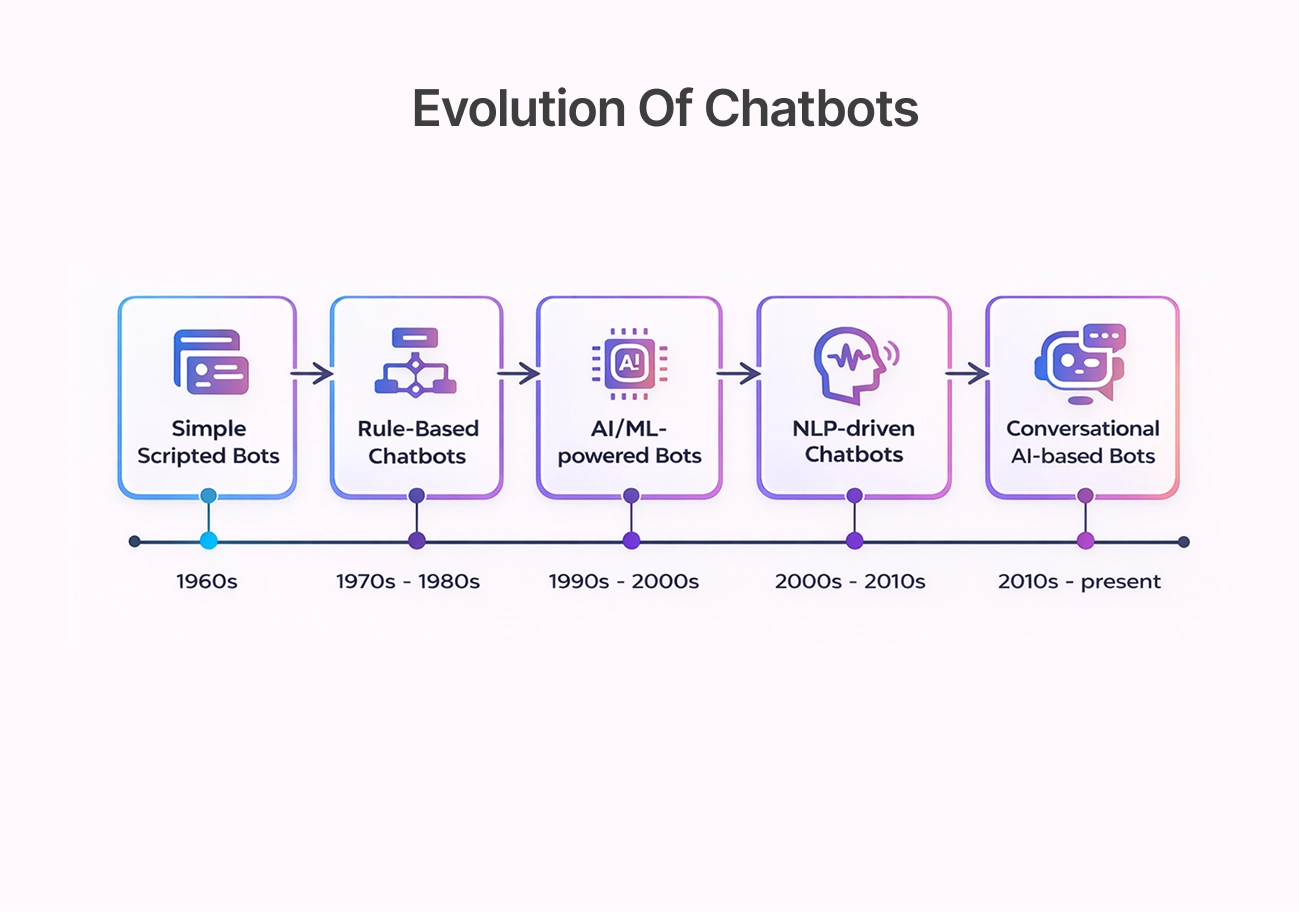

Chatbots as a Major Application of RAG

RAG has greatly enhanced customer support and enterprise search by allowing chatbots to search customer support logs, legal texts, and technical manuals in real time.

These systems combine semantic understanding with retrieval modules to give accurate responses, which reduces the need for manual support.

Evolution of Chatbots in Development

Chatbots have evolved through multiple stages:

- Rule-based bots

- NLP-driven assistants

- LLM-powered conversational agents

- RAG-enabled intelligent assistants

Modern systems leverage latent retrieval models and neural retrievers for deeper contextual understanding.

Building Intelligent Chatbots with RAG

A typical architecture includes:

- Data ingestion and semantic chunking

- Creation of dense vector embeddings

- Retrieval using models like Dense Passage Retriever (DPR)

- Generation using machine reader models

This pipeline ensures accurate and context-aware interactions.
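
The first stage of this architecture, chunking, can be approximated as follows. This is a simplified sketch: it groups whole sentences up to a size limit, which is a common baseline, whereas true semantic chunking would also compare the meaning of neighboring sentences. The `chunk_sentences` function and its `max_chars` default are assumptions.

```python
import re

def chunk_sentences(text: str, max_chars: int = 120) -> list[str]:
    """Group whole sentences into chunks of at most max_chars characters.

    A single sentence longer than max_chars is kept intact as its own chunk.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks: list[str] = []
    current = ""
    for sentence in sentences:
        # Start a new chunk if adding this sentence (plus a space) would overflow.
        if current and len(current) + 1 + len(sentence) > max_chars:
            chunks.append(current)
            current = sentence
        else:
            current = f"{current} {sentence}".strip()
    if current:
        chunks.append(current)
    return chunks

manual = (
    "Restart the service after changing the config. "
    "Logs are written to the data volume. "
    "Rotate credentials every ninety days."
)
chunks = chunk_sentences(manual, max_chars=90)
```

Each resulting chunk would then be embedded and stored, so that retrieval returns complete sentences instead of text cut off mid-thought.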

Industry Adoption and Innovation

RAG chatbots are widely used for:

- IT help desks

- Customer service bots

- Clinical trial screening

- Knowledge assistants

They enable faster decision-making and improved user experience.

Generative AI Tools and the Expanding Ecosystem

The RAG ecosystem is supported by a growing set of tools such as Lucidworks AI, NVIDIA Nemotron, NVIDIA NeMo Retriever, NVIDIA NeMo Curator, and NVIDIA cuVS. These tools help teams handle multimodal data, organize information using clustering, and build scalable retrieval pipelines for real-world applications.

Practical implementations of AI-powered systems and real-time intelligence can be explored in How to Master Real-Time Navigation with Google Maps AI.

As the RAG ecosystem matures, organizations have more opportunities to build domain-specific RAG solutions that are both more scalable and better suited to their business needs.

Benefits of Generative AI for Developers

Key benefits include:

- Faster development cycles

- Automated documentation

- Improved semantic search

- Enhanced collaboration

- Better debugging support

These advantages allow teams to focus on innovation rather than repetitive tasks.

Popular Tools and Platforms

Common components include:

- Vector databases

- Knowledge graphs

- Neural retrieval models

- AI coding assistants

Together, they form the backbone of modern AI development.

Fine-Tuning and Customization

Customization strategies include:

- Transfer learning

- Domain-specific RAG pipelines

- Privacy-preserving retrieval

- Security benchmarking

These approaches ensure AI systems align with organizational requirements.

Benefits and Challenges of Using RAG

Advantages of RAG for teams:

- Enhances accuracy, leading to more reliable results

- Reduces the risk of AI hallucinations

- Keeps outputs relevant to the given context

- Supports more effective and informed decision-making

Challenges organizations need to address:

- Maintaining high-quality, up-to-date data

- Managing privacy and security concerns

- Minimizing latency to ensure smooth performance

- Ensuring proper provenance and traceability

To get the most value from RAG, organizations must balance strong performance with reliability and governance.
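
Two of the challenges above, data freshness and provenance, become easier to manage when every retrieved chunk carries metadata. The sketch below shows one possible shape; the `Chunk` fields, the helper names, and the one-year freshness window are hypothetical choices.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class Chunk:
    """A retrieved passage with the metadata needed for traceability."""
    text: str
    source: str
    last_updated: date

def fresh_chunks(chunks: list[Chunk], today: date, max_age_days: int = 365) -> list[Chunk]:
    """Drop chunks whose source has not been updated within max_age_days."""
    return [c for c in chunks if (today - c.last_updated).days <= max_age_days]

def cite(chunks: list[Chunk]) -> str:
    """Build a provenance line that can be shown alongside the generated answer."""
    return "; ".join(f"{c.source} (updated {c.last_updated.isoformat()})" for c in chunks)

retrieved = [
    Chunk("Use the Repository class.", "db.md", date(2024, 5, 1)),
    Chunk("Call legacy_connect() directly.", "old-wiki.md", date(2019, 1, 15)),
]
usable = fresh_chunks(retrieved, today=date(2024, 6, 1))
citation = cite(usable)
```

Filtering before generation keeps stale guidance out of the prompt, and the citation string gives reviewers a direct path back to the source.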

Future Outlook for Context-Aware Code Generation

Future RAG systems will go well beyond text retrieval. The integration of neural hybrid search, multimodal data, and richer knowledge graphs should let these systems reason over information in ways that come closer to how people connect ideas.

At the same time, advances in multi-document readers, cross-encoder transformers, and more sophisticated retrieval models will help AI understand complex workflows, resulting in more intelligent and accurate recommendations.

As domain-specific RAG continues to advance, AI will better understand user intent, context, and project objectives, ultimately becoming more of a partner and less of a tool.

Conclusion

Retrieval-Augmented Generation is a huge leap forward in the development of trustworthy AI systems. With RAG, AI is able to create responses that are based on actual knowledge, not just a shot in the dark.

When it comes to business, implementing RAG is more than just adopting new technology. It means faster workflows, better decision-making, and scalable AI solutions that can easily integrate with current development processes.

Key Takeaways

- RAG combines retrieval and generation for accuracy

- Vector embeddings enable semantic understanding

- Prompt engineering improves reliability

- RAG powers enterprise AI and coding assistants

Strategic Importance for Developers, Teams, and How BNXT Can Help

RAG empowers teams to build intelligent systems that truly reflect their business knowledge—not just generic information. It accelerates innovation while significantly improving software quality and decision accuracy.

BNXT can help teams design effective RAG architectures, build strong enterprise search solutions, and deploy secure AI solutions that integrate with their existing infrastructure.

People Also Ask

1. What are the key challenges RAG helps solve in AI systems?

It improves factual grounding, reduces hallucinations, and enables AI to work with domain-specific knowledge.

2. How does LangChain support RAG workflows?

LangChain provides orchestration tools for retrieval, prompts, and memory.

3. How can organizations reduce AI hallucinations in production systems?

By using high-quality knowledge bases, validation layers, and monitoring.

4. Can RAG be used for chatbot applications?

Yes, it powers customer support bots and enterprise assistants.
