Company

About Us

We drive innovation and technological excellence.

Our Founders

Meet the brilliant minds shaping our vision.

Life at BuildNexTech

Experience a culture of growth and creativity.

Media

Explore our journey and industry success.

Contact Us

Reach out for collaborations and opportunities.

Services

Web Development

Create powerful, scalable, and dynamic web applications tailored to your business needs.

Mobile App Development

Build intuitive, high-performance mobile apps that deliver exceptional user experiences.

Cloud Migration Services

Seamlessly migrate and optimize your infrastructure with AWS, GCP, and Azure.

AI Services

Smart enterprise AI solutions: custom development, generative AI, and intelligent chatbots.

Low Code

Design and launch custom websites visually without code for rapid deployment.

Business Intelligence

Rapid, secure app development with low-code and no-code tools, where speed meets scale.

Flutter Flow

Build cross-platform mobile apps quickly with visual Flutter-based development.

Resources

Blogs

Explore insights and expertise driving innovation in technology and development.

Case Studies

See how industry leaders ensure top-tier software quality and performance.

BI Templates

See how industry leaders use BI templates to make smarter, data-driven decisions.

Newsletters

Stay informed with the latest updates on our innovation and growth-driven culture.

Testimonials

See how businesses across industries achieve success with our expert solutions.

Industries

Find tailored solutions, expert support, and cutting-edge development strategies.

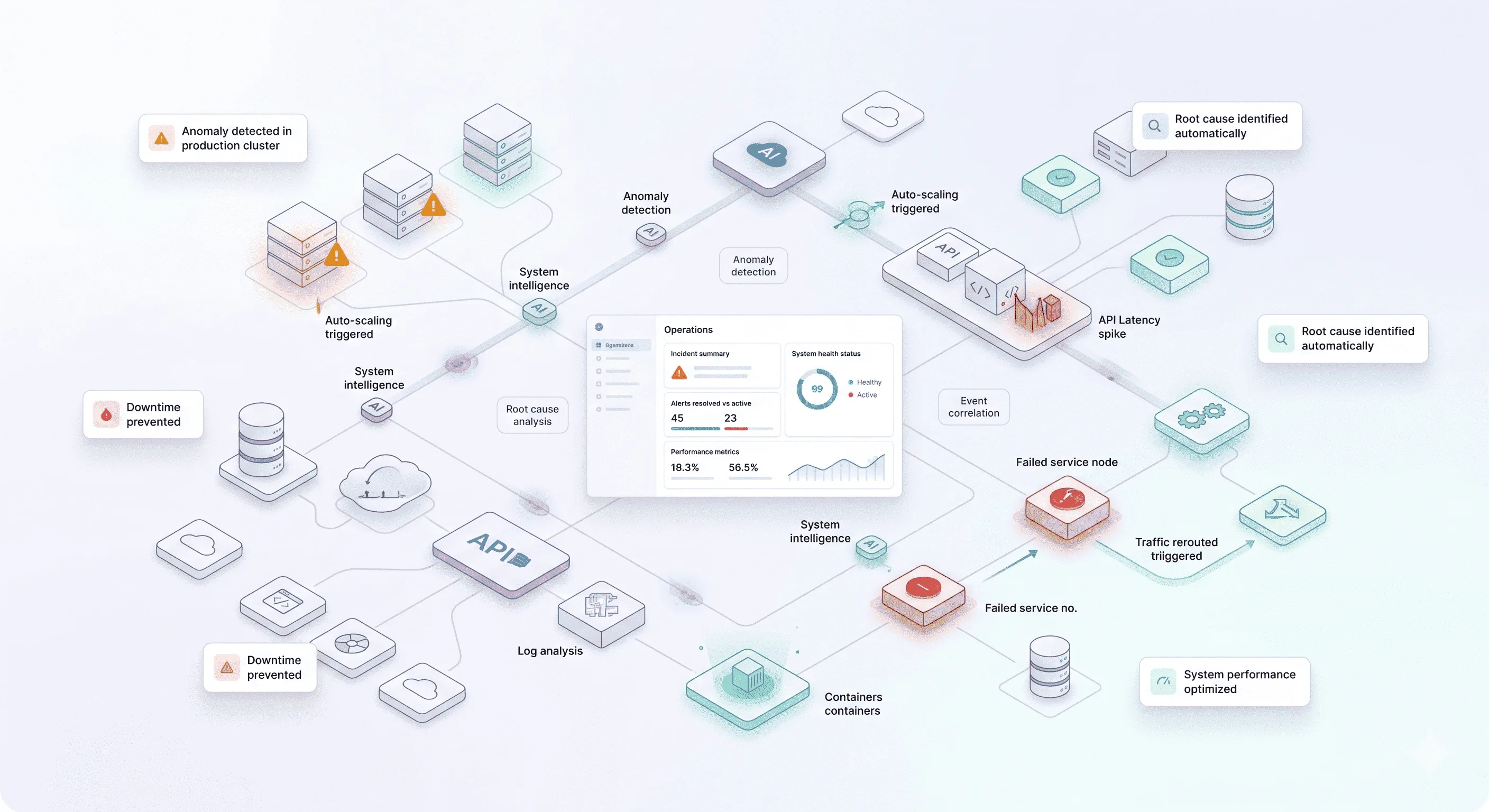

AI Automation

Explore insights and expertise driving innovation in technology and development.

Automate Workflow

Explore insights and expertise driving innovation in technology and development.